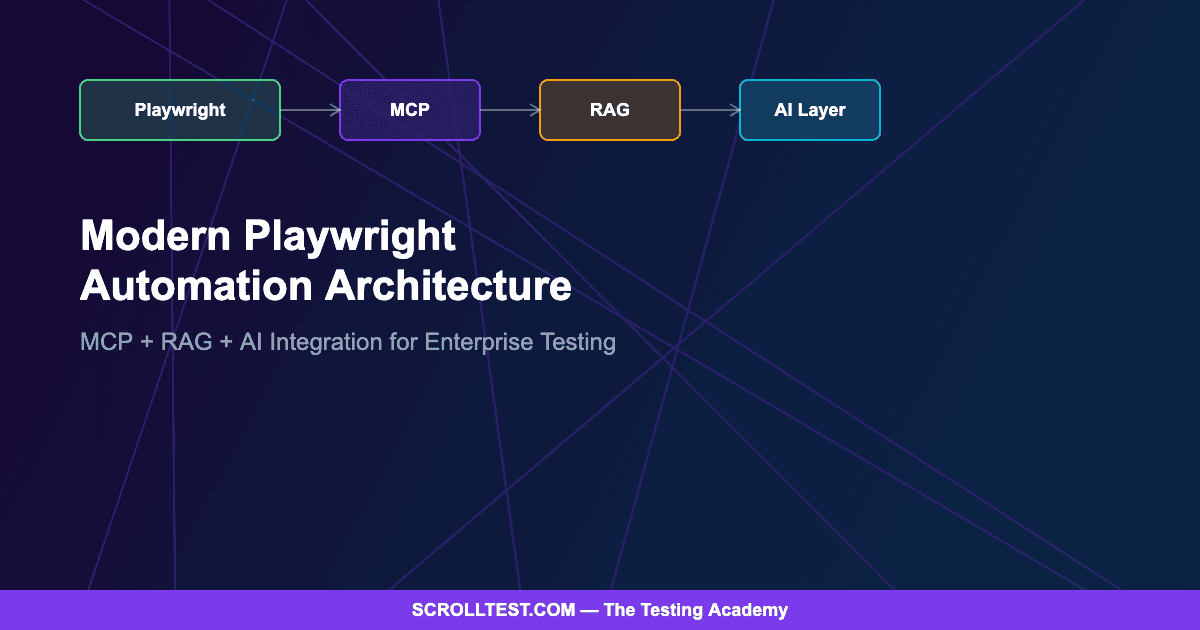

The Modern Playwright Automation Architecture: How to Integrate MCP, RAG, and AI for Enterprise Testing

Playwright has evolved far beyond a browser automation library. In 2026, the most effective QA teams are building intelligent testing ecosystems that combine Playwright with Model Context Protocol (MCP), Retrieval-Augmented Generation (RAG), and AI-assisted test generation. This guide breaks down the complete architecture — from first install to production-grade AI-augmented testing — with real code patterns and honest trade-offs.

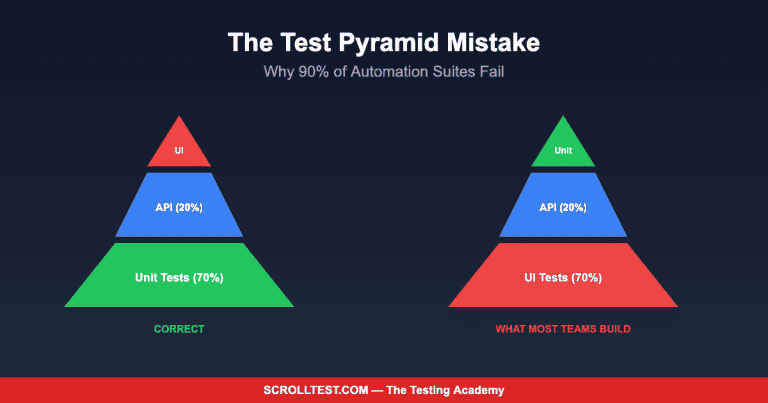

I have been writing about AI-powered testing for the better part of two years now, and one pattern keeps surfacing in every conversation I have with senior SDETs: the gap between “we use Playwright” and “we have a testing ecosystem” is widening. Most teams are still writing Playwright tests the same way they wrote Selenium tests five years ago — procedural scripts, hardcoded selectors, and a prayer that the CI pipeline stays green. Meanwhile, the teams pulling ahead are treating their test infrastructure as a platform, not a script collection.

This article is the architecture guide I wish existed when I started building AI-augmented test systems. It covers the full stack: Playwright as the execution engine, MCP as the centralized data and context layer, RAG for intelligent test generation, and CI/CD as the orchestration backbone.

Contents

The Foundation: Playwright as Your Execution Engine

Everything starts with Playwright’s core architecture. Unlike older frameworks, Playwright communicates with browsers over the Chrome DevTools Protocol (CDP) for Chromium and equivalent protocols for Firefox and WebKit. This means your test code has direct, low-level access to the browser engine — not a translation layer sitting on top of WebDriver.

The practical implication is speed and reliability. Playwright’s auto-waiting mechanism eliminates the single largest source of test flakiness in traditional frameworks: timing issues. When you write await page.click('#submit'), Playwright automatically waits for the element to be visible, enabled, and stable before clicking. No explicit waits. No sleep statements. No flaky retries.

Your project structure should reflect the platform mindset from day one. Separate your test scenarios from your page objects, your fixtures from your utilities, and your test data from your configuration. A well-organized Playwright project looks like this: tests/ for spec files organized by feature, pages/ for page object models, fixtures/ for shared setup and teardown, utils/ for helper functions, and config/ for environment-specific settings.

Layer Two: Model Context Protocol (MCP) as Your Data Backbone

MCP is the piece most teams are missing. Model Context Protocol provides a standardized way for AI models to access external data sources, tools, and context. In a testing context, MCP becomes the centralized layer that manages test data, environment configuration, and the bridge between your AI tools and your test execution engine.

Think of MCP as the nervous system of your testing architecture. Instead of hardcoding test data in JSON fixtures or pulling from a shared database, MCP provides a protocol for your tests (and your AI assistants) to request exactly the context they need. Need a valid user credential for a staging environment? MCP serves it. Need the current feature flag state for your test environment? MCP knows. Need to understand what changed in the last deployment so you can prioritize which tests to run? MCP connects to your deployment pipeline and provides that context.

The architecture pattern works like this: your Playwright tests connect to an MCP server that aggregates data from multiple sources — your test data store, your environment configuration, your CI/CD pipeline state, and your defect tracking system. When a test needs context, it queries MCP through a standardized protocol rather than reaching directly into databases or config files. This decoupling means you can swap data sources without changing test code.

Layer Three: RAG for Intelligent Test Generation

This is where the architecture becomes genuinely interesting. Retrieval-Augmented Generation combines a large language model with a retrieval system that pulls relevant documents from your own codebase, test history, and domain knowledge. In testing, RAG means your AI assistant does not generate tests from general training data — it generates tests grounded in your specific application, your existing test patterns, and your historical defect data.

The setup requires three components: a vector database (Pinecone, Weaviate, or even a local ChromaDB instance), an embedding model to convert your test artifacts into vectors, and an LLM to generate test code based on retrieved context. You feed the system your existing Playwright tests, your page objects, your application documentation, and your bug reports. When you ask it to generate a new test, it retrieves the most relevant existing patterns and generates code that matches your style, uses your page objects, and targets the areas where bugs have historically appeared.

The honest reality is that RAG-generated tests still require human review. In my experience, roughly 60-70% of generated tests are usable with minor modifications, about 20% need significant rework, and 10% are unusable. But that 60-70% represents a massive productivity gain, especially for regression test expansion where you need broad coverage across similar user flows.

CI/CD Integration: Orchestrating the Ecosystem

The CI/CD layer ties everything together. Your pipeline should handle three distinct concerns: running your existing Playwright tests on every commit, using MCP to determine which tests are most relevant to the changes being deployed, and optionally triggering RAG-based test generation for new features that lack coverage.

GitHub Actions has become the default choice for most teams, and for good reason. A well-configured workflow installs Playwright browsers, runs your test suite in parallel across multiple shards, captures trace files for failures, and publishes HTML reports as artifacts. The key addition for the AI-augmented stack is a pre-test step that queries MCP for deployment context and adjusts the test execution plan accordingly.

Jenkins remains relevant for enterprises that need on-premise execution or have complex pipeline orchestration requirements. Docker containerization ensures consistent test environments regardless of which CI system you use — your Playwright tests run inside a container with pinned browser versions, eliminating “works on my machine” failures.

AI-Assisted Testing: GitHub Copilot and Beyond

The AI assistance layer operates at a different level than RAG. While RAG generates complete test scenarios, AI copilots like GitHub Copilot, Cursor, and ChatGPT assist with moment-to-moment coding decisions: autocompleting locator strategies, suggesting assertion patterns, refactoring page objects, and optimizing test logic. These tools work best when your codebase is well-structured because they use your existing code as context for their suggestions.

The practical workflow is straightforward. You write the test description as a comment, the AI suggests the implementation. You write the page object interface, the AI fills in the locator strategies. You write the first test in a pattern, the AI replicates that pattern for the remaining scenarios. The quality of suggestions scales directly with the quality of your existing code — which is why the foundation and structure layers matter so much.

The Honest Caveats

This architecture is not trivial to implement. MCP is still an evolving protocol, and production-grade implementations require engineering investment that many teams cannot justify without clear ROI projections. RAG-based test generation sounds transformative on paper, but the 60-70% usability rate means you still need senior engineers reviewing and correcting generated tests — it is acceleration, not automation.

The cost implications are real. Vector databases have hosting costs. LLM API calls for RAG generation accumulate. Running AI-augmented pipelines takes longer than pure Playwright execution. Teams should start with the foundation (well-structured Playwright + CI/CD) and add MCP and RAG layers incrementally, measuring the actual productivity gains at each step.

I also want to be transparent about sample size. The usability statistics I cited come from my own experience and conversations with approximately two dozen teams. This is not peer-reviewed research. Your mileage will vary depending on application complexity, codebase quality, and team skill level.

Building This Architecture Incrementally

The biggest mistake teams make is trying to implement all four layers simultaneously. Start with Playwright fundamentals: solid project structure, reliable page objects, and a green CI pipeline. Once that foundation is stable, add MCP for centralized test data management. Only then should you experiment with RAG for test generation and AI copilot integration.

The teams that succeed with this architecture share one trait: they treat their test infrastructure as a product with its own roadmap, its own quality standards, and its own iteration cycles. The tools are secondary to the mindset. Playwright, MCP, RAG, and AI copilots are powerful individually, but their real value emerges when they are integrated into a coherent ecosystem designed around your team’s specific testing challenges.

Building this kind of AI-augmented testing architecture — from your first Playwright project to a full MCP + RAG pipeline — is exactly what I cover in the advanced track of my AI-Powered Testing Mastery course. The architecture patterns in this article form the backbone of Modules 8 through 12.