Why 90% of Test Automation Suites Fail: The Test Pyramid Mistake Every QA Team Makes

The test pyramid is the most cited and least followed principle in test automation. Most teams know they should have more unit tests than UI tests, yet the majority of automation suites I audit are inverted — heavy on fragile end-to-end tests, light on fast unit and API tests. This article dissects why the inversion happens, what it costs, and how to fix your test distribution without starting over.

I have audited test suites for more than forty teams over the past three years, and the pattern is remarkably consistent. A team decides to invest in test automation. They hire an SDET or assign a developer to the task. That person starts with what is most visible and most requested by stakeholders: end-to-end UI tests. Six months later, they have 300 Selenium or Playwright tests, a CI pipeline that takes 45 minutes, a flaky test rate above 10%, and a growing sense that automation is not delivering on its promise.

The root cause is almost always the same: they built the pyramid upside down.

What the Test Pyramid Actually Means

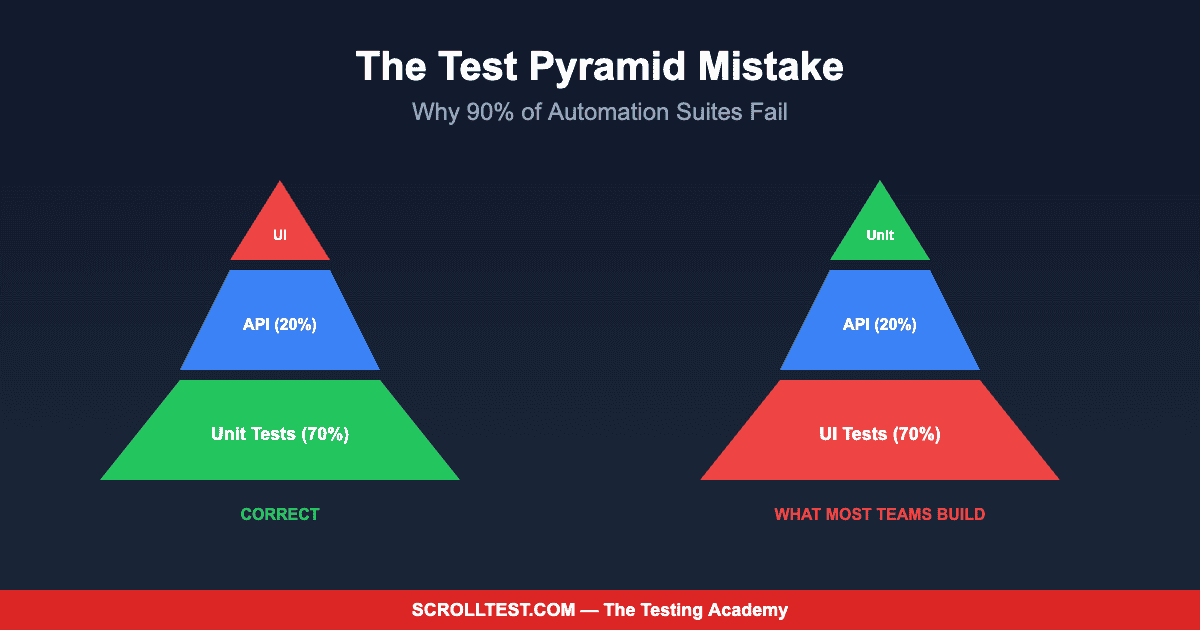

Mike Cohn introduced the test pyramid in 2009, and the concept is elegant in its simplicity. The pyramid has three layers. The base, the widest layer, is unit tests. These are fast, cheap to write, and cheap to maintain, and they run in milliseconds. They test individual functions, methods, and classes in isolation. The middle layer is integration or API tests. These test how components interact, validate business logic across service boundaries, and run in seconds. The top layer, the narrowest, is UI or end-to-end tests. These simulate real user journeys through the browser; they are slow, expensive, and inherently fragile.
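To make the base layer concrete, here is a minimal sketch of a unit test written with Python's built-in unittest. The `OrderCalculator` class and its tax-rate lookup are hypothetical. What matters is that the only dependency is replaced with a stub, so each test exercises one class in isolation.

```python
import unittest
from typing import Callable


class OrderCalculator:
    """Hypothetical class under test: sums an order and applies a regional tax rate."""

    def __init__(self, tax_rate_lookup: Callable[[str], float]):
        # The tax lookup is injected so a test can swap the real service for a stub.
        self._tax_rate_lookup = tax_rate_lookup

    def total(self, item_prices: list[float], region: str) -> float:
        subtotal = sum(item_prices)
        rate = self._tax_rate_lookup(region)
        return round(subtotal * (1 + rate), 2)


class OrderCalculatorTest(unittest.TestCase):
    def setUp(self):
        # Stub: a fixed in-memory table instead of a network call to a tax service.
        rates = {"EU": 0.20, "US": 0.0}
        self.calc = OrderCalculator(tax_rate_lookup=lambda region: rates[region])

    def test_applies_regional_tax(self):
        self.assertEqual(self.calc.total([10.0, 5.0], "EU"), 18.0)

    def test_zero_tax_region(self):
        self.assertEqual(self.calc.total([10.0, 5.0], "US"), 15.0)

    def test_empty_order_is_zero(self):
        self.assertEqual(self.calc.total([], "EU"), 0.0)
```

Nothing here touches a network, database, or browser, so the whole class runs in milliseconds with `python -m unittest` or with pytest, which also collects `unittest.TestCase` subclasses.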

The pyramid shape communicates a ratio. You should have many more unit tests than API tests, and many more API tests than UI tests. A commonly cited target ratio is 70% unit, 20% API, 10% UI. The exact numbers vary by application type, but the principle holds: invest most heavily in the fastest, most stable test type and use the slower, more fragile types sparingly for what only they can validate.
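Comparing a suite against the guideline is simple arithmetic. The sketch below is illustrative: the 70/20/10 targets come from the text, while the layer labels and the example counts are hypothetical.

```python
# Guideline split from the text; a starting point, not a rule.
TARGET_SHARE = {"unit": 0.70, "api": 0.20, "ui": 0.10}


def layer_shares(counts: dict[str, int]) -> dict[str, float]:
    """Convert raw per-layer test counts into fractions of the suite."""
    total = sum(counts.get(layer, 0) for layer in TARGET_SHARE)
    if total == 0:
        raise ValueError("no tests counted")
    return {layer: counts.get(layer, 0) / total for layer in TARGET_SHARE}


def deviation_from_target(counts: dict[str, int]) -> dict[str, float]:
    """Positive values mean a layer is over-represented relative to the target."""
    shares = layer_shares(counts)
    return {layer: round(shares[layer] - TARGET_SHARE[layer], 3) for layer in TARGET_SHARE}
```

For a hypothetical inverted suite of 40 unit, 20 API, and 140 UI tests, `deviation_from_target` reports the UI layer 60 percentage points over target and the unit layer 50 points under it.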

Why Teams Invert the Pyramid

The inversion is not a technical failure — it is an organizational one. Three forces consistently push teams toward UI-heavy automation. First, stakeholders understand UI tests because they can watch them run. A browser opening, clicking buttons, and filling forms is tangible in a way that a unit test output is not. When a VP asks “show me what our automation does,” nobody pulls up a Jest console output. They demo the Selenium suite.

Second, QA teams often lack access to the application codebase. They are given a deployed environment and asked to automate against it. Their only interface is the browser. Writing unit tests requires access to source code, understanding of internal architecture, and collaboration with developers — none of which is guaranteed in organizations that treat QA as a separate function rather than an integrated discipline.

Third, the tooling incentives are misaligned. The test automation industry markets browser automation tools, not unit testing frameworks. Conferences feature Playwright and Selenium workshops, not pytest and JUnit deep dives. Career paths reward “automation engineers” who build UI frameworks, not QA engineers who write comprehensive unit test suites.

The Real Cost of an Inverted Pyramid

An inverted pyramid is expensive in ways that are not immediately obvious. The first cost is pipeline duration. A suite of 500 UI tests running sequentially takes 30-60 minutes. Even with aggressive parallelization, you are looking at 10-15 minutes. Developers waiting 15 minutes for CI feedback change their behavior — they batch commits, skip running tests locally, and merge without waiting for green. Fast feedback enables good engineering practices. Slow feedback degrades them.
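The parallelization numbers are easy to sanity-check before buying more CI runners. This sketch estimates wall-clock time by assigning tests to workers with the longest-processing-time-first (LPT) heuristic. The 6-second average UI test duration in the usage note is an assumption, not audit data.

```python
import heapq


def estimate_wall_clock(durations_s: list[float], workers: int) -> float:
    """Estimate suite wall-clock seconds when tests are spread across parallel workers.

    LPT heuristic: take tests from slowest to fastest and always give the next
    one to the least-loaded worker. Ignores browser startup, shared test
    environments, and retries, which usually make real runs slower.
    """
    if workers < 1:
        raise ValueError("workers must be >= 1")
    loads = [0.0] * workers  # min-heap of accumulated seconds per worker
    for duration in sorted(durations_s, reverse=True):
        lightest = heapq.heappop(loads)
        heapq.heappush(loads, lightest + duration)
    return max(loads)
```

With 500 UI tests at an assumed 6 seconds each, one worker gives 3000 seconds (50 minutes) and four workers give 750 seconds (12.5 minutes), in line with the ranges above. The same 500 tests written as unit tests at a few milliseconds each would finish in seconds on a single worker.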

The second cost is maintenance burden. UI tests break when the interface changes, even if the underlying functionality is untouched. A CSS class rename, a button relocation, a modal redesign — each of these triggers test failures that require investigation and repair but represent zero actual defects. In my audits, I have found that teams with inverted pyramids spend 40-60% of their automation effort on maintenance rather than new coverage.

The third cost is trust erosion. When the test suite fails frequently for reasons unrelated to real bugs, the team stops trusting the results. Failures get ignored. Retries become the default. The “rerun and hope” pattern takes hold. At that point, you have an automation suite that runs but does not inform — which is worse than having no automation at all, because it consumes resources while providing false confidence.
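One way to measure the “rerun and hope” pattern is to flag tests that both passed and failed against the same commit: the code did not change, so the outcome flip came from the test or its environment. The sketch assumes CI results can be exported as (test name, commit SHA, passed) tuples. That record shape is hypothetical.

```python
from collections import defaultdict


def find_flaky_tests(runs: list[tuple[str, str, bool]]) -> set[str]:
    """Return tests whose outcome differed across runs of the same commit."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Seeing both True and False for one (test, commit) pair means the result flipped.
    return {test for (test, _sha), seen in outcomes.items() if len(seen) == 2}
```

A test that fails only on a newer commit is not flagged, because that failure may be a genuine regression.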

Auditing Your Current Test Distribution

Before you can fix the pyramid, you need to know how inverted yours is. Count the tests at each layer. Calculate the execution time at each layer. Track the failure rate at each layer over the past month, separating genuine bug detections from false failures (environment issues, timing problems, test data corruption). The resulting data will tell you exactly where your automation investment is concentrated and where it is wasted.
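The audit above can be scripted once results are exported from CI. This is a minimal sketch: the `RunRecord` fields are a hypothetical export format, and `genuine_bug` stands for a manual triage decision made while reviewing each failure.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RunRecord:
    """One execution of one test, exported from CI history (hypothetical shape)."""
    name: str
    layer: str                 # "unit", "api" or "ui"
    duration_s: float
    failed: bool
    genuine_bug: bool = False  # set during triage; ignored when failed is False


def audit(records: list[RunRecord]) -> dict[str, dict[str, float]]:
    """Per-layer test count, share of execution time, and failures split by cause."""
    names: dict[str, set[str]] = defaultdict(set)
    seconds: dict[str, float] = defaultdict(float)
    runs: dict[str, int] = defaultdict(int)
    genuine: dict[str, int] = defaultdict(int)
    false_alarms: dict[str, int] = defaultdict(int)

    for r in records:
        names[r.layer].add(r.name)
        seconds[r.layer] += r.duration_s
        runs[r.layer] += 1
        if r.failed and r.genuine_bug:
            genuine[r.layer] += 1
        elif r.failed:
            # Environment issues, timing problems, corrupted test data, etc.
            false_alarms[r.layer] += 1

    total_seconds = sum(seconds.values()) or 1.0  # avoid dividing by zero on an empty export
    return {
        layer: {
            "tests": len(names[layer]),
            "share_of_time": seconds[layer] / total_seconds,
            "genuine_failures": genuine[layer],
            "false_failures": false_alarms[layer],
            "false_failure_rate": false_alarms[layer] / runs[layer],
        }
        for layer in names
    }
```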

Most teams I work with discover that their UI tests account for 60-80% of total tests, 90% of execution time, and 85% of false failures. The unit test layer, if it exists at all, covers only whatever code individual developers happened to test, with no systematic coverage strategy behind it. The API test layer is typically the smallest, despite being the layer with the best cost-to-value ratio for most modern applications.

Fixing the Pyramid: A Practical Roadmap

The fix is incremental, not revolutionary. Do not delete your existing UI tests. Instead, stop adding new ones unless they validate something that only a browser test can validate — visual rendering, complex user interactions, critical purchase or signup flows. For everything else, push the test down to a lower layer.
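The rule in this paragraph can be written as a small triage function so it is applied the same way to every new test request. This is a hypothetical sketch: the concern labels are invented names for the three browser-only cases listed above, and the unit/API split follows the layer definitions given earlier.

```python
# Invented labels for the cases the text says only a browser test can validate.
BROWSER_ONLY = {"visual_rendering", "complex_interaction", "critical_flow"}


def target_layer(concerns: set[str], crosses_component_boundary: bool) -> str:
    """Pick the lowest layer that can still validate what a proposed test checks."""
    if concerns & BROWSER_ONLY:
        return "ui"
    if crosses_component_boundary:
        # Interaction between components or services: an API/integration test.
        return "api"
    # Logic inside one function or class: a unit test.
    return "unit"
```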

Start by identifying UI tests that are actually testing API behavior through the browser. A test that fills a form, submits it, and checks a success message is mostly testing the API endpoint, not the UI. Replace it with a direct API test that sends the same payload and validates the response. The UI test can be simplified to verify that the form renders correctly and the success message displays — a much lighter test that breaks less often.
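Here is one way the pushed-down test might look. To keep it self-contained, the sketch uses only Python's standard library and starts a throwaway local server as a stand-in for the real backend. The `/api/signup` route, its payload fields, and its responses are all hypothetical. Against a real environment you would drop `FakeSignupHandler` and point `base` at your test deployment.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class FakeSignupHandler(BaseHTTPRequestHandler):
    """Stand-in backend; the route and response bodies are hypothetical."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        if self.path == "/api/signup" and "@" in payload.get("email", ""):
            status, body = 201, {"message": "Account created"}
        else:
            status, body = 400, {"error": "invalid email"}
        data = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

    def log_message(self, *args):
        pass  # keep test output quiet


def post_json(url: str, payload: dict) -> tuple[int, dict]:
    """Send the JSON payload the form would submit; return (status, parsed body)."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with urllib.request.urlopen(req, timeout=5) as resp:
            return resp.status, json.loads(resp.read())
    except urllib.error.HTTPError as err:  # 4xx/5xx responses raise; keep their body
        return err.code, json.loads(err.read())


def test_signup_api():
    server = HTTPServer(("127.0.0.1", 0), FakeSignupHandler)  # port 0: pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_port}"
    try:
        status, body = post_json(f"{base}/api/signup",
                                 {"email": "ada@example.com", "password": "s3cret!"})
        assert status == 201
        assert body["message"] == "Account created"

        status, body = post_json(f"{base}/api/signup", {"email": "not-an-email"})
        assert status == 400
    finally:
        server.shutdown()
        server.server_close()
```

The test checks both the success path and a validation error in well under a second, with no browser involved. The remaining UI test then only needs to confirm that the form renders and the success message appears.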

Next, work with your development team to establish unit testing standards. Define minimum coverage targets for new code. Make unit test execution a required check on every pull request, and treat missing tests as grounds for rejecting a PR in review, so that no code merges without tests. This is a cultural change as much as a technical one, and it requires buy-in from engineering leadership, not just the QA team.
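One way to enforce a coverage target for new code, without letting legacy gaps block every PR, is to measure coverage only on the lines a change touches. The sketch shows the calculation. The three input mappings are hypothetical shapes (changed lines from a diff, executable and covered lines from a coverage report), and the 80% default is illustrative only. In practice, coverage.py's `fail_under` setting enforces a whole-project threshold, and tools such as diff-cover apply this kind of check to changed lines.

```python
def new_code_coverage(
    changed_lines: dict[str, set[int]],
    executable_lines: dict[str, set[int]],
    covered_lines: dict[str, set[int]],
) -> float:
    """Fraction of changed *executable* lines that the test run executed.

    Each argument maps a file path to a set of line numbers. Changed lines that
    are not executable (blank lines, comments) are ignored.
    """
    relevant = 0
    hit = 0
    for path, lines in changed_lines.items():
        measurable = lines & executable_lines.get(path, set())
        relevant += len(measurable)
        hit += len(measurable & covered_lines.get(path, set()))
    return 1.0 if relevant == 0 else hit / relevant


def passes_gate(coverage: float, minimum: float = 0.8) -> bool:
    """Hypothetical PR gate: block the merge when new-code coverage is below target."""
    return coverage >= minimum
```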

For the API layer, tools like REST Assured (Java), pytest with requests (Python), and Playwright’s built-in API testing (TypeScript) make it straightforward to build comprehensive API test suites. These tests run in seconds, rarely produce false failures, and validate the business logic that matters most.

The Honest Caveats

The 70/20/10 ratio is a guideline, not a rule. Some applications — particularly those with complex visual interfaces, drag-and-drop interactions, or real-time collaborative features — legitimately need a higher proportion of UI tests. The test pyramid is a heuristic, and heuristics have exceptions.

My audit data comes from teams that sought help with their automation strategy, which introduces selection bias. Teams with well-balanced pyramids do not hire consultants to audit their test distribution. The inversion rate I observe (approximately 80% of audited teams) is likely higher than the industry average.

Fixing the pyramid also requires organizational authority that QA teams do not always have. Mandating unit test coverage requires developer cooperation. Accessing API endpoints for direct testing requires documentation and environment access. These are political challenges as much as technical ones, and this article cannot solve your organization’s collaboration dynamics.

Why This Matters More in 2026

The test pyramid principle is seventeen years old, and it matters more now than when it was introduced. Modern applications are more complex, deployment cycles are faster, and the cost of slow feedback is higher in a continuous delivery world. AI-assisted test generation is making it easier to create tests at every layer, but it is also making it easier to generate the wrong tests at the wrong layer if your strategy is not clear.

The teams that will thrive in the next wave of QA evolution are the ones with balanced pyramids: fast unit tests catching logic errors in milliseconds, comprehensive API tests validating business rules in seconds, and a thin layer of targeted UI tests confirming that the user experience works end-to-end. That is not a new insight. It is a proven one that most teams still have not implemented.

Building a properly balanced test pyramid — from unit testing fundamentals to API test architecture to strategic UI coverage — is the core curriculum of my AI-Powered Testing Mastery course. Module 5 includes the exact audit template I use with consulting clients to diagnose and fix inverted pyramids.