Flaky Tests Are Killing Your Pipeline: The Complete Guide to Detection, Quarantine, and Prevention in 2026

The 3 AM Slack Message That Changed How I Think About Test Automation

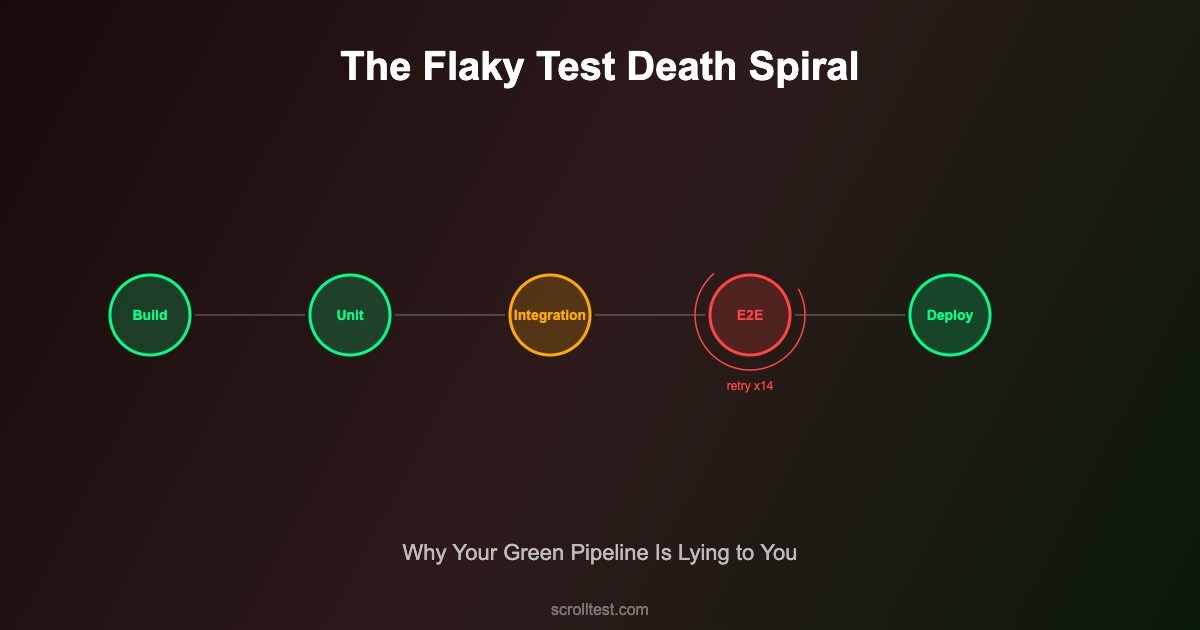

Last October, our deployment pipeline went red at 2:47 AM. The on-call engineer — a senior SDET with six years of experience — spent 90 minutes investigating. She pulled logs, re-ran the suite, checked for infrastructure issues, compared environment configs. At 4:22 AM, she pushed a commit with the message: “retry flaky checkout test, env timing issue.”

The next morning, the same test failed again. Different build. Different branch. Same confident green checkmark after a retry.

Two weeks later, a real payment bug shipped to production. The test that should have caught it? It had been silently retried and auto-passed 14 times in the previous month. Nobody noticed because the pipeline stayed green.

This is not a rare story. This is the most common story in test automation, and it is costing engineering teams far more than they realize.

The Flaky Test Crisis Nobody Talks About Honestly

Flaky tests are automated tests that produce inconsistent results — passing and failing without any code change. Every team knows they exist. Most teams tolerate them. Very few teams understand how much damage they actually cause.

The numbers are staggering. Research from Microsoft found that roughly 25% of test failures in large-scale CI systems are caused by flaky tests rather than actual code defects. Google’s internal data paints an even worse picture — nearly 84% of retried test failures were flakiness, not genuine regressions. Slack’s engineering team reported that flaky tests accounted for 56.76% of their CI failures before they invested in dedicated remediation.

A five-year industrial case study found that dealing with flaky tests consumed at least 2.5% of total productive developer time. For a team of 50 developers, that is about 1.25 person-years lost every year: not building features, not fixing real bugs, just chasing ghosts in the test suite.

But the real cost is not time. It is trust.

When your pipeline cries wolf enough times, developers stop listening. They stop investigating failures. They hit “re-run” reflexively. They add retry logic and move on. And when a genuine regression finally appears, it hides behind the same “probably just flaky” assumption that let 14 previous failures pass unnoticed.

59% of developers encounter flaky tests at least monthly. The proportion of teams experiencing test flakiness grew from 10% in 2022 to 26% in 2025. This is not a problem that is getting better on its own.

The Five Root Causes (and Why Most Teams Only Fix Two)

Most flaky test guides give you a laundry list of causes. That is not useful. What is useful is understanding the five structural root causes and knowing which ones your team is actually equipped to fix versus which ones require architectural changes.

1. Timing and Synchronization Failures

This is the root cause everyone knows about. A test clicks a button before the page finishes loading. An API call returns before the database write completes. A WebSocket event arrives after the assertion runs.

Most teams fix this by adding sleep statements or padding timeouts. That is the wrong fix. It trades flakiness for slowness, and it does not actually solve the synchronization problem. It just makes the timing window larger.

The correct fix is using framework-native wait mechanisms. Playwright’s auto-wait handles this at the framework level — tests wait for elements to be actionable before interacting with them. Teams that migrate from Selenium to Playwright report up to 50% fewer flaky tests primarily because of this single architectural difference.

    // Bad: arbitrary sleep
    await page.waitForTimeout(3000);
    await page.click('#submit');

    // Better: explicit wait for a specific condition
    await page.waitForSelector('#submit', { state: 'visible' });
    await page.click('#submit');

    // Best: Playwright auto-wait (built in)
    await page.click('#submit'); // automatically waits until the element is actionable

2. Test Data Contamination

Test A creates a user. Test B expects to find exactly 5 users in the database. Test A runs before Test B on Monday. Test B runs first on Tuesday. Monday: green. Tuesday: red. Nobody changed anything.

Shared mutable state between tests is the second most common cause of flakiness, and it is harder to fix than timing issues because it requires changing how you think about test isolation. Each test must create its own data, operate on it, and clean it up — or better yet, run against a fresh, isolated data context every time.

    // Bad: shared test data
    test('should display user list', async () => {
      const users = await api.getUsers(); // depends on whatever other tests left behind
      expect(users.length).toBe(5);
    });

    // Good: self-contained test data
    test('should display user list', async () => {
      // Arrange: create exactly what this test needs
      const testUsers = await Promise.all(
        Array.from({ length: 5 }, (_, i) =>
          api.createUser({ name: `test-user-${Date.now()}-${i}` })
        )
      );
      try {
        const users = await api.getUsers({ createdBy: testUsers[0].batchId });
        expect(users.length).toBe(5);
      } finally {
        // Cleanup runs even when the assertion above fails
        await Promise.all(testUsers.map(u => api.deleteUser(u.id)));
      }
    });

3. Environment and Infrastructure Instability

Your test passes locally, fails in CI. Passes in CI on the first run, fails on the second. Passes on Monday morning, fails on Friday afternoon when the shared staging database is under heavier load.

Infrastructure flakiness is the root cause most teams underinvest in. The test code is correct. The application code is correct. The environment is the variable. Docker containers that do not fully initialize, shared test databases with connection pool exhaustion, CI runners with varying CPU allocation, network latency between services — all of these introduce non-determinism that no amount of test code improvement will fix.

SRE best practices show that when teams treat test infrastructure with production-grade standards — monitoring, reproducible environments, resource guarantees — they see 20% fewer false test failures.

4. Non-Deterministic Application Behavior

Animations, random ordering, timestamps, UUIDs, A/B test variants, third-party widgets that load asynchronously — your application itself may be non-deterministic by design. Testing deterministic outcomes against non-deterministic behavior is a structural mismatch.

The fix here is not in the tests. It is in building testability into the application: deterministic modes for animations, seeded random generators, clock mocking, and feature flag controls that lock behavior during test runs.
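As a rough sketch of what that can look like, the snippet below injects the random source and the clock instead of calling Math.random() and new Date() directly. Everything here is illustrative: `Env`, `assignVariant`, `productionEnv`, and `testEnv` are hypothetical names, not part of any framework, and the generator is a small seeded PRNG in the style of mulberry32.

```typescript
// Small seeded PRNG in the style of mulberry32.
// The same seed always produces the same sequence of numbers in [0, 1).
function seededRandom(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    state = (state + 0x6d2b79f5) >>> 0;
    let t = state;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// The non-deterministic inputs the application depends on
interface Env {
  random: () => number;
  now: () => Date;
}

// Hypothetical application code: picks an A/B variant and stamps the time.
// It never touches Math.random or Date directly, so tests can control both.
function assignVariant(env: Env): { variant: 'A' | 'B'; assignedAt: string } {
  return {
    variant: env.random() < 0.5 ? 'A' : 'B',
    assignedAt: env.now().toISOString(),
  };
}

// Production wiring: real randomness, real clock
const productionEnv: Env = { random: Math.random, now: () => new Date() };

// Test wiring: seeded randomness, frozen clock (the date is an arbitrary choice)
function testEnv(seed: number): Env {
  return {
    random: seededRandom(seed),
    now: () => new Date('2026-01-01T00:00:00.000Z'),
  };
}
```

Production code passes `productionEnv`; tests pass `testEnv(42)` and get the same variant and the same timestamp on every run.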

5. Test Suite Ordering Dependencies

Tests that pass when run in a specific order but fail when shuffled are not just flaky — they are ticking time bombs. Parallel execution, sharding across CI nodes, or a simple reorder during refactoring will detonate them.
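One way to find these before they detonate is to shuffle the suite with a recorded seed, so that a failing order can be replayed exactly. This is a minimal sketch, assuming tests are plain functions and shared state can be reset between orderings; `seededShuffle`, `runInOrder`, and `findOrderDependency` are hypothetical helpers, not Playwright APIs.

```typescript
type TestCase = { name: string; run: () => void };

// Fisher-Yates shuffle driven by a seeded linear congruential generator,
// so the same seed always reproduces the same order.
function seededShuffle<T>(items: readonly T[], seed: number): T[] {
  const result = items.slice();
  let state = seed >>> 0;
  const next = (): number => {
    state = (Math.imul(state, 1664525) + 1013904223) >>> 0;
    return state / 4294967296;
  };
  for (let i = result.length - 1; i > 0; i--) {
    const j = Math.floor(next() * (i + 1));
    const tmp = result[i]!;
    result[i] = result[j]!;
    result[j] = tmp;
  }
  return result;
}

// Runs tests in the given order and returns the names of those that threw
function runInOrder(tests: readonly TestCase[]): string[] {
  const failures: string[] = [];
  for (const t of tests) {
    try {
      t.run();
    } catch {
      failures.push(t.name);
    }
  }
  return failures;
}

// Tries each seed in turn; returns the first ordering that produces a failure
function findOrderDependency(
  tests: readonly TestCase[],
  seeds: readonly number[],
  reset: () => void,
): { seed: number; order: string[]; failures: string[] } | null {
  for (const seed of seeds) {
    reset(); // start every ordering from clean shared state
    const order = seededShuffle(tests, seed);
    const failures = runInOrder(order);
    if (failures.length > 0) {
      return { seed, order: order.map(t => t.name), failures };
    }
  }
  return null;
}

// Demo: two tests that quietly share mutable state
let sharedUsers: string[] = [];
const resetSharedUsers = (): void => {
  sharedUsers = [];
};
const demoSuite: TestCase[] = [
  {
    name: 'expects empty user list',
    run: () => {
      if (sharedUsers.length !== 0) throw new Error('expected no users');
    },
  },
  { name: 'creates a user', run: () => { sharedUsers.push('alice'); } },
];
```

Calling `findOrderDependency(demoSuite, [1, 2, 3, 4, 5], resetSharedUsers)` reports the seed and order that broke, which is the piece you need to reproduce the failure locally.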

Most teams fix causes 1 and 2 because those fixes live inside individual test files. Causes 3, 4, and 5 require cross-team collaboration, infrastructure investment, and application architecture changes. That is why most teams only fix half the problem.

The Detect-Quarantine-Fix-Prevent Framework

Here is the framework I have used across three organizations to take flaky test rates from double digits down to under 2%. It is not complicated. It requires discipline.

Phase 1: Detect — Find Your Flaky Tests Systematically

Stop relying on developers to report flakiness. They will not. They will retry and move on. You need automated detection.

Run tests multiple times. The simplest detection method is running your test suite 10+ times against the same commit. Any test that fails at least once but passes at least once is flaky. Playwright has this built in:

    # Run each test 10 times and flag inconsistent results
    npx playwright test --repeat-each=10 --reporter=json > results.json

Track flakiness scores over time. Every test run should feed into a tracking system. A test that fails once in 100 runs has a 1% flakiness rate. A test that fails 8 times in 100 runs has an 8% flakiness rate. Both are flaky, but the second one needs immediate attention.

Use CI-native detection. Most modern CI systems (GitHub Actions, GitLab CI, Jenkins) either have built-in flaky test detection or support plugins that track test outcomes across runs and flag non-deterministic results automatically.

Tools like BuildPulse, Currents, and TestDino specialize in ingesting test results across hundreds of runs and providing flakiness scores, trend analysis, and automated classification.
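If you want to see the detection rule and the flakiness score from this phase in code before adopting a tool, here is a minimal sketch. It assumes you have already flattened your results into one record per test per run against the same commit; the `RunResult` shape and the `summarizeFlakiness` name are assumptions for illustration, not the output format of any reporter.

```typescript
type RunResult = { test: string; passed: boolean };

type FlakinessReport = {
  test: string;
  runs: number;
  failures: number;
  failureRate: number; // failures divided by runs
  flaky: boolean; // failed at least once AND passed at least once
};

function summarizeFlakiness(results: readonly RunResult[]): FlakinessReport[] {
  const counts = new Map<string, { runs: number; failures: number }>();
  for (const r of results) {
    const c = counts.get(r.test) ?? { runs: 0, failures: 0 };
    c.runs += 1;
    if (!r.passed) c.failures += 1;
    counts.set(r.test, c);
  }
  return Array.from(counts.entries())
    .map(([test, c]) => ({
      test,
      runs: c.runs,
      failures: c.failures,
      failureRate: c.failures / c.runs,
      // A test that fails every time is consistently broken, not flaky
      flaky: c.failures > 0 && c.failures < c.runs,
    }))
    .sort((a, b) => b.failureRate - a.failureRate); // worst offenders first
}
```

Sorting by failure rate puts the 8% test ahead of the 1% test, which matches the triage order described above.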

Phase 2: Quarantine — Stop the Bleeding

Once identified, flaky tests must be immediately quarantined. This is not optional and it is not procrastination. A flaky test blocking your main pipeline is actively causing harm — developers lose trust, builds get delayed, and real failures hide behind assumed flakiness.

Quarantining means moving the test to a separate, non-blocking test run. The main pipeline stays green based on reliable tests only. The quarantined tests run in parallel, their results tracked but not gating deployments.

    // playwright.config.ts: separate quarantined tests by file name
    import { defineConfig } from '@playwright/test';

    export default defineConfig({
      projects: [
        {
          name: 'stable',
          testMatch: /.*\.spec\.ts/,
          testIgnore: /.*\.quarantine\.spec\.ts/,
        },
        {
          name: 'quarantine',
          testMatch: /.*\.quarantine\.spec\.ts/,
          retries: 2,
        },
      ],
    });

Critical rule: every quarantined test gets a ticket with an owner and a deadline. Without accountability, quarantine becomes a graveyard where flaky tests go to be forgotten.
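The accountability rule is easy to enforce mechanically. Below is a small sketch, assuming you keep a list of quarantined tests somewhere in the repo; the `QuarantineEntry` shape, the `auditQuarantine` function, and the idea of a checked-in registry are my assumptions, not a feature of Playwright or any CI product.

```typescript
type QuarantineEntry = {
  test: string;
  ticket?: string; // tracker ID
  owner?: string;
  deadline?: string; // ISO date, for example '2026-04-01'
};

type Violation = { test: string; problem: string };

// Flags quarantined tests that lack a ticket, an owner, or a deadline,
// and those whose deadline has already passed.
function auditQuarantine(entries: readonly QuarantineEntry[], today: Date): Violation[] {
  const violations: Violation[] = [];
  for (const e of entries) {
    if (!e.ticket) violations.push({ test: e.test, problem: 'missing ticket' });
    if (!e.owner) violations.push({ test: e.test, problem: 'missing owner' });
    if (!e.deadline) {
      violations.push({ test: e.test, problem: 'missing deadline' });
      continue;
    }
    const due = new Date(e.deadline).getTime();
    if (isNaN(due)) {
      violations.push({ test: e.test, problem: 'invalid deadline' });
    } else if (due < today.getTime()) {
      violations.push({ test: e.test, problem: 'deadline passed' });
    }
  }
  return violations;
}
```

Run it as a scheduled job and fail the job when the returned list is non-empty. That keeps the quarantine suite from turning into the graveyard.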

Phase 3: Fix — Address the Actual Root Cause

This is where the five root causes framework becomes practical. For each quarantined test, diagnose which of the five causes applies and fix accordingly:

Timing issues: Replace sleeps and arbitrary waits with event-driven waits. In Playwright, use waitForResponse, waitForLoadState, or custom wait utilities that poll for specific conditions.

Data contamination: Isolate test data. Use unique identifiers per test run. Implement setup/teardown that creates and destroys test state. Consider database transactions that roll back after each test.

Infrastructure: Containerize test environments. Pin CI runner specs. Use dedicated test databases. Monitor resource utilization during test runs.

Non-deterministic app behavior: Add deterministic modes. Mock external dependencies. Freeze time. Disable animations in test environments.

Ordering dependencies: Run tests in random order regularly. Sharding (--shard in Playwright) and parallel execution also change which tests run together and in what sequence, so they surface order dependencies early.
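For the timing fix, a "custom wait utility that polls for a specific condition" can be as small as the sketch below. It is a generic helper with no framework dependency. `waitUntil` and its option names are hypothetical, and the default timeout and interval are arbitrary starting points rather than recommendations.

```typescript
type WaitOptions = { timeoutMs?: number; intervalMs?: number };

const sleep = (ms: number): Promise<void> =>
  new Promise<void>(resolve => setTimeout(resolve, ms));

// Calls `probe` repeatedly until it returns a value other than undefined,
// or throws once the timeout has elapsed. Errors thrown by the probe are
// treated as "not ready yet" and reported only if the wait times out.
async function waitUntil<T>(
  probe: () => T | undefined | Promise<T | undefined>,
  options: WaitOptions = {},
): Promise<T> {
  const timeoutMs = options.timeoutMs ?? 5000;
  const intervalMs = options.intervalMs ?? 100;
  const deadline = Date.now() + timeoutMs;
  let lastError: unknown;
  for (;;) {
    try {
      const value = await probe();
      if (value !== undefined) return value;
    } catch (err) {
      lastError = err;
    }
    if (Date.now() >= deadline) {
      const reason = lastError instanceof Error ? `: ${lastError.message}` : '';
      throw new Error(`condition not met within ${timeoutMs} ms${reason}`);
    }
    await sleep(intervalMs);
  }
}
```

Unlike a fixed sleep, this returns as soon as the condition holds and fails loudly with a reason when it never does. Inside Playwright tests, the built-in `expect.poll` covers similar ground, so reach for a helper like this mainly in setup code and API-level checks.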

Phase 4: Prevent — Make Flakiness Visible Before Merge

The most overlooked phase. Prevention means catching flakiness before it enters your main branch.

Run new tests multiple times in PR checks. Any test added or modified in a PR should run 5-10 times before merge. If it fails even once, it does not merge.

    # GitHub Actions: run modified tests multiple times
    - name: Detect flaky new tests
      run: |
        # "|| true" keeps the step from failing when no spec files changed
        CHANGED_TESTS=$(git diff --name-only origin/main...HEAD | grep '\.spec\.ts$' || true)
        if [ -n "$CHANGED_TESTS" ]; then
          npx playwright test $CHANGED_TESTS --repeat-each=5
        fi

Set a flakiness budget. Define a maximum acceptable flaky test rate (under 1% is a strong target) and track it as a team metric. When the budget is exceeded, new feature work pauses until flakiness is brought back under threshold. This sounds extreme until you realize the alternative is slow erosion of your entire test suite’s credibility.
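A budget only works if something checks it. Here is a minimal sketch of that check, assuming you already know how many tests in the suite were flagged as flaky. `SuiteStats` and `checkFlakinessBudget` are hypothetical names, and the 1% default simply mirrors the target mentioned above.

```typescript
type SuiteStats = { totalTests: number; flakyTests: number };
type BudgetResult = { rate: number; withinBudget: boolean; message: string };

// Compares the suite-level flaky rate against the agreed budget (a fraction, 0.01 = 1%)
function checkFlakinessBudget(stats: SuiteStats, budget = 0.01): BudgetResult {
  const rate = stats.totalTests === 0 ? 0 : stats.flakyTests / stats.totalTests;
  const withinBudget = rate <= budget;
  const pct = (x: number): string => `${(x * 100).toFixed(2)}%`;
  const message = withinBudget
    ? `Flaky rate ${pct(rate)} is within the ${pct(budget)} budget`
    : `Flaky rate ${pct(rate)} exceeds the ${pct(budget)} budget`;
  return { rate, withinBudget, message };
}
```

Wire the result into whatever your team treats as a gate: a failing scheduled job, a dashboard alert, or a line in the sprint review.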

Review test code like production code. Flaky tests often originate from shortcuts in test code — hardcoded timeouts, shared state, missing assertions. Code review for test files should be as rigorous as for application code.

What This Framework Cannot Fix

I want to be honest about the limitations because most flaky test guides oversimplify this.

Third-party dependencies will always introduce some flakiness. If your tests hit external APIs, payment gateways, or third-party services, you will have non-determinism. Mocking helps but introduces its own risks — your mocks drift from reality. Contract testing tools like Pact help bridge this gap, but some residual flakiness from external dependencies is a reality you manage, not eliminate.

Zero flakiness is not a realistic goal. Aiming for 0% flakiness is like aiming for 100% test coverage — it sounds good in a presentation and wastes enormous effort in practice. The goal is a flakiness rate low enough that developers trust the pipeline and investigate every failure. Under 1% is excellent. Under 2% is very good. Above 5% is where trust erodes.

Retries are a treatment, not a cure. Playwright and most frameworks support automatic retries. Use them, but understand what they are. A test that needs retries to pass consistently is still broken. Retries should be a diagnostic tool (a test that passes on retry is likely flaky), not a permanent workaround. The BrowserStack team puts it well: set retries to 0 or 1 in your main pipeline. If a test needs more than one retry, it needs fixing, not retrying.
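To use retries as a diagnostic, record every attempt and classify the outcome instead of keeping only the final green. The sketch below follows the same three categories Playwright reports (passed, flaky, failed); the `Attempt` type and both function names are mine, for illustration only.

```typescript
type Attempt = 'pass' | 'fail';
type Outcome = 'passed' | 'flaky' | 'failed';

// passed: green on the first attempt
// flaky:  failed first, then passed on some retry
// failed: never passed
function classifyAttempts(attempts: readonly Attempt[]): Outcome {
  if (attempts.length === 0) throw new Error('no attempts recorded');
  if (attempts[0] === 'pass') return 'passed';
  return attempts.indexOf('pass') !== -1 ? 'flaky' : 'failed';
}

// Lists the tests whose green result was only reached through a retry
function testsPassedOnRetry(runs: Record<string, readonly Attempt[]>): string[] {
  return Object.keys(runs)
    .filter(testName => classifyAttempts(runs[testName]!) === 'flaky')
    .sort();
}
```

Surfacing the output of `testsPassedOnRetry` on every build is what would have exposed the checkout test from the opening story long before its fourteenth silent retry.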

This requires organizational commitment, not just technical skill. A solo SDET can quarantine and fix individual flaky tests. But preventing flakiness at scale requires infrastructure investment, application testability improvements, and a team culture that treats test reliability as a first-class priority. Without management buy-in, you are playing whack-a-mole.

Your Action Plan: What to Do This Week

If flaky tests are a problem on your team (and statistically, they probably are), here is what to do — in order, starting today.

Day 1: Measure your current flakiness rate. Run your full test suite 10 times against the same commit. Count how many tests produce inconsistent results. Divide by total tests. That is your flakiness rate. Write it down. You cannot fix what you do not measure.

Day 2-3: Quarantine the top offenders. Take the 10 most frequently flaky tests and move them to a quarantine suite. Create a ticket for each with an owner. Set a two-week deadline for investigation. Immediately, your main pipeline becomes more trustworthy.

Day 4-5: Diagnose root causes. For each quarantined test, determine which of the five root causes applies. Run the test in isolation. Run it with verbose logging. Run it 50 times and look for patterns. Is it always the same assertion that fails? Does it fail more often on slower CI runners? Does it fail when another specific test runs first?

Week 2: Fix and validate. Fix each quarantined test according to its root cause. Run the fixed test 50 times. If it passes every time, move it back to the main suite. If it still fails, the diagnosis was wrong — dig deeper.

Week 3: Implement prevention. Add the multi-run PR check for new tests. Set up flakiness tracking in your CI system. Define your flakiness budget. Share the dashboard with the team.

Ongoing: Treat flakiness as a team metric. Review flakiness rate in sprint retrospectives. Celebrate when it drops. Investigate when it rises. Make it as visible as build time or test coverage.

Frequently Asked Questions

How do I convince my manager to invest time in fixing flaky tests?

Frame it in terms of developer time lost. If your team has 20 developers and flaky tests waste 2.5% of productive time, that is half a developer-year spent chasing false failures. Multiply that by your average developer salary. The number is always large enough to justify a two-week investment in systematic remediation.
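The arithmetic behind that pitch fits in a few lines. This is a back-of-the-envelope sketch: the 2.5% figure comes from the case study cited earlier, while the salary you plug in is your own number and `flakyTestCost` is just an illustrative name.

```typescript
type CostEstimate = { developerYearsLost: number; annualCost: number };

// developers:             head count on the team
// timeLostFraction:       share of productive time lost to flaky tests (0.025 = 2.5%)
// annualCostPerDeveloper: fully loaded yearly cost of one developer, in your currency
function flakyTestCost(
  developers: number,
  timeLostFraction: number,
  annualCostPerDeveloper: number,
): CostEstimate {
  const developerYearsLost = developers * timeLostFraction;
  return {
    developerYearsLost,
    annualCost: developerYearsLost * annualCostPerDeveloper,
  };
}

// Example with a placeholder salary of 150,000 (replace with your own figure)
const estimate = flakyTestCost(20, 0.025, 150000);
console.log(
  `${estimate.developerYearsLost} developer-years, about ${Math.round(estimate.annualCost)} per year`,
);
```

With 20 developers the first number is the half developer-year mentioned above; the second scales linearly with whatever salary you supply.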

Should I delete flaky tests instead of fixing them?

Only if the test no longer covers a meaningful scenario. A flaky test that validates a critical payment flow is worth fixing. A flaky test that validates a tooltip animation is worth deleting. The question is always: if this test did not exist, would you write it today?

Is Playwright really better than Selenium for flakiness?

Playwright’s auto-wait mechanism addresses timing-related flakiness at the framework level, which is the single biggest source of flaky tests. Teams migrating from Selenium to Playwright consistently report significant reductions in test flakiness. However, Playwright will not fix data contamination, infrastructure issues, or ordering dependencies. It solves one root cause very well — not all five.

What tools should I use for flaky test detection?

Start with your CI system’s built-in capabilities. GitHub Actions, GitLab CI, and Jenkins all support test result tracking. For dedicated flaky test management, tools like BuildPulse, Currents, and TestDino provide flakiness scoring, quarantine automation, and trend analysis. Playwright’s built-in --repeat-each flag is the simplest starting point for detection.

How long does it take to bring flakiness under control?

Slack brought their flaky test rate from 56.76% down to 3.85% through dedicated remediation. Most teams see significant improvement within 2-4 weeks of focused effort on the quarantine-and-fix cycle. Maintaining low flakiness requires ongoing discipline — the prevention phase — which is why it needs to be a team metric, not a one-time project.

The Bottom Line

Flaky tests are not a minor inconvenience. They are the single biggest threat to trust in your automation suite. When developers stop trusting the pipeline, they stop investigating failures. When they stop investigating failures, real bugs ship to production.

The Detect-Quarantine-Fix-Prevent framework is not revolutionary. It is disciplined. And discipline is exactly what flaky test management requires — not another retry mechanism, not another wait timeout, but a systematic approach that treats test reliability with the same seriousness as application reliability.

Your pipeline should be a source of confidence, not anxiety. Start measuring. Start quarantining. Start fixing. The trust comes back faster than you expect.

References

- BrowserStack — How to Detect and Avoid Playwright Flaky Tests (2026)

- TestDino — Flaky Test Benchmark Report 2026: Rates, Root Causes, and Cost Implications

- TestDino — 9 Best Flaky Test Detection Tools QA Teams Should Use in 2026

- AccelQ — Flaky Tests in 2026: Key Causes, Fixes, and Prevention

- Katalon — The Financial Risk of Flaky Tests in a CI/CD Pipeline

- Ranorex — Flaky Tests in Automation: Strategies for Reliable Automated Testing

- OneUptime — How to Fix Flaky Tests in CI/CD (2026)

- Playwright Official Docs — Test Retries

- TestRail — Flaky Tests in Software Testing: How to Identify, Fix, and Prevent Them

- Edge Delta — Flaky Tests in CI/CD Pipelines Explained

- DEV Community — Reducing Flaky Tests in CI/CD: A Complete Playbook

- Trunk — How to Avoid and Detect Flaky Tests in Playwright