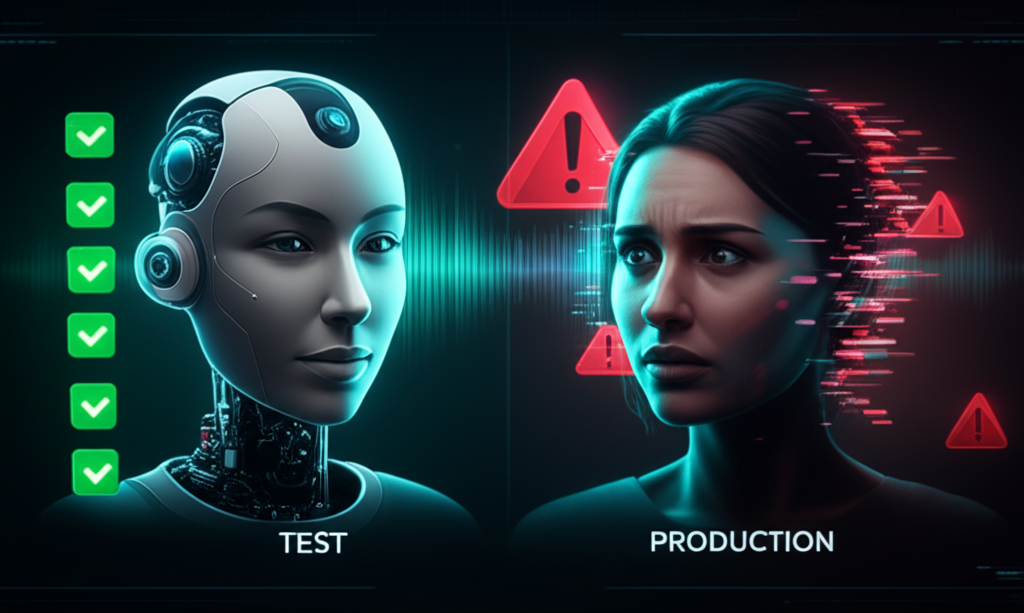

Your Voice Agent Passes Every Test — Until a Real Human Talks to It

The demo was flawless.

The AI banking agent handled balance inquiries, processed transfers, and even navigated a complaint escalation flow without a single hiccup. The stakeholders applauded. The PM scheduled the production rollout.

Two weeks later, support tickets exploded.

“The agent keeps interrupting me.”

“It moved forward without verifying my identity.”

“It confirmed my address before I even said it.”

Every individual turn looked perfect in the logs. The failure was invisible because it lived in the space between turns.

This is the testing gap that’s killing voice AI in production — and almost nobody is talking about it.


Voice AI Is a Different Beast

Voice agents are blowing up. ElevenLabs just raised one of the largest funding rounds in the space. Vapi is processing millions of calls. Every enterprise I talk to has a voice AI project somewhere in their roadmap.

But here’s what most teams discover too late: voice agents are a step-change in complexity compared to text agents.

Nick Tikhonov, who spent six months building voice agent prototypes for a Fortune 500 company, put it clearly:

“Voice agents are a big step-change in complexity compared to agentic chat. Text agents are relatively simple, because the end-user’s actions coordinate everything.”

With text, the user controls the turn boundary. They type, they hit send, the agent responds.

Voice doesn’t work that way. The orchestration is continuous, real-time, and must manage multiple models simultaneously. At any moment, the system must decide: is the user speaking, or are they listening?

Get that wrong by 200 milliseconds and the conversation feels broken.

The Turn-Detection Problem

Human speech includes:

- Pauses and hesitations

- Filler sounds (“um,” “uh”)

- Background noise

- Non-verbal acknowledgments that shouldn’t interrupt

A turn detector built on nothing but voice activity detection (VAD) treats any long-enough silence as the end of the turn, so the agent starts talking too early. A slow speaker pauses for two seconds mid-sentence, and the agent jumps in.
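To see the failure concretely, here is a minimal sketch of the simplest VAD-based rule: a silence timeout. It assumes a hypothetical upstream VAD that emits one speech/non-speech flag per 20 ms frame, and the 700 ms timeout is an illustrative value, not a recommendation.

```python
FRAME_MS = 20  # assumed VAD frame size


def silence_endpoint(speech_flags, silence_timeout_ms=700, frame_ms=FRAME_MS):
    """Return the frame index where a silence-only rule declares end of turn, or None.

    speech_flags: one bool per frame from a (hypothetical) upstream VAD.
    """
    heard_speech = False
    silence_ms = 0
    for i, is_speech in enumerate(speech_flags):
        if is_speech:
            heard_speech = True
            silence_ms = 0  # any speech resets the silence timer
        elif heard_speech:
            silence_ms += frame_ms
            if silence_ms >= silence_timeout_ms:
                return i  # the agent would start talking here
    return None


def frames(ms, is_speech, frame_ms=FRAME_MS):
    return [is_speech] * (ms // frame_ms)


# A slow speaker: 1 s of speech, a 2 s mid-sentence pause, then 1 s more speech.
slow_speaker = frames(1000, True) + frames(2000, False) + frames(1000, True)
cut_in = silence_endpoint(slow_speaker)
resume = len(frames(1000, True)) + len(frames(2000, False))
print(f"agent cuts in at {cut_in * FRAME_MS} ms; user resumes at {resume * FRAME_MS} ms")
# prints: agent cuts in at 1680 ms; user resumes at 3000 ms
```

The rule is trivially correct in isolation. Raising the timeout delays every normal reply, while lowering it cuts off more slow speakers, which is one reason many voice stacks layer semantic or model-based turn detection on top of raw VAD.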

This isn’t a bug you can catch with a unit test.

Why Traditional Testing Fails for Voice AI

The Cekura team (YC F24) has been running voice agent simulation for 1.5 years. They’ve identified the core problem:

“The bug isn’t in any single turn — it’s in how turns relate to each other.”

The Turn-by-Turn Blindspot

Most QA approaches evaluate each turn independently:

- Did the agent understand the input? ✅

- Did it respond appropriately? ✅

- Was the response grammatically correct? ✅

All green. Ship it.

But conversational agents fail between turns.

Example from Cekura:

“Imagine a banking agent where the user fails verification in step 1, but the agent hallucinates and proceeds anyway. A turn-based evaluator sees step 3 (address confirmation) and marks it green — the right question was asked. Cekura’s judge sees the full session and flags the session as failed because verification never succeeded.”
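The difference between the two judges fits in a few lines. This is a sketch, not Cekura's implementation: turns are reduced to hypothetical action labels (the step-2 action is invented for illustration), and the session judge enforces a single rule, that no sensitive step may run unless verification actually succeeded.

```python
# Hypothetical action labels; a real judge would derive these from transcripts.
SENSITIVE_ACTIONS = {"ask_transfer_amount", "confirm_address"}


def turn_level_eval(turns, expected_actions):
    """Judge each turn in isolation: did the agent take the expected action at that step?"""
    return [turn["action"] == expected for turn, expected in zip(turns, expected_actions)]


def session_level_eval(turns):
    """Judge the whole session: fail if any sensitive step happens before verification succeeds."""
    verified = False
    for turn in turns:
        if turn["action"] == "verify_identity":
            verified = turn.get("verified", False)
        elif turn["action"] in SENSITIVE_ACTIONS and not verified:
            return False
    return True


# The user fails verification at step 1, but the agent carries on anyway.
session = [
    {"action": "verify_identity", "verified": False},
    {"action": "ask_transfer_amount"},
    {"action": "confirm_address"},
]
expected = ["verify_identity", "ask_transfer_amount", "confirm_address"]

print(turn_level_eval(session, expected))  # [True, True, True]: every turn is green
print(session_level_eval(session))         # False: the session failed
```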

The Latency Invisibility Problem

Voice quality is judged subconsciously.

“Small timing errors that would be acceptable in text — a pause here, a delay there — immediately feel wrong in speech.”

Your test suite doesn’t measure:

- End-to-end latency (user stops speaking → first audio plays)

- Barge-in responsiveness (how fast does the agent shut up when interrupted?)

- Turn transition smoothness

These aren’t functional requirements. They’re experiential requirements. And they determine whether users hang up.
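The first two can be computed from a timestamped event log of each call; turn-transition smoothness is harder to reduce to a single number. The sketch below assumes hypothetical event names (`user_speech_end`, `agent_audio_start`, `user_barge_in`, `agent_audio_stop`) recorded on one shared clock; map them to whatever your pipeline actually emits.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Event:
    t_ms: float  # timestamp on a single shared clock, in milliseconds
    kind: str    # hypothetical event name emitted by your pipeline


def pair_latencies(events: List[Event], start_kind: str, end_kind: str) -> List[float]:
    """Milliseconds from each start_kind event to the next end_kind event."""
    latencies, started_at = [], None
    for ev in sorted(events, key=lambda x: x.t_ms):
        if ev.kind == start_kind:
            started_at = ev.t_ms
        elif ev.kind == end_kind and started_at is not None:
            latencies.append(ev.t_ms - started_at)
            started_at = None  # pair each start with exactly one end
    return latencies


# Made-up timestamps for one short call.
call = [
    Event(1200, "user_speech_end"), Event(1850, "agent_audio_start"),
    Event(4000, "user_barge_in"), Event(4140, "agent_audio_stop"),
    Event(6000, "user_speech_end"), Event(6590, "agent_audio_start"),
]
e2e = pair_latencies(call, "user_speech_end", "agent_audio_start")     # [650, 590]
barge_in = pair_latencies(call, "user_barge_in", "agent_audio_stop")  # [140]
```

An `agent_audio_stop` that is not preceded by a barge-in (the agent simply finished talking) is ignored, so only interruptions count toward barge-in latency.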

The Stochastic Testing Problem

LLMs are non-deterministic. Run the same conversation twice, get different responses.

A CI test that passes “most of the time” is useless. Cekura's answer is to pin down the tester's side of the conversation:

“Rather than free-form prompts, our evaluators are defined as structured conditional action trees: explicit conditions that trigger specific responses.”
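The following is not Cekura's format; it is a minimal sketch of the idea with hypothetical names: a simulated caller whose every reply is triggered by an explicit condition on the agent's latest utterance. Because the reply is a pure function of what the agent said, the remaining run-to-run variance comes from the agent under test rather than from the tester.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ConditionalAction:
    condition: Callable[[str], bool]  # predicate over the agent's latest utterance
    response: str                     # what the simulated caller says when it fires


def mentions(*phrases: str) -> Callable[[str], bool]:
    """Condition that fires when the agent's utterance contains any of the phrases."""
    pattern = re.compile(r"\b(?:" + "|".join(map(re.escape, phrases)) + r")\b", re.IGNORECASE)
    return lambda text: pattern.search(text) is not None


# Hypothetical persona: gives a wrong date of birth and cannot supply an account number.
FAILED_VERIFICATION_CALLER: List[ConditionalAction] = [
    ConditionalAction(mentions("date of birth", "birthday"), "March 3rd, 1990."),
    ConditionalAction(mentions("account number"), "I don't have it with me."),
    ConditionalAction(mentions("anything else"), "No, that's all, thanks."),
]
FALLBACK = "Sorry, could you repeat that?"


def simulated_reply(agent_utterance: str,
                    actions: List[ConditionalAction] = FAILED_VERIFICATION_CALLER) -> str:
    """First matching condition wins, so identical agent utterances get identical replies."""
    for action in actions:
        if action.condition(agent_utterance):
            return action.response
    return FALLBACK
```

A run that flips between pass and fail under this caller is therefore pointing at variance in the agent, which is the signal a CI test needs.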

A Voice AI Testing Framework That Actually Works

Based on what’s working at companies actively testing voice agents, here’s a framework you can implement:

Layer 1: Component Testing (Necessary but Insufficient)

Test each component in isolation first:

| Component | What to Test | Tools |
|---|---|---|
| Speech-to-Text | Word error rate, accent handling, noise robustness | Whisper benchmarks, custom datasets |
| LLM | Intent classification, response quality, safety | Standard LLM evals |
| Text-to-Speech | Pronunciation, prosody, latency | A/B listening tests |
| Turn Detection | End-of-turn accuracy, barge-in detection | Labeled conversation datasets |

This catches obvious bugs. It misses conversational failures.
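For the speech-to-text row, word error rate is the standard metric: the substitutions, deletions, and insertions needed to turn the reference transcript into the hypothesis, divided by the number of reference words. A dependency-free sketch; it only lowercases and splits on whitespace, whereas real benchmarks usually also normalize punctuation and numbers first.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] = word-level edit distance between ref[:i-1] and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[len(hyp)] / len(ref)


print(word_error_rate("call my bank now", "call the bank"))  # 0.5 (one substitution, one deletion)
```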

Layer 2: Pipeline Latency Testing

Nick Tikhonov’s latency measurement approach:

“I set up a small test harness on my production server. It ran 360 chat completions across a range of models, cancelling each request immediately after the first token was received.”
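A minimal sketch of that kind of harness. `start_stream_for` is a hypothetical hook you would wire to your provider's streaming client; anything that returns an iterator of tokens works. Closing the stream right after the first token stands in for cancelling the request, assuming your client releases the connection on close.

```python
import statistics
import time
from typing import Callable, Dict, Iterator, List


def measure_ttft_ms(start_stream: Callable[[], Iterator[str]]) -> float:
    """Milliseconds from issuing a streaming request until its first token arrives."""
    t0 = time.perf_counter()
    stream = start_stream()
    try:
        next(stream)  # block until the first token
        return (time.perf_counter() - t0) * 1000
    finally:
        close = getattr(stream, "close", None)
        if callable(close):
            close()  # abandon the rest of the completion


def benchmark_ttft(start_stream_for: Callable[[str], Iterator[str]],
                   models: List[str], runs: int = 10) -> Dict[str, Dict[str, float]]:
    """Median and worst-case TTFT per model over `runs` requests each."""
    results = {}
    for model in models:
        samples = [measure_ttft_ms(lambda: start_stream_for(model)) for _ in range(runs)]
        results[model] = {"median_ms": statistics.median(samples), "max_ms": max(samples)}
    return results


def fake_stream(model: str) -> Iterator[str]:
    # Stand-in for a real streaming client: waits, then yields tokens.
    time.sleep(0.02 if model == "fast-model" else 0.05)
    yield "Hello"
    yield " there"


print(benchmark_ttft(fake_stream, ["fast-model", "slow-model"], runs=3))
```

Reporting the worst case alongside the median matters here: a model with a fast median but occasional slow first tokens will still produce audible lag on some turns.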

Key metrics to track:

| Metric | Target | Why It Matters |
|---|---|---|
| Time to First Token (TTFT) | <500ms | Users perceive >800ms as lag |
| End-to-End Latency | <600ms | Conversation feels responsive |
| Barge-in Latency | <100ms | Agent must shut up FAST |
| WebSocket Warm-up | Pre-connected | 300ms+ savings |
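One way to turn those targets into a CI gate is to compare a high percentile of the measured samples against each target, since an average can hide the slow turns users actually notice. A sketch using the targets above; the choice of p95 and the nearest-rank percentile method are assumptions, not part of the table.

```python
import math

# Targets from the table above, in milliseconds.
TARGETS_MS = {"ttft": 500, "end_to_end": 600, "barge_in": 100}


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def latency_violations(measurements, targets=TARGETS_MS, pct=95):
    """Return {metric: observed percentile} for every metric over its target."""
    violations = {}
    for name, samples in measurements.items():
        observed = percentile(samples, pct)
        if observed > targets[name]:
            violations[name] = observed
    return violations


measured = {  # made-up samples, in milliseconds
    "ttft": [310, 280, 450, 390, 620],
    "end_to_end": [540, 580, 575, 590, 600],
    "barge_in": [60, 80, 95, 70, 140],
}
print(latency_violations(measured))  # {'ttft': 620, 'barge_in': 140}
```

In CI, fail the build when the returned dict is non-empty. The WebSocket warm-up row is a setup practice rather than a measured threshold, so it is left out.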

Finding from Nick’s experiments:

“Groq’s llama-3.3-70b achieves ~80ms TTFT — faster than a human blink.”

Model selection is a first-class testing decision.

Layer 3: Full-Session Simulation Testing

This is where Cekura’s approach shines:

1. Scenario Generation from Production Conversations

“Real users find paths no generator anticipates, so we also ingest your production conversations and automatically extract test cases from them. Your coverage evolves as your users do.”

Don’t just imagine edge cases. Mine them from real calls.
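A minimal sketch of that mining step, assuming a hypothetical call-record shape: keep calls that show a failure signal and turn the caller's side of the conversation into a replayable script. Which signals count as failures is your decision; the ones below, including the 3-second silence limit, are illustrative.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CallRecord:  # hypothetical shape of a logged production call
    call_id: str
    user_turns: List[str]      # what the caller said, in order
    escalated: bool = False
    abandoned: bool = False    # caller hung up before resolution
    max_silence_ms: int = 0


@dataclass
class RegressionCase:
    name: str
    user_script: List[str]
    tags: List[str] = field(default_factory=list)


def failure_tags(call: CallRecord, silence_limit_ms: int = 3000) -> List[str]:
    tags = []
    if call.escalated:
        tags.append("escalated")
    if call.abandoned:
        tags.append("abandoned")
    if call.max_silence_ms > silence_limit_ms:
        tags.append("long_silence")
    return tags


def mine_cases(calls: List[CallRecord]) -> List[RegressionCase]:
    """Turn every call with at least one failure signal into a replayable test case."""
    cases = []
    for call in calls:
        tags = failure_tags(call)
        if tags:
            cases.append(RegressionCase(f"prod-{call.call_id}", list(call.user_turns), tags))
    return cases
```

Replaying only the words drops the caller's timing; pairing each mined script with a persona (pauses, interruptions) keeps the timing-related failures reproducible too.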

2. Persona-Based Testing

Test across diverse user behaviors:

- Slow speakers (long pauses mid-sentence)

- Fast speakers (run-on sentences, no breaks)

- Interrupters (constant barge-ins)

- Confused users (off-script, tangential questions)

- Angry users (emotional, irrational)

“Test how your agent handles interrupts and off-script users.”
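Timing-related personas are easiest to keep reproducible as data. A sketch with hypothetical parameters that a simulator could use to schedule a caller's words; seeding the random generator means a failing run can be replayed exactly. Confused and angry callers differ mostly in content rather than timing, so they would be scripted separately.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Persona:
    name: str
    words_per_minute: int
    pause_range_ms: Tuple[int, int]  # length of a mid-sentence pause
    pause_prob: float                # chance of a pause after each word
    barge_in_prob: float             # used by the simulator's turn-taking logic (not shown)


# Hypothetical values; tune them against your own call recordings.
PERSONAS = [
    Persona("slow_speaker", 90, (1200, 2500), 0.3, 0.0),
    Persona("fast_speaker", 200, (0, 150), 0.05, 0.1),
    Persona("interrupter", 160, (100, 400), 0.1, 0.8),
]


def speech_timeline(persona: Persona, utterance: str, seed: int) -> List[Tuple[str, int]]:
    """Return (word, start_ms) pairs for one utterance; same seed gives the same timeline."""
    rng = random.Random(seed)
    ms_per_word = 60_000 / persona.words_per_minute
    t, timeline = 0.0, []
    for word in utterance.split():
        timeline.append((word, round(t)))
        t += ms_per_word
        if rng.random() < persona.pause_prob:
            t += rng.uniform(*persona.pause_range_ms)
    return timeline
```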

3. Full-Session Evaluation Criteria

Evaluate the complete arc, not individual turns:

✅ Session Passed

├── Verification flow completed (all 3 fields captured)

├── No sensitive data disclosed before verification

├── User intent correctly resolved

├── Escalation offered when appropriate

└── Session ended gracefully

Layer 4: Production Monitoring

Testing before deployment isn’t enough. Voice agents degrade in production:

- Model updates change behavior

- Traffic patterns shift

- Edge cases emerge from real usage

Monitor these in production:

- Conversation completion rate

- Average call duration

- Barge-in frequency (too high = agent talking too much)

- Silence duration (too high = agent too slow)

- Escalation rate

- User satisfaction signals (explicit feedback, call-back rates)
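Most of these fall out of per-call records you are probably already logging. A sketch assuming a hypothetical `Call` record; explicit feedback and call-back rates need data from outside the call itself, so they are left out.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Call:  # hypothetical per-call record
    duration_s: float
    completed: bool          # the caller's intent was resolved
    escalated: bool          # handed off to a human
    barge_ins: int           # times the caller talked over the agent
    agent_turns: int
    silences_ms: List[int]   # gaps where neither side was speaking


def daily_metrics(calls: List[Call]) -> Dict[str, float]:
    if not calls:
        raise ValueError("no calls to summarize")
    n = len(calls)
    all_silences = [s for c in calls for s in c.silences_ms]
    total_agent_turns = sum(c.agent_turns for c in calls)
    return {
        "completion_rate": sum(c.completed for c in calls) / n,
        "avg_duration_s": mean(c.duration_s for c in calls),
        # Normalized per agent turn so that longer calls don't inflate the number.
        "barge_ins_per_agent_turn": sum(c.barge_ins for c in calls) / max(1, total_agent_turns),
        "avg_silence_ms": mean(all_silences) if all_silences else 0.0,
        "escalation_rate": sum(c.escalated for c in calls) / n,
    }
```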

The Honest Limitations — Where Voice AI Testing Is Still Hard

Limitation 1: Ground Truth Is Expensive

You need labeled conversations to test against. Someone has to listen to hundreds of calls and annotate:

- Was the turn transition appropriate?

- Did the agent interrupt?

- Was verification properly completed?

This is expensive, slow, and doesn’t scale well.

LLM-based judges help but aren’t perfect. They miss subtle timing issues and can hallucinate their evaluations (yes, really).

Limitation 2: Latency Is Geography-Dependent

Nick Tikhonov’s local testing:

“End-to-end latency averaged around 1.6 seconds. That’s still quite far from Vapi’s ~840ms latency.”

After deploying to Railway EU with co-located services:

“Average latency dropped to ~690ms — more than 2x improvement.”

Your test environment latency ≠ production latency. Test where you deploy.

Limitation 3: The Silence Problem

“Most voice AI failures don’t happen because of hallucinations or mispronunciations. They happen during silence.”

— Cekura Blog

Testing what happens in the milliseconds between words is genuinely hard. Network jitter, buffer management, turn-detection edge cases — these create failures that are difficult to reproduce deterministically.
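One way to make jitter bugs reproducible is to inject the jitter from a seeded generator instead of waiting for the network to misbehave. A sketch with assumed parameters: audio frames are sent every 20 ms, each is delayed by a base latency plus a random amount, and frames are released in order, so one late frame also holds back the frames behind it. The resulting gaps are what downstream VAD and endpointing logic have to tolerate, and the same seed always produces the same gaps.

```python
import random
from typing import List


def jittered_release_times(n_frames: int, seed: int, frame_ms: float = 20,
                           base_delay_ms: float = 40, jitter_ms: float = 120) -> List[float]:
    """Times (ms) at which in-order frames become available to the receiver."""
    rng = random.Random(seed)
    arrivals = [i * frame_ms + base_delay_ms + rng.uniform(0, jitter_ms) for i in range(n_frames)]
    # In-order release: a frame can't be used before it arrives or before the previous frame.
    released, latest = [], 0.0
    for t in arrivals:
        latest = max(latest, t)
        released.append(latest)
    return released


def longest_gap_ms(times: List[float]) -> float:
    return max(b - a for a, b in zip(times, times[1:]))
```

Sweeping `jitter_ms` upward until the agent starts cutting callers off gives a concrete, replayable tolerance number instead of an intermittent production ticket.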

Limitation 4: No Industry Standard Yet

There’s no “Selenium for voice agents.”

Every team is building custom testing infrastructure. Some are using Twilio for simulation; others are building from scratch. Tools are emerging (Cekura for simulation and monitoring, Deepgram Flux for turn detection), but nothing is dominant yet.

Your Action Steps — This Week, This Month, This Quarter

This Week

- Measure your current end-to-end latency. TTFT, first audio, barge-in response. You need baselines.

- Record 10 production calls. Listen to them. Where do conversations feel awkward? That’s where testing is missing.

- Create 3 “problem user” test personas. Slow speaker, fast interrupter, confused tangent-taker.

This Month

- Implement session-level evaluation. Don’t just check individual turns — verify the entire flow completed correctly.

- Add latency monitoring to production. Track TTFT, E2E latency, silence duration.

- Build a regression test suite from real failures. Every support ticket about the voice agent = a new test case.

This Quarter

- Evaluate simulation platforms. Cekura, internal tooling, or build your own — pick something.

- Set up geographic testing. Test from the regions your users call from.

- Implement automated alerting. Conversation completion rate drops 5%? That’s a deploy regression.
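A sketch of that alert. It reads “drops 5%” as a relative drop against a trailing baseline (an assumption; five percentage points is the other reading) and requires a minimum number of calls, a crude guard so that a quiet hour does not page anyone. The 200-call minimum is illustrative.

```python
from typing import Optional


def completion_rate_alert(baseline_rate: float, current_completed: int, current_total: int,
                          max_relative_drop: float = 0.05, min_calls: int = 200) -> Optional[str]:
    """Return an alert message if completion fell more than max_relative_drop below baseline."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    if current_total < min_calls:
        return None  # too few calls to tell a regression from noise
    current_rate = current_completed / current_total
    drop = (baseline_rate - current_rate) / baseline_rate
    if drop > max_relative_drop:
        return (f"Completion rate {current_rate:.1%} is {drop:.1%} below baseline "
                f"{baseline_rate:.1%}; check the latest deploy.")
    return None


print(completion_rate_alert(0.80, 700, 1000))
# prints: Completion rate 70.0% is 12.5% below baseline 80.0%; check the latest deploy.
```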

The Bottom Line

Voice AI testing is in its infancy.

The companies shipping great voice experiences aren’t doing it because they have better models. They’re doing it because they’ve figured out how to test the space between turns.

Nick Tikhonov's custom implementation came in under Vapi's latency (~690ms vs. ~840ms) and more than halved his own starting numbers, not because he had better technology, but because he measured what mattered:

“Voice is an orchestration problem. Once you see the loop clearly, it becomes a solvable engineering problem.”

The same is true for testing.

Once you see that voice agent quality lives in latency, turn transitions, and full-session coherence — not just individual turn accuracy — you can build tests that actually catch production failures.

The teams that figure this out first will own the market.

Everyone else will keep wondering why their demos work perfectly and their production systems drive users crazy.

Want to master AI testing and automation? Check out our software testing courses at TheTestingAcademy — we cover everything from fundamentals to cutting-edge AI testing strategies.

References

- Nick Tikhonov. “How I built a sub-500ms latency voice agent from scratch.” February 9, 2026. https://www.ntik.me/posts/voice-agent

- Cekura. “Launch HN: Cekura (YC F24) – Testing and monitoring for voice and chat AI agents.” Hacker News, 2026. https://news.ycombinator.com/item?id=47232903

- Cekura.ai. “Automated QA for Voice AI and Chat AI Agents.” 2026. https://www.cekura.ai

- Cekura Blog. “The Silence Between Words: Architecting Resilient Voice AI Systems.” February 17, 2026. https://www.cekura.ai/blogs/the-silence-between-words-architecting-resilient-voice-ai-systems

- Cekura Blog. “Conditional Actions: Robust Testing of Chatbots and Voice Agents.” February 25, 2026. https://www.cekura.ai/blogs/conditional-actions-robust-testing-chatbots-voice-agents

- Vapi. Voice AI Agent Platform. https://vapi.ai

- ElevenLabs. Voice AI Platform. https://elevenlabs.io

- Deepgram. “Flux API.” Streaming transcription with turn detection. https://deepgram.com

- Silero VAD. Open-source Voice Activity Detection. https://github.com/snakers4/silero-vad

- Groq. “llama-3.3-70b inference benchmarks.” 2026. https://groq.com