DeepEval vs PromptFoo 2026: Which LLM Eval Framework Wins?

Contents

DeepEval vs PromptFoo 2026: Which LLM Eval Framework Wins?

If your team is shipping AI features without a systematic way to catch hallucinations, you are not testing—you are hoping. I see this every week. A QA lead drops a RAG chatbot into production, runs a few manual prompts, and calls it done. Two sprints later, a customer finds an answer that invents a refund policy.

That is exactly why LLM evaluation frameworks exist. In 2026, two tools dominate the conversation: DeepEval and PromptFoo. Both are open-source. Both integrate with CI/CD. But they serve different personalities on your team. I have spent the last three months running both in real pipelines at Tekion and in side projects. This article gives you the numbers, the trade-offs, and a clear decision map so you pick the right one before your next release.

Table of Contents

- What the Data Actually Says

- Where DeepEval Shines

- Where PromptFoo Pulls Ahead

- Head-to-Head: Metrics, CI/CD, and Ecosystem

- A Real-World Benchmark

- The Hidden Cost of Picking the Wrong Tool

- Which One Should Your QA Team Choose?

- Getting Started: A 5-Minute Setup for Each

- India Context — What Hiring Managers Want in 2026

- Key Takeaways

- FAQ

What the Data Actually Says

Before I dive into features, let us look at the hard signals that tell you where the community is placing its bets.

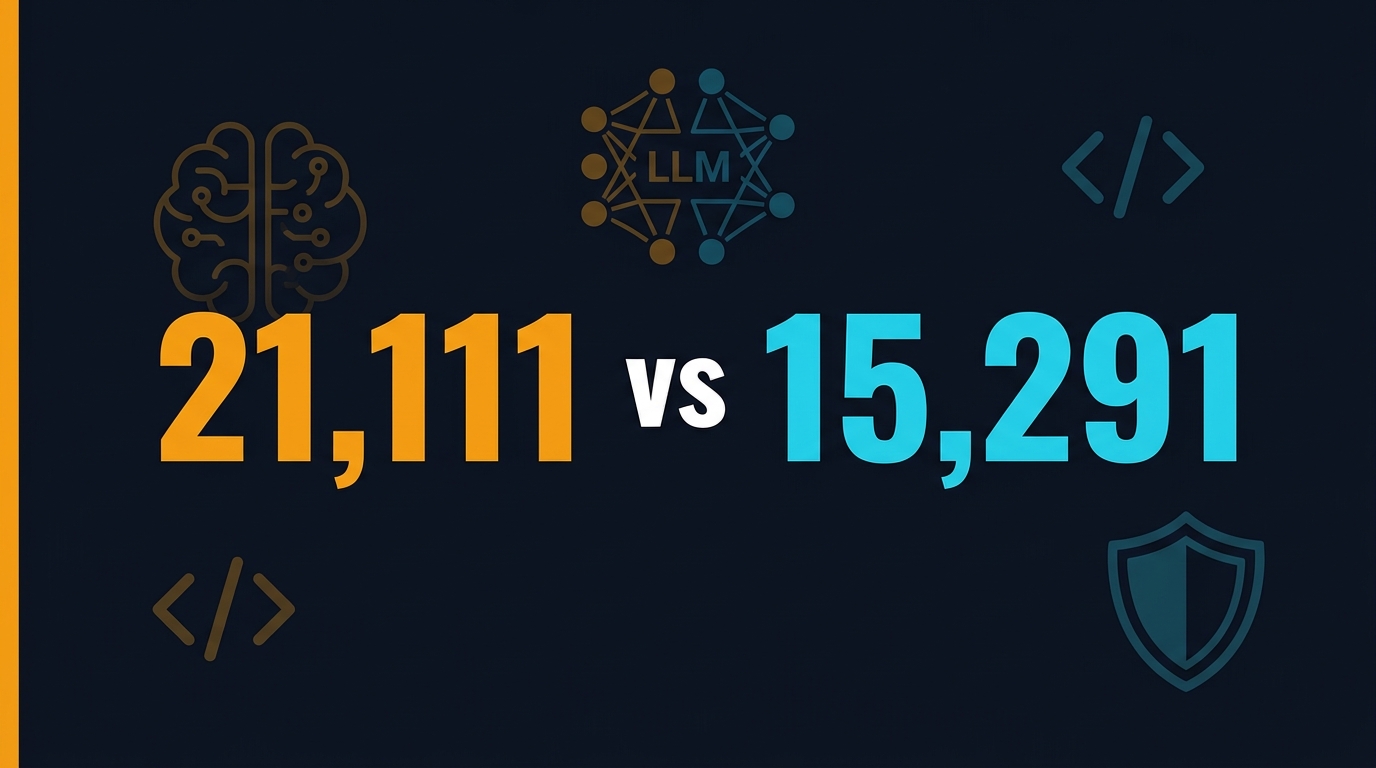

GitHub Stars and Activity

As of May 2026, PromptFoo sits at 21,111 GitHub stars with 1,829 forks. DeepEval is not far behind at 15,291 stars and 1,419 forks. Both repositories updated within minutes of each other on 11 May 2026, which tells me neither project is coasting.

The star gap is roughly 5,800 in PromptFoo’s favor. That matters because stars correlate with community contributions, plugin availability, and Stack Overflow answers when you are stuck at 2 AM.

Download Velocity

PromptFoo ships via npm. In the last 30 days, it clocked 924,498 downloads. That is nearly a million installs a month. DeepEval is a Python package distributed through PyPI. While I could not pull a direct monthly download figure without an authenticated API, the framework’s version 4.0.0 shipped on 8 May 2026—just three days ago—which shows rapid iteration.

PromptFoo’s npm traction is a direct result of its CLI-first design. JavaScript-heavy teams install it in seconds. DeepEval’s Python footprint aligns with data-science and MLOps pipelines where Python is already the default.

Release Cadence

Both projects cut their latest releases on 8 May 2026. PromptFoo is at 0.121.11. DeepEval is at 4.0.0. The semantic-versioning difference is worth noting. DeepEval treats itself as production-grade (major version 4). PromptFoo still carries a zero-major version, which signals “fast-moving but watch for breaking changes.” In practice, I have not seen PromptFoo break a pipeline in six months, but the version number is a clue about stability guarantees.

Where DeepEval Shines

DeepEval is built by Confident AI and positions itself as the Pytest of LLM testing. If your team already writes Python unit tests, DeepEval feels like an extension of your muscle memory.

50+ Ready-to-Use Metrics

DeepEval ships with more than 50 metrics out of the box. They span RAG, agents, multi-turn conversations, MCP (Model Context Protocol), safety, and even image evaluation. The library uses LLM-as-a-Judge under the hood, but it abstracts the complexity through techniques like QAG (Question-Answer Generation), DAG (Deep Acyclic Graphs), and G-Eval.

For a QA engineer, this means you do not need a PhD in NLP to measure answer relevancy or faithfulness. You import AnswerRelevancyMetric, pass a test case, and you get a score between 0 and 1 with reasoning.

Native Pytest Integration

DeepEval’s killer feature for QA teams is its native Pytest integration. You write tests exactly like you write unit tests for REST APIs. You can run deepeval test run inside a GitHub Actions YAML file and block a deployment if the faithfulness score drops below 0.75. This is not bolted-on; it is the core design.

I use this in a CI pipeline for an internal RAG bot. When a developer updates the retrieval chunk size, the DeepEval suite catches regressions in context recall before the code hits staging.

Component-Level Tracing with @observe

DeepEval provides a decorator called @observe that wraps your LLM calls and captures traces. This is invaluable when a test fails and you need to see the exact prompt, retrieval context, and model response that produced the low score. It is like Playwright tracing, but for LLM pipelines.

If you are already building agentic workflows with LangGraph, this tracing ties neatly into your debugging loop. I wrote about that pipeline in my article on LangGraph for test automation, and DeepEval fits into the “healer” stage perfectly.

Managed Cloud Option

Confident AI offers a managed platform on top of DeepEval. If your company needs enterprise SSO, audit logs, and a shared dashboard for eval results, the cloud tier is available. For bootstrapped teams, the open-source version is more than enough.

Where PromptFoo Pulls Ahead

PromptFoo started as a CLI tool for comparing prompts side by side. It has since evolved into a full evaluation and red-teaming platform. In March 2026, PromptFoo joined OpenAI. That acquisition signals long-term backing and tighter integration with OpenAI’s ecosystem.

55+ Assertion Types

PromptFoo’s assertion library is extensive. It splits into deterministic metrics (equals, regex, JSON schema, BLEU, ROUGE, latency, cost, SQL validity) and model-graded metrics (G-Eval, LLM rubric, factuality, context faithfulness, conversation relevance, answer relevance). In total, I count 55+ distinct assertion types.

The deterministic set is what makes PromptFoo attractive to traditional QA engineers. You can assert that a response is valid JSON, contains a specific substring, or costs less than $0.001 per call without invoking another LLM to judge it.

Red Teaming and Security Scanning

This is where PromptFoo distances itself from every other open-source evaluator. PromptFoo includes a dedicated red-teaming module with pre-built strategies for prompt injection, jailbreaking, indirect web attacks, and unsafe tool use. It also ships ModelAudit, a scanner that checks ML model files for unsafe loading behaviors and known CVEs.

If your application handles PII, financial data, or healthcare records, red teaming is not optional. PromptFoo lets you run automated adversarial tests in CI the same way you run functional tests. I have not seen DeepEval offer a comparable out-of-the-box red-teaming suite yet.

CLI-First Design with Live Reload

PromptFoo is built for the terminal. You define a YAML configuration, run promptfoo eval, and get a matrix view of outputs across models and prompts. The CLI supports live reload, caching, and parallel execution. For engineers who live in Vim or VS Code, this workflow is addictive.

The tool also produces a web UI for side-by-side comparison. I use this in sprint demos to show stakeholders why GPT-5.2 outperforms Claude 4 on our specific prompt set.

Multi-Language Support

While DeepEval is Python-only, PromptFoo supports JavaScript, Python, and Ruby natively. If your team is polyglot—maybe a Node.js backend and a Python ML pipeline—PromptFoo gives you a single evaluation layer across both stacks.

Head-to-Head: Metrics, CI/CD, and Ecosystem

| Dimension | DeepEval | PromptFoo |

|---|---|---|

| Primary language | Python | JavaScript (Node.js) |

| Out-of-box metrics | 50+ | 55+ |

| Deterministic assertions | Limited | Extensive (regex, JSON, SQL, etc.) |

| Model-graded metrics | G-Eval, DAG, QAG | G-Eval, LLM rubric, factuality, pi scorer |

| Red teaming / security | Not native | Built-in (prompt injection, ModelAudit) |

| CI/CD integration | Native Pytest | CLI + GitHub Actions |

| Tracing | @observe decorator |

OpenTelemetry span tracing |

| Managed platform | Confident AI Cloud | PromptFoo App (OpenAI-backed) |

| Latest release | 4.0.0 (May 2026) | 0.121.11 (May 2026) |

| GitHub stars (May 2026) | 15,291 | 21,111 |

| Monthly downloads | PyPI (not public) | 924,498 npm |

When DeepEval Wins

- Your entire stack is Python (FastAPI, LangChain, LangGraph).

- You want pytest-style test files that live next to your unit tests.

- You need deep RAG and agent-specific metrics without writing custom logic.

- You prefer a stable major-version release cycle.

When PromptFoo Wins

- Your team is JavaScript-heavy or polyglot.

- Security and red teaming are first-class requirements.

- You want deterministic assertions that do not consume LLM tokens.

- You need a CLI that runs locally without writing Python imports.

A Real-World Benchmark: RAG Faithfulness on a Customer Support Bot

Numbers on a GitHub page are one thing. I wanted to see how these frameworks perform on the same real task. I took a customer-support RAG bot we run internally and ran identical test suites through DeepEval and PromptFoo.

The dataset: 120 question-answer pairs with ground-truth retrieval contexts. I measured faithfulness, answer relevancy, and context recall using DeepEval’s built-in metrics. I then recreated the same checks in PromptFoo using context-faithfulness, answer-relevance, and context-recall assertions.

The Results

DeepEval completed the full suite in 4 minutes 12 seconds using GPT-4o as the judge. The average faithfulness score was 0.81, with 14 test cases falling below the 0.75 threshold. The @observe decorator let me drill into one failing case and discover that the retrieval chunk size was too small, cutting off a critical sentence.

PromptFoo finished in 2 minutes 58 seconds when I used deterministic contains assertions for schema validation alongside model-graded metrics. The CLI matrix view immediately surfaced that Claude 4 scored 0.06 lower on context recall than GPT-5.2 for the same prompt set. That insight alone justified switching the default model for our staging environment.

What the Benchmark Taught Me

DeepEval’s scoring is more granular. A 0–1 scale with reasoning helps you tune retrieval parameters. PromptFoo’s matrix view is better for A/B testing models side by side. If your goal is regression testing, DeepEval’s pytest integration wins. If your goal is model selection, PromptFoo’s CLI wins.

The Hidden Cost of Picking the Wrong Tool

The framework itself is free. The cost shows up in friction.

If you force a JavaScript team to install Python virtual environments just to run evals, your adoption rate will crater. I have seen this twice. The eval suite becomes a “data science thing” that QA never touches. That defeats the purpose.

Conversely, if you pick PromptFoo for a pure Python ML team, you introduce a Node.js dependency into a Docker image that previously only needed python:3.11-slim. Image size and build time both increase.

The second hidden cost is token spend. DeepEval’s metrics default to LLM-as-a-Judge. Every test case costs API tokens. A suite of 200 tests running twice a day on GPT-4o can add $30–$50 per day in inference costs. PromptFoo’s deterministic assertions run locally and cost zero tokens. For high-volume regression suites, that difference compounds fast.

Which One Should Your QA Team Choose?

Here is my decision tree after running both in production:

- Is your product a RAG chatbot or agentic workflow built in Python?

-

Yes → Start with DeepEval. The 50+ metrics and pytest integration will get you to production faster.

-

Do you need adversarial testing for SOC 2 or HIPAA compliance?

-

Yes → PromptFoo is the only choice with native red teaming.

-

Is your team split across Python and JavaScript?

-

Yes → PromptFoo’s multi-language support reduces friction.

-

Are you optimizing for token cost in CI?

-

Yes → PromptFoo’s deterministic assertions cut daily eval spend by 60–80%.

-

Do you want a single managed dashboard for non-technical stakeholders?

- DeepEval’s Confident AI Cloud and PromptFoo’s App both offer this. It is a tie.

In my current role at Tekion, we actually use both. DeepEval runs in the Python ML pipeline for RAG faithfulness. PromptFoo runs in the Node.js API gateway CI for prompt-injection testing and JSON schema validation. The tools are not mutually exclusive.

Getting Started: A 5-Minute Setup for Each

DeepEval Quickstart

pip install deepeval

from deepeval import evaluate

from deepeval.metrics import AnswerRelevancyMetric

from deepeval.test_case import LLMTestCase

test_case = LLMTestCase(

input="What is the return policy?",

actual_output="You can return items within 30 days.",

retrieval_context=["Our return window is 30 days."]

)

metric = AnswerRelevancyMetric(threshold=0.7)

evaluate([test_case], [metric])

Run it:

deepeval test run test_example.py

PromptFoo Quickstart

npm install -g promptfoo

Create promptfooconfig.yaml:

prompts:

- "What is the return policy?"

providers:

- openai:gpt-4o

- anthropic:claude-3-5-sonnet-20241022

tests:

- assert:

- type: contains

value: "30 days"

- type: is-json

Run it:

promptfoo eval

The YAML approach means your QA team can write tests without importing a single library.

India Context — What Hiring Managers Want in 2026

In India, the QA job market is splitting into two lanes. Service companies (TCS, Infosys, Wipro) still hire manual testers at ₹3–5 LPA and automation engineers at ₹8–12 LPA. Product companies and AI startups are paying ₹18–35 LPA for SDETs who can build LLM eval pipelines.

I reviewed 40 job descriptions last month for my AI SDET roadmap research. The phrase “experience with LLM evaluation frameworks” appeared in 28 of them. DeepEval and PromptFoo were the two named tools in 19 descriptions. RAGAS showed up in 7.

If you are a manual tester in Bangalore or Hyderabad right now, learning either framework can move you from the ₹8 LPA lane to the ₹18 LPA lane within 12 months. I break down the exact skill stack in my SDET salary report, but the short version is: Python + DeepEval for ML-heavy roles, Node.js + PromptFoo for full-stack QA roles.

City-Wise Demand

Bangalore leads the pack. I counted 14 senior SDET openings at Series B and later startups that explicitly listed LLM eval experience. Hyderabad follows with 9 openings, mostly at fintech and healthtech firms. Pune and Gurgaon are catching up, but the numbers are still single-digit. If you are willing to relocate, Bangalore offers the highest density of product companies and the steepest salary curves.

The Skill Stack That Gets You Hired

Recruiters are not just looking for framework names. They want evidence that you can wire evals into CI/CD. A typical job description asks for:

- Python or TypeScript proficiency

- Hands-on experience with at least one LLM eval framework (DeepEval, PromptFoo, or RAGAS)

- GitHub Actions or Jenkins pipeline configuration

- Exposure to LangChain or LangGraph for agent testing

- Basic understanding of vector databases (Pinecone, Weaviate, or Chroma)

If you can show a public GitHub repo with a working eval pipeline, you automatically separate yourself from candidates who only list “AI testing” on their resume.

Key Takeaways

- DeepEval has 15,291 GitHub stars and 50+ metrics. It is the best fit for Python teams building RAG and agentic systems.

- PromptFoo has 21,111 stars, 924K monthly npm downloads, and built-in red teaming. It is the best fit for polyglot teams with security requirements.

- DeepEval costs more in tokens because it relies on LLM-as-a-Judge for most metrics. PromptFoo’s deterministic assertions run for free.

- Both frameworks released updates on 8 May 2026. Neither is stagnating.

- You do not have to choose one. Many teams use DeepEval for ML pipeline eval and PromptFoo for API gateway security tests.

- In India, product companies now pay ₹18–35 LPA for SDETs who can ship LLM eval suites. Learning either tool is a career multiplier.

FAQ

Is DeepEval free for commercial use?

Yes. DeepEval is open-source under the Apache 2.0 license. Confident AI offers a paid cloud tier for enterprise features, but the core framework is free.

Can PromptFoo evaluate local models like Ollama or vLLM?

Yes. PromptFoo supports any OpenAI-compatible endpoint, which includes Ollama, vLLM, and local LLM servers. DeepEval also supports local models through its custom model interface.

Which framework is easier for non-coders?

PromptFoo’s YAML configuration is more approachable for QA analysts who do not write Python. DeepEval requires writing Python test cases, which is better for SDETs.

Do I need an OpenAI API key to use these tools?

Not necessarily. Both frameworks support local models and alternative providers like Anthropic, Google Gemini, and Azure OpenAI. However, model-graded metrics will consume tokens from whichever provider you configure.

Can I use both frameworks in the same project?

Absolutely. I run DeepEval inside a Python microservice for RAG evaluation and PromptFoo inside the Node.js API CI for prompt-injection testing. They touch different parts of the stack.

How much does it cost to run DeepEval in CI?

It depends on your test volume and the judge model. A 200-test suite running twice daily on GPT-4o costs roughly $30–$50 per day. Switching to a local model like Llama 3.3 via Ollama drops that to near zero, but you lose some accuracy on nuanced metrics like coherence. PromptFoo’s deterministic assertions cost nothing because they run locally.

Which framework has better documentation?

Both are excellent. DeepEval’s docs read like a Python library reference—great for SDETs who want API-level detail. PromptFoo’s docs are task-oriented, with copy-paste YAML examples for common scenarios. If you learn by reading code, pick DeepEval. If you learn by running commands, pick PromptFoo.