Beyond Bug Counting: Redefining What Quality Means in the Age of AI and Modern Engineering

Amazon, Microsoft, and Apple, companies with multibillion-dollar R&D budgets and some of the best engineering talent on the planet, ship bugs to production regularly. If they cannot achieve bug-free software, your team cannot either. And no, AI agents will not change this fundamental reality. What matters is not eliminating all bugs but building an intelligent quality strategy that manages risk, measures what matters, and communicates value in terms leadership understands.

I started thinking about this article after seeing a post from a business systems architect that resonated across the QA community: “You cannot release bug-free software. And no, your AI agents cannot either.” The post had modest engagement numbers but sparked an outsized conversation because it touched a nerve. Most QA teams are evaluated on metrics that implicitly assume zero bugs is the goal — and that assumption is doing real damage to how organizations invest in quality.

The Zero-Bug Myth and Its Consequences

The pursuit of zero bugs leads to three destructive patterns. First, it creates an adversarial relationship between development and QA. If the unstated expectation is that no bugs should reach production, then every production bug is a QA failure. This blame dynamic makes QA teams defensive, developers resentful, and cross-functional collaboration nearly impossible.

Second, it misallocates resources. Chasing the last few percent of bugs often costs more than finding the first 80%. The effort to find and fix edge-case defects that affect one in ten thousand users diverts resources from improving the experience of the other 9,999. Quality is not about perfection; it is about fitness for purpose, which includes prioritizing where you invest your testing effort.

Third, it produces meaningless metrics. “Number of bugs found” tells you almost nothing about quality. A high bug count might mean the application is poorly built. It might also mean the QA team is thorough. A low bug count might mean the application is excellent. It might also mean the QA team is not looking hard enough. Without context, the number is noise.

The Five Metrics That Actually Matter

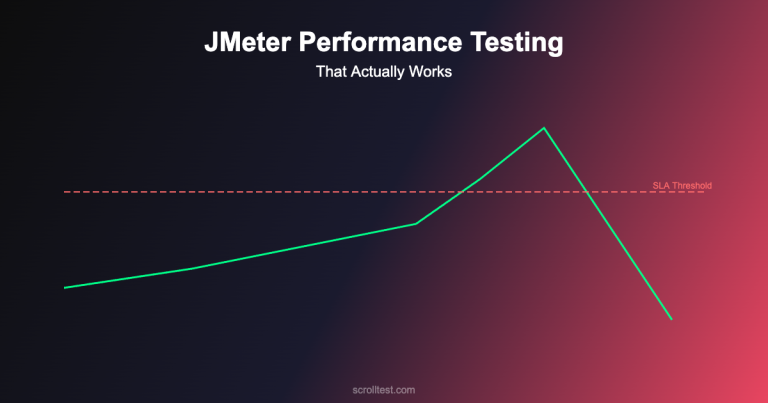

Defect escape rate — the percentage of bugs found in production versus total bugs found — is the single most meaningful quality metric. A decreasing escape rate demonstrates that your testing is catching more issues before they reach users, which is the actual goal of QA. Track it monthly and present the trend.
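As a rough illustration, the calculation can be sketched in a few lines of Python. The `Defect` record and its `found_in_production` flag are hypothetical stand-ins for whatever your bug tracker exports; the only real requirement is that each defect is tagged as found before or after release.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Defect:
    # Hypothetical record shape; adapt to your tracker's export.
    id: str
    found_in_production: bool


def defect_escape_rate(defects):
    """Return production-found defects as a percentage of all defects found."""
    if not defects:
        return 0.0  # Assumption: no defects recorded means nothing escaped.
    escaped = sum(1 for d in defects if d.found_in_production)
    return 100.0 * escaped / len(defects)


# One month of made-up data: three defects caught before release, one escaped.
month = [
    Defect("D-1", False),
    Defect("D-2", False),
    Defect("D-3", True),
    Defect("D-4", False),
]
print(f"{defect_escape_rate(month):.1f}%")  # 25.0%
```

Running this once per month and plotting the results gives the trend line described above.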

Mean time to detect (MTTD) measures the average time between when a defect is introduced and when it is discovered. A shorter MTTD means your quality feedback loops are tight — bugs are found close to when they are created, which makes them cheaper to fix and less likely to compound into larger problems.
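A minimal sketch of the MTTD calculation, assuming you can already pair each defect with the timestamp of the commit that introduced it and the timestamp of its discovery. The pairing itself is the hard part and is not shown; the sample timestamps are invented.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(records):
    """Average the gap between introduction and detection.

    `records` is an iterable of (introduced_at, detected_at) datetime pairs.
    Returns a timedelta, or None when there is no data.
    """
    gaps = [detected - introduced for introduced, detected in records]
    if not gaps:
        return None
    return sum(gaps, timedelta()) / len(gaps)


# Made-up sample: one defect found after 2 days, another after 4 days.
records = [
    (datetime(2026, 1, 1), datetime(2026, 1, 3)),
    (datetime(2026, 1, 10), datetime(2026, 1, 14)),
]
print(mean_time_to_detect(records))  # 3 days, 0:00:00
```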

Customer impact score combines defect severity with the number of users affected. A critical bug that blocks 10% of users is categorically different from a cosmetic issue that 0.1% of users might notice. Prioritizing quality work by customer impact ensures your team focuses on what matters most to the business, not what is easiest to find or fix.
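There is no standard formula for this score, so the following is only one possible sketch: a severity weight multiplied by the fraction of users affected. The weights in `SEVERITY_WEIGHTS` and the backlog entries are illustrative assumptions, not recommended values.

```python
# Hypothetical weights; tune these to your own severity scale.
SEVERITY_WEIGHTS = {"critical": 10, "major": 5, "minor": 2, "cosmetic": 1}


def customer_impact_score(severity, users_affected, total_users):
    """Severity weight scaled by the fraction of users who hit the defect."""
    if total_users <= 0:
        raise ValueError("total_users must be positive")
    return SEVERITY_WEIGHTS[severity] * (users_affected / total_users)


# Made-up backlog: (title, severity, users affected, total users).
backlog = [
    ("typo on settings page", "cosmetic", 100, 100_000),   # 0.1% of users
    ("checkout blocked", "critical", 10_000, 100_000),     # 10% of users
]

# Sort so the highest-impact defect comes first.
ranked = sorted(
    backlog,
    key=lambda d: customer_impact_score(d[1], d[2], d[3]),
    reverse=True,
)
for title, severity, affected, total in ranked:
    print(title, customer_impact_score(severity, affected, total))
```

With these weights the blocked checkout scores about a thousand times higher than the typo, which matches the intuition in the paragraph above.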

Test coverage effectiveness measures not just what percentage of code is covered by tests, but how effective those tests are at catching real bugs. Code coverage alone is misleading: you can have 90% coverage with tests that validate nothing meaningful. Mutation testing tools like Pitest (Java) and Stryker (JavaScript) inject artificial bugs into your code and check whether your tests catch them, which gives a far more direct measure of test effectiveness than coverage does.
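To make the idea concrete, here is a hand-rolled toy version of mutation testing. It is not how Pitest or Stryker are invoked; those tools generate and run mutants automatically. The function, the mutant, and both tests are invented for illustration.

```python
def is_adult(age):
    """Original code under test."""
    return age >= 18


def mutant_is_adult(age):
    """Mutant: the '>=' operator has been replaced with '>'."""
    return age > 18


def weak_test(fn):
    # Executes every line of the function, so coverage looks complete,
    # but never checks the boundary value.
    return fn(30) is True and fn(5) is False


def strong_test(fn):
    # Same checks plus the boundary case at exactly 18.
    return weak_test(fn) and fn(18) is True


def mutant_killed(test, mutant):
    """A mutant is 'killed' when the test fails against it."""
    return not test(mutant)


print(mutant_killed(weak_test, mutant_is_adult))    # False: the mutant survives
print(mutant_killed(strong_test, mutant_is_adult))  # True: the mutant is killed
```

Both tests pass against the original and both give full line coverage, yet only the second one notices the injected bug. The share of mutants killed is usually reported as the mutation score.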

Automation ROI measures the value your automation provides relative to its cost. Calculate the time saved by automated regression testing versus manual execution, subtract the time spent maintaining the automation suite, and you have a concrete number you can present to leadership. If the number is negative — if maintenance costs more than the time saved — that is important information about the health of your automation strategy.
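The arithmetic above can be sketched as follows. The parameter names and the sample figures are assumptions chosen for illustration; "automated hours per run" here means the human time still needed to trigger and review an automated run.

```python
def automation_net_hours(manual_hours_per_run, automated_hours_per_run,
                         runs, maintenance_hours):
    """Hours saved by automation over a period, net of suite maintenance."""
    saved = (manual_hours_per_run - automated_hours_per_run) * runs
    return saved - maintenance_hours


# Made-up quarter: a 16-hour manual regression pass replaced by a 1-hour
# attended automated run, with 120 hours spent maintaining the suite.
print(automation_net_hours(16, 1, runs=20, maintenance_hours=120))  # 180
print(automation_net_hours(16, 1, runs=4, maintenance_hours=120))   # -60
```

The second call shows the negative case: with only four runs in the period, maintenance outweighs the time saved.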

How AI Changes the Quality Equation

AI does not eliminate the need for quality engineering — it introduces new categories of quality concern. LLM-powered features can hallucinate, violate safety boundaries, produce biased outputs, and fail in ways that traditional testing does not account for. The QA team that only validates functional correctness is missing an entire dimension of quality for AI-powered products.

AI tools also change how QA work is done. AI-assisted test generation, intelligent test selection (running only the tests relevant to a code change), and AI-powered defect prediction are genuine productivity multipliers. But they are tools that amplify existing quality strategy — they do not replace it. An AI tool that generates tests for a team with no testing strategy will generate tests that lack strategy. The intelligence has to come from the humans first.
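Test selection does not have to start with a model. As a simplified stand-in for what AI-based tools do, the sketch below picks tests from a coverage map: a hypothetical dictionary recording which source files each test exercises. The file and test names are invented.

```python
def select_tests(changed_files, coverage_map):
    """Return the tests whose covered files overlap the changed files."""
    changed = set(changed_files)
    return sorted(
        test for test, files in coverage_map.items() if changed & set(files)
    )


# Hypothetical coverage map: test name -> source files it exercises.
coverage_map = {
    "test_checkout": {"cart.py", "payment.py"},
    "test_login": {"auth.py"},
    "test_profile": {"auth.py", "profile.py"},
}

print(select_tests(["auth.py"], coverage_map))  # ['test_login', 'test_profile']
```

A human still has to decide which tests belong in the map and which changes are risky enough to warrant the full suite, which is the point of the paragraph above.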

Building a Quality Engineering Culture

The shift from “QA department” to “quality engineering culture” is the most important organizational change a technology team can make. In a QA department model, quality is one team’s responsibility. Developers write code, QA tests it, and bugs bounce back and forth across a handoff boundary. In a quality engineering model, quality is everyone’s responsibility. Developers write tests. Product owners define acceptance criteria that include quality attributes. Operations teams monitor production health. QA engineers become quality advocates who design testing strategies, build test infrastructure, and coach teams on quality practices.

The career evolution for QA professionals maps to this cultural shift: from tester (executes tests) to SDET (builds automation) to quality engineer (designs quality strategies) to quality advocate (influences organizational quality culture). Each level adds scope and influence. Each level requires less execution and more strategic thinking.

The Honest Caveats

The “quality engineering culture” I describe is aspirational for most organizations. Changing organizational culture is a multi-year effort that requires executive sponsorship, patient leadership, and tolerance for the messy transition period where old and new practices coexist. Most teams are not there yet, and that is normal.

The five metrics I recommend require data collection infrastructure that many teams lack. Defect escape rate requires tagging whether a bug was found pre- or post-release. MTTD requires correlating bug discovery dates with the commits that introduced them. Customer impact requires usage analytics. Building this infrastructure is an investment, and teams should start with whichever metric is easiest to implement and expand from there.

I am also aware that advocating for “stop counting bugs” can be misinterpreted as “bugs do not matter.” They do. The point is not to ignore defects but to measure quality in ways that drive better decisions about where to invest limited testing resources.

The Quality Professional’s Role in 2026

Quality professionals who can articulate the business value of their work — in metrics that leadership understands, connected to outcomes that the business cares about — are the ones who will thrive. The QA engineer who reports “we found 47 bugs this sprint” gets a nod. The quality engineer who reports “our defect escape rate dropped from 12% to 4%, which prevented approximately 3,000 customer-impacting incidents based on our traffic patterns” gets a seat at the strategy table. The work is the same. The communication is transformative.

Measuring and communicating quality value — including building dashboards for the five key metrics and presenting QA impact to leadership — is covered in the Quality Leadership track of my AI-Powered Testing Mastery course.