Playwright MCP + LLM Architecture: Building AI-Augmented Test Automation That Actually Works

Multiple senior QA engineers posted detailed architecture diagrams this week showing a new integration pattern: Playwright connected to an MCP (Model Context Protocol) server that bridges LLM capabilities directly into the test automation pipeline. Amol Navalagire and Vishal Kalal, both veteran automation engineers, independently described architectures where agentic AI generates test scenarios from requirements, LLMs analyze failures and suggest fixes, and MCP orchestrates the entire flow. This guide examines whether the architecture delivers on its promise — and what it actually costs to build.

I have been watching the MCP + LLM + automation convergence develop over the past year, and the pattern is now mature enough to evaluate seriously. The core idea is compelling: instead of treating test automation as a purely mechanical process (human writes test, machine executes test), you introduce an AI layer that understands requirements, generates tests, analyzes results, and adapts to changes. The question is whether the added complexity justifies the added capability.

The Architecture Explained

The architecture has four layers: an agentic AI layer, an MCP server, Playwright, and CI/CD. At the top, the agentic AI layer (powered by models like GPT-4, Claude, or open-source alternatives) takes requirements, user stories, or change descriptions as input and generates test scenarios, test code, and assertion logic. This is more than autocomplete: the model works from the stated testing intent and returns structured output that the rest of the pipeline can consume.

The MCP server sits in the middle as the orchestration layer. The Model Context Protocol (MCP) gives AI models a standardized way to reach external tools and data. In this architecture, the MCP server connects the LLM to Playwright’s APIs, your test data stores, your CI/CD pipeline, and your defect tracking system. It also manages the execution context: which environment to test against, which credentials to use, and which browser configuration to apply.
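
To make "execution context" concrete, here is a minimal sketch of how a server might model it. Everything in it is an assumption for illustration: the `ExecutionContext` shape, the context names, the URLs, and the secret names are hypothetical and not part of MCP or Playwright. Only the three browser names match the engines Playwright ships.

```typescript
// Hypothetical execution-context registry; not an MCP or Playwright API.
type BrowserName = "chromium" | "firefox" | "webkit";

interface ExecutionContext {
  baseUrl: string;        // environment under test
  credentialsRef: string; // name of a secret in your vault, never the secret itself
  browser: BrowserName;
  headless: boolean;
}

// Placeholder values: swap in your own environments.
const contexts: Record<string, ExecutionContext> = {
  staging: {
    baseUrl: "https://staging.example.test",
    credentialsRef: "qa-staging-user",
    browser: "chromium",
    headless: true,
  },
  "cross-browser": {
    baseUrl: "https://staging.example.test",
    credentialsRef: "qa-staging-user",
    browser: "webkit",
    headless: true,
  },
};

// Resolve a context by name and fail loudly on typos, so the model
// cannot silently run against an environment nobody configured.
function resolveContext(name: string): ExecutionContext {
  const ctx = contexts[name];
  if (!ctx) {
    throw new Error(`Unknown execution context: ${name}`);
  }
  return ctx;
}
```

Storing a reference to a credential rather than the credential itself keeps secrets out of anything the model can read back.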

Playwright serves as the execution engine — the layer that actually interacts with browsers, makes API calls, and captures results. Playwright’s rich API (network interception, browser context isolation, trace capture) gives the AI layer deep capabilities for both test execution and result analysis.

The CI/CD layer (Jenkins or GitHub Actions, for example) orchestrates scheduled runs, triggers test generation for new features, and gates deployments on quality thresholds.
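
Gating on quality thresholds can be as simple as a pure function that a pipeline step calls after the run. The sketch below is one possible shape, not a Jenkins or GitHub Actions feature: `RunSummary`, `GateThresholds`, and the threshold values are assumptions, and "flaky" here means a test that failed first and passed on retry.

```typescript
// Hypothetical summary of one CI run.
interface RunSummary {
  passed: number;
  failed: number;
  flaky: number; // failed at first, passed on retry
}

interface GateThresholds {
  minPassRate: number; // fraction between 0 and 1
  maxFlaky: number;    // absolute number of flaky tests tolerated
}

interface GateDecision {
  allowed: boolean;
  reason: string;
}

function gateDeployment(run: RunSummary, t: GateThresholds): GateDecision {
  const total = run.passed + run.failed + run.flaky;
  if (total === 0) {
    return { allowed: false, reason: "no tests ran" };
  }
  // Flaky tests did eventually pass, so they count toward the pass rate
  // but are capped separately to keep retries from hiding instability.
  const passRate = (run.passed + run.flaky) / total;
  if (passRate < t.minPassRate) {
    return { allowed: false, reason: `pass rate ${(passRate * 100).toFixed(1)}% is below threshold` };
  }
  if (run.flaky > t.maxFlaky) {
    return { allowed: false, reason: `${run.flaky} flaky tests exceed limit of ${t.maxFlaky}` };
  }
  return { allowed: true, reason: "all thresholds met" };
}

const decision = gateDeployment(
  { passed: 97, failed: 2, flaky: 1 },
  { minPassRate: 0.95, maxFlaky: 3 },
);
console.log(decision.allowed, decision.reason);
```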

What MCP Actually Does for Testing

MCP’s value is centralized context management. Without MCP, connecting an LLM to your testing infrastructure requires custom integration for every tool: a custom wrapper for Playwright, another for your test data API, another for Jira, another for your CI system. MCP standardizes these connections. The LLM interacts with a single MCP server, which routes requests to the appropriate tool.
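
The routing idea can be sketched in a few lines. This is an in-memory model, not the MCP SDK: a real server speaks JSON-RPC and exposes tools through `tools/list` and `tools/call`, and its handlers are normally asynchronous. The `ToolRouter` class and the tool names `playwright_run_test` and `jira_create_issue` are hypothetical.

```typescript
// Simplified model of MCP-style tool routing. Not the MCP SDK.
interface ToolCallRequest {
  name: string;
  arguments: Record<string, unknown>;
}

interface ToolCallResult {
  content: { type: "text"; text: string }[];
  isError: boolean;
}

// Synchronous for brevity; real handlers would return a Promise.
type ToolHandler = (args: Record<string, unknown>) => string;

class ToolRouter {
  private readonly handlers = new Map<string, ToolHandler>();

  register(name: string, handler: ToolHandler): void {
    this.handlers.set(name, handler);
  }

  // Rough analogue of tools/list: what the model is allowed to call.
  listTools(): string[] {
    return Array.from(this.handlers.keys()).sort();
  }

  // Rough analogue of tools/call: one entry point, many backends.
  call(request: ToolCallRequest): ToolCallResult {
    const handler = this.handlers.get(request.name);
    if (!handler) {
      return { content: [{ type: "text", text: `Unknown tool: ${request.name}` }], isError: true };
    }
    try {
      return { content: [{ type: "text", text: handler(request.arguments) }], isError: false };
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err);
      return { content: [{ type: "text", text: message }], isError: true };
    }
  }
}

// Hypothetical tools standing in for the Playwright and Jira wrappers.
const router = new ToolRouter();
router.register("playwright_run_test", (args) => `queued ${String(args["file"])}`);
router.register("jira_create_issue", (args) => `created issue: ${String(args["summary"])}`);
```

The point of the single `call` entry point is that adding a new backend means registering one more handler, not teaching the model a new integration.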

For test automation specifically, MCP enables three capabilities that are difficult to build without it. First, context-aware test generation: the LLM can query your existing test suite, your page objects, and your application structure through MCP before generating new tests, producing code that matches your patterns rather than generic boilerplate. Second, intelligent failure analysis: when a test fails, the LLM can access the failure trace, the recent code changes, and the application logs through MCP to generate a root cause hypothesis. Third, self-healing: when a selector breaks, the MCP layer can provide the AI with the current page structure, the broken selector, and the test’s intent, enabling intelligent selector repair.
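
For the failure-analysis case, most of the work is deciding what context to hand the model. The sketch below assembles a prompt from the pieces named above. The `FailureContext` shape and the prompt wording are my own assumptions, and how you fetch the trace, commits, and logs depends on your tooling.

```typescript
// Hypothetical bundle of what the model sees for one failed test.
interface FailureContext {
  testTitle: string;
  errorMessage: string;
  recentCommits: string[]; // one-line commit summaries, newest first
  logLines: string[];      // application log, oldest first
}

// Build a bounded prompt: only the tail of the log is included so a
// noisy application cannot blow up the token count.
function buildFailurePrompt(ctx: FailureContext, maxLogLines = 20): string {
  const logs = maxLogLines > 0 ? ctx.logLines.slice(-maxLogLines) : [];
  return [
    `Test: ${ctx.testTitle}`,
    `Error: ${ctx.errorMessage}`,
    "Recent commits:",
    ...ctx.recentCommits.map((c) => `- ${c}`),
    "Last log lines:",
    ...logs.map((l) => `  ${l}`),
    "Give one root-cause hypothesis and cite the evidence for it.",
  ].join("\n");
}

const prompt = buildFailurePrompt(
  {
    testTitle: "checkout > places an order",
    errorMessage: "Timeout waiting for locator('#submit-btn')",
    recentCommits: ["Rename checkout button ids", "Bump payment SDK"],
    logLines: ["GET /cart 200", "GET /checkout 200", "POST /orders 500"],
  },
  2,
);
console.log(prompt);
```

Asking for a single hypothesis with evidence keeps the report reviewable; a human still decides whether the hypothesis is right.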

Self-Healing Selectors: Reality Check

Self-healing is the most marketed and least understood capability. The idea is simple: when a locator fails, the AI analyzes the page and finds the correct new locator. In practice, this works well for cosmetic changes — a class name renamed, an ID updated, an element’s position shifted within the same parent. It works less reliably for structural changes where an element has been moved to a different part of the DOM, wrapped in new containers, or replaced with a fundamentally different component.
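
The difference between cosmetic and structural changes shows up clearly in a toy version of selector repair. The sketch below is a deterministic attribute-similarity heuristic that I am using as a stand-in for the model's ranking step. It is not how any particular vendor or LLM does it, and the `ElementSnapshot` shape, weights, and threshold are arbitrary assumptions.

```typescript
// Hypothetical fingerprint of an element, captured on the last passing run
// (for the old element) or from the current page (for candidates).
interface ElementSnapshot {
  tag: string;
  id?: string;
  testId?: string;
  text?: string;
  classes: string[];
}

// Weighted overlap between the old element and a candidate. Weights are arbitrary.
function similarity(old: ElementSnapshot, candidate: ElementSnapshot): number {
  let score = 0;
  if (old.tag === candidate.tag) score += 1;
  if (old.testId !== undefined && old.testId === candidate.testId) score += 3;
  if (old.text !== undefined && old.text === candidate.text) score += 2;
  if (old.id !== undefined && old.id === candidate.id) score += 2;
  const candidateClasses = new Set(candidate.classes);
  const shared = old.classes.filter((c) => candidateClasses.has(c)).length;
  score += Math.min(shared, 2) * 0.5;
  return score;
}

// Return the best candidate, or null when nothing is similar enough.
// A null result is the signal to escalate to a human.
function healSelector(
  old: ElementSnapshot,
  candidates: ElementSnapshot[],
  minScore = 3,
): ElementSnapshot | null {
  let best: ElementSnapshot | null = null;
  let bestScore = 0;
  for (const candidate of candidates) {
    const score = similarity(old, candidate);
    if (score > bestScore) {
      best = candidate;
      bestScore = score;
    }
  }
  return bestScore >= minScore ? best : null;
}

const oldButton: ElementSnapshot = {
  tag: "button", id: "submit-btn", text: "Place order", classes: ["btn", "btn-primary"],
};

// Cosmetic change: only the id was renamed, so tag, text, and classes still match.
const renamed: ElementSnapshot = {
  tag: "button", id: "checkout-submit", text: "Place order", classes: ["btn", "btn-primary"],
};

// Structural change: a different component with different text.
const replaced: ElementSnapshot = { tag: "div", text: "Order now", classes: ["cta"] };
```

With these inputs, `healSelector(oldButton, [renamed])` returns the renamed button, while `healSelector(oldButton, [replaced])` returns `null`. Returning `null` rather than the least-bad match matters: a confident wrong repair is worse than a failing test.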

In my testing, self-healing correctly resolved approximately 65% of broken selectors automatically. The remaining 35% required human intervention, often because the UI change reflected a genuine functional change that the test needed to accommodate, not just a selector update. This is still a significant maintenance reduction, but it is not the “set it and forget it” solution that some vendors promise.

Cost-Benefit Analysis

The costs are real and ongoing. LLM API calls for test generation and failure analysis accumulate — budget $200-500 per month for a medium-sized project using cloud APIs, or invest in GPU hardware for local model execution. The MCP server requires setup and maintenance — it is another service in your infrastructure that needs monitoring, updating, and debugging. The learning curve for your team is significant: understanding MCP configuration, prompt engineering for test generation, and debugging AI-assisted failures are new skills that take months to develop.
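
To sanity-check an API budget for your own project, the arithmetic is simple enough to script. The prices and usage figures below are placeholders I picked for illustration, not any provider's actual rates; substitute your provider's current pricing and your own call volumes.

```typescript
interface LlmUsage {
  callsPerMonth: number;
  avgInputTokens: number;  // prompt plus attached context per call
  avgOutputTokens: number; // generated test code or analysis per call
}

interface TokenPricing {
  inputPerMillionUsd: number;
  outputPerMillionUsd: number;
}

// Monthly cost = (total input tokens * input price) + (total output tokens * output price),
// with both prices quoted per million tokens.
function estimateMonthlyCostUsd(usage: LlmUsage, pricing: TokenPricing): number {
  const inputMillions = (usage.callsPerMonth * usage.avgInputTokens) / 1e6;
  const outputMillions = (usage.callsPerMonth * usage.avgOutputTokens) / 1e6;
  return inputMillions * pricing.inputPerMillionUsd + outputMillions * pricing.outputPerMillionUsd;
}

// Placeholder numbers: 3,000 calls a month, 20k tokens in and 1.5k tokens out per call.
const estimate = estimateMonthlyCostUsd(
  { callsPerMonth: 3000, avgInputTokens: 20000, avgOutputTokens: 1500 },
  { inputPerMillionUsd: 3, outputPerMillionUsd: 15 },
);
console.log(estimate.toFixed(2)); // 247.50
```

In this example input tokens account for most of the bill (60 million input tokens against 4.5 million output tokens), which is typical when every call carries page structure, traces, or existing test code as context.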

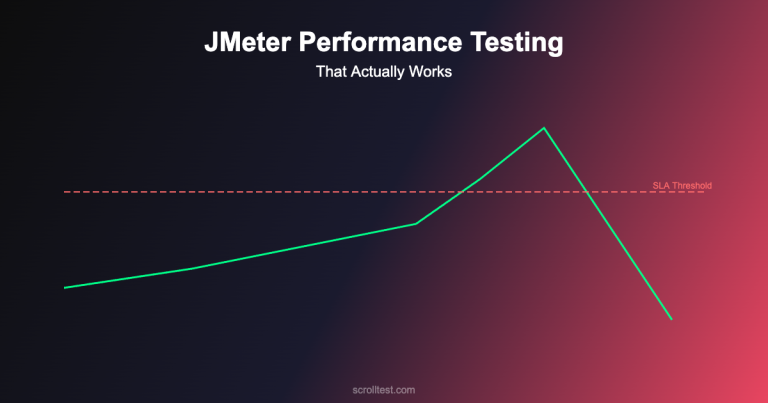

The benefits are equally real. Teams I have worked with report 30-40% faster test creation for new features, a 50-60% reduction in selector maintenance effort from self-healing, and richer failure reports in which AI-generated root cause hypotheses shorten investigation time. Whether the benefits outweigh the costs depends on your team’s size, your application’s rate of change, and your existing automation maturity.

The Honest Caveats

This architecture is not for every team. If your test suite is under 200 tests, the complexity overhead is almost certainly not justified. If your team does not have at least one engineer comfortable with AI/ML tooling, the learning curve will be painful. If your application changes slowly, the self-healing and intelligent generation capabilities provide less value because manual maintenance is already manageable.

The productivity numbers I cited (30-40% faster creation, 50-60% maintenance reduction) come from teams with mature Playwright frameworks who added the MCP + LLM layer on top. Teams trying to build everything simultaneously — Playwright fundamentals and the AI layer — consistently report worse outcomes. Build the foundation first, add intelligence later.

MCP itself is still evolving. The protocol specification has been updated multiple times in 2025-2026, and production implementations require keeping up with these changes. Expect some instability and breaking changes as the ecosystem matures.

Building the full Playwright + MCP + LLM architecture — from MCP server setup to AI-augmented test generation — is the advanced capstone project in my AI-Powered Testing Mastery course, Modules 11-12.