Building an Autonomous Testing Agent With Playwright + LLMs: From Scripts to Self-Directed Exploration

Anant Jain, Principal QA Engineer at a Series-C fintech company, dropped a line during a testing architecture review that rewired how I think about automation:

“We stopped writing test scripts. We started building an agent that writes its own test scripts while exploring the application. Our escaped defect rate dropped 62% in one quarter.”

That statement sounds like exaggeration until you see the architecture behind it. For the past six months, I have been building, iterating, and battle-testing an autonomous testing agent that combines Playwright for browser control, a locally-hosted LLM via Ollama for decision making, and a memory layer that prevents the agent from going in circles. The result is a system that lands on a page, reads the DOM, decides what to interact with, executes actions, captures evidence, and generates bug reports — all without a single hardcoded test flow.

This is not theoretical. This is running in CI pipelines today. And in this article, I am going to show you exactly how to build one from scratch.

If you have been following the evolution of Playwright-based test agents and the broader shift toward AI agent evaluation in QA, you already know the direction the industry is heading. This article gives you the implementation.

The Conceptual Leap: Scripted Tests vs. Autonomous Agents

Traditional test automation is deterministic. You write a script that says: go to this URL, click this button, fill this form, assert this value. The script does exactly what you told it. Nothing more. Nothing less. If the UI changes, the script breaks. If a new feature appears, the script ignores it. If there is a critical bug hiding behind an interaction path you did not anticipate, the script will never find it.

An autonomous testing agent inverts this model entirely. Instead of following a predetermined path, the agent:

- Observes the current page state by reading the DOM

- Reasons about what actions are possible and which are most valuable to explore

- Decides what to click, fill, or interact with next

- Executes the action via Playwright

- Records the result, takes screenshots, and updates its memory

- Repeats until it has explored the application sufficiently or found anomalies

The difference is not incremental. It is categorical. A scripted test validates what you already know. An autonomous agent discovers what you do not know. Anant Jain put it bluntly: “Scripted tests confirm your assumptions. Autonomous agents challenge them.”
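The observe, decide, execute cycle listed above can be sketched without a browser or a model. This is a minimal illustration, not the agent itself: `explore`, the toy `page` dictionary, and the three stand-in functions are hypothetical names used only to show the control flow.

```python
from typing import Callable


def explore(observe: Callable[[], dict],
            decide: Callable[[dict, list], dict],
            execute: Callable[[dict], None],
            max_steps: int = 10) -> list[dict]:
    """Run observe -> decide -> execute until 'done' or the step budget is spent."""
    history: list[dict] = []
    for _ in range(max_steps):
        state = observe()
        decision = decide(state, history)
        if decision["action"] == "done":
            break
        execute(decision)
        history.append(decision)
    return history


# Toy stand-ins: a "page" with three buttons and a policy that clicks each once.
page = {"buttons": ["Save", "Cancel", "Help"]}
clicked: list[str] = []


def observe() -> dict:
    return page


def decide(state: dict, history: list) -> dict:
    remaining = [b for b in state["buttons"] if b not in clicked]
    if not remaining:
        return {"action": "done"}
    return {"action": "click", "target": remaining[0]}


def execute(decision: dict) -> None:
    clicked.append(decision["target"])


history = explore(observe, decide, execute)
print([step["target"] for step in history])  # ['Save', 'Cancel', 'Help']
```

In the real agent, `observe` corresponds to reading the DOM, `decide` to the LLM call, and `execute` to a Playwright command.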

The Full Technical Stack

Before we dive into code, here is the complete stack and what each component does:

| Component | Role | Why This Choice |
|---|---|---|
| Playwright (Python) | Browser automation and DOM interaction | Best cross-browser support, native async, excellent selector engine |
| Ollama (Llama 3 / Mistral) | Local LLM for decision making | No API costs, data stays local, fast inference for action selection |
| Python 3.11+ | Orchestration layer | Rich ecosystem, async support, easy Playwright and LLM integration |
| Allure | Test reporting and evidence collection | Industry-standard reporting with screenshot and step attachment support |
| Jenkins | CI/CD pipeline execution | Widely adopted, plugin ecosystem, scheduled autonomous runs |
| openpyxl | Excel test case generation | Stakeholder-friendly output format for generated test cases |

The architecture is deliberately modular. You can swap Ollama for OpenAI, replace Jenkins with GitHub Actions, or use pytest-html instead of Allure. The patterns remain the same.
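One way to keep the LLM swappable is to hide it behind a small interface. The sketch below is an assumption about how that could look, not part of the original code: `DecisionBackend`, `ScriptedBackend`, and `next_action` are hypothetical names, and the scripted backend simply replays canned decisions so the rest of the agent can be exercised without any model.

```python
from typing import Protocol


class DecisionBackend(Protocol):
    """Anything that turns a prompt into an action dictionary."""

    def decide(self, prompt: str) -> dict: ...


class ScriptedBackend:
    """Test double that replays canned decisions instead of calling a model."""

    def __init__(self, decisions: list[dict]):
        self._decisions = iter(decisions)

    def decide(self, prompt: str) -> dict:
        # Once the script runs out, tell the agent to stop.
        return next(self._decisions, {"action": "done"})


def next_action(backend: DecisionBackend, prompt: str) -> dict:
    # The caller depends only on the interface, not on Ollama or OpenAI.
    return backend.decide(prompt)


backend = ScriptedBackend([{"action": "click", "element_index": 2}])
first = next_action(backend, "page context...")
second = next_action(backend, "page context...")
print(first, second)
```

An Ollama-backed or OpenAI-backed class would implement the same `decide` method, leaving the exploration loop unchanged.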

Project Structure and Setup

Here is the directory layout for the autonomous agent:

autonomous-test-agent/
├── agent/
│   ├── __init__.py
│   ├── explorer.py          # Core exploration loop
│   ├── dom_reader.py        # DOM extraction and simplification
│   ├── llm_brain.py         # LLM integration via Ollama
│   ├── memory.py            # State tracking and deduplication
│   ├── auth_handler.py      # Smart login/auth flow handling
│   ├── bug_reporter.py      # Auto-generated bug reports
│   ├── visual_analyzer.py   # Screenshot comparison for UI anomalies
│   └── excel_generator.py   # Test case export to Excel
├── config/
│   ├── settings.yaml        # URLs, credentials, LLM config
│   └── selectors.yaml       # Optional known selectors for auth flows
├── reports/
│   ├── allure-results/
│   └── generated-tests/
├── screenshots/
├── run_agent.py             # Entry point
├── Jenkinsfile
└── requirements.txt

Install the dependencies:

pip install playwright ollama allure-pytest openpyxl pillow pyyaml
playwright install chromium

How the Agent Reads the DOM to Understand Page Context

The foundation of the entire system is DOM reading. The agent cannot make intelligent decisions if it does not understand what is on the page. But sending the full DOM to an LLM is wasteful and slow. We need a simplified, actionable representation.

# agent/dom_reader.py
# Extracts a simplified, LLM-friendly representation of the current page DOM.
# Focuses on interactive elements that the agent can act upon.

from playwright.async_api import Page


async def extract_interactive_elements(page: Page) -> list[dict]:
    # JavaScript runs in browser context to find all interactive elements
    elements = await page.evaluate('''
        () => {
            const interactable = [];
            const selectors = 'a, button, input, select, textarea, [role="button"], [role="link"], [onclick]';
            const nodes = document.querySelectorAll(selectors);
            nodes.forEach((el) => {
                const rect = el.getBoundingClientRect();
                // Skip elements that are not visible
                if (rect.width === 0 || rect.height === 0) return;
                if (window.getComputedStyle(el).display === 'none') return;
                interactable.push({
                    // Position in the returned list (not in the unfiltered
                    // NodeList), so the index the LLM picks maps directly
                    // onto this array even when hidden elements were skipped
                    index: interactable.length,
                    tag: el.tagName.toLowerCase(),
                    type: el.type || null,
                    text: (el.textContent || '').trim().slice(0, 100),
                    placeholder: el.placeholder || null,
                    name: el.name || null,
                    id: el.id || null,
                    href: el.href || null,
                    aria_label: el.getAttribute('aria-label') || null,
                    // className is not a string on SVG elements, so guard the split
                    classes: (typeof el.className === 'string' && el.className)
                        ? el.className.split(' ').slice(0, 3).join(' ')
                        : null,
                    value: el.value || null
                });
            });
            return interactable;
        }
    ''')
    return elements


async def get_page_summary(page: Page) -> dict:
    # Builds a complete page context object for the LLM
    title = await page.title()
    url = page.url
    elements = await extract_interactive_elements(page)

    # Extract visible text headings for additional context
    headings = await page.evaluate('''
        () => {
            return Array.from(document.querySelectorAll('h1, h2, h3'))
                .map(h => h.textContent.trim())
                .filter(t => t.length > 0)
                .slice(0, 10);
        }
    ''')

    # Detect forms on the page
    form_count = await page.evaluate("() => document.querySelectorAll('form').length")

    return {
        "title": title,
        "url": url,
        "headings": headings,
        "form_count": form_count,
        "interactive_elements": elements,
        "element_count": len(elements)
    }

This gives the LLM a compact, structured view of the page. Instead of parsing thousands of DOM nodes, it sees a list of actionable elements with their labels, types, and attributes. The get_page_summary function becomes the agent’s eyes.

LLM-Based Decision Making: No Fixed Flows

Here is where the magic happens. The LLM receives the page context and decides what to do next. There are no hardcoded flows. The agent reasons about the page and picks the most valuable next action.

# agent/llm_brain.py
# The decision-making core of the autonomous agent.
# Sends page context to a local Ollama LLM and receives structured action decisions.

import json

import ollama

SYSTEM_PROMPT = '''You are an autonomous QA testing agent exploring a web application.
Given the current page context (URL, title, headings, interactive elements), decide the
single best next action to take. Your goal is to explore the application thoroughly,
find bugs, and test edge cases.

Rules:
- Prioritize unexplored elements and pages
- Test forms with both valid and invalid data
- Look for error states, broken links, and UI inconsistencies
- Avoid repeating the same action on the same element
- If you see a login form and have not authenticated, handle login first

Respond ONLY with valid JSON:
{
  "action": "click" | "fill" | "select" | "navigate" | "screenshot" | "done",
  "element_index": <int or null>,
  "value": "<string value for fill/select actions or null>",
  "reasoning": "<why you chose this action>"
}'''


def decide_next_action(page_summary: dict, memory_context: str) -> dict:
    # Construct the prompt with current page state and memory
    prompt = f'''Current page state:
URL: {page_summary['url']}
Title: {page_summary['title']}
Headings: {page_summary['headings']}
Forms on page: {page_summary['form_count']}
Interactive elements ({page_summary['element_count']} total):
'''

    # Add each element as a numbered option the LLM can choose
    # (capped at 50 to keep the prompt small)
    for el in page_summary['interactive_elements'][:50]:
        prompt += f"[{el['index']}] <{el['tag']}> "
        if el['text']:
            prompt += f"text='{el['text'][:60]}' "
        if el['type']:
            prompt += f"type='{el['type']}' "
        if el['placeholder']:
            prompt += f"placeholder='{el['placeholder']}' "
        if el['aria_label']:
            prompt += f"aria='{el['aria_label']}' "
        prompt += "\n"

    prompt += f"\nExploration memory:\n{memory_context}\n"
    prompt += "\nDecide the next action:"

    response = ollama.chat(
        model='llama3',
        messages=[
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": prompt}
        ],
        format="json"
    )

    return json.loads(response['message']['content'])

The LLM sees the page as a list of numbered interactive elements and picks one. The reasoning field is critical: it creates an audit trail of why the agent made each decision, which is invaluable for debugging and for generated bug reports. If you have explored Playwright CLI integrations with AI tools, this pattern will feel familiar but significantly more autonomous.
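Model output is not guaranteed to match the requested schema, so it can help to validate a decision before acting on it. The following is a minimal sketch under stated assumptions: `parse_decision`, `ALLOWED_ACTIONS`, and the choice of a harmless `screenshot` fallback are illustrative and not part of the original module.

```python
import json

ALLOWED_ACTIONS = {"click", "fill", "select", "navigate", "screenshot", "done"}


def parse_decision(raw: str, valid_indices: set[int]) -> dict:
    """Parse a model reply; fall back to a harmless screenshot on bad output."""
    fallback = {"action": "screenshot", "element_index": None,
                "value": None, "reasoning": "fallback: invalid model output"}
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return fallback
    if not isinstance(data, dict) or data.get("action") not in ALLOWED_ACTIONS:
        return fallback
    idx = data.get("element_index")
    # Element actions need an index that was actually shown to the model.
    if data["action"] in {"click", "fill", "select"}:
        if type(idx) is not int or idx not in valid_indices:
            return fallback
    return {"action": data["action"], "element_index": idx,
            "value": data.get("value"), "reasoning": data.get("reasoning", "")}


shown = {0, 1, 2}
print(parse_decision('{"action": "click", "element_index": 1}', shown)["action"])
print(parse_decision('{"action": "click", "element_index": 9}', shown)["action"])
print(parse_decision("not json at all", shown)["action"])
```

A fallback that cannot change application state keeps a malformed reply from ending the run or clicking a random element; the step budget still bounds how often it can repeat.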

Memory Management: Avoiding Infinite Loops

Without memory, the agent will click the same button forever. The memory layer tracks visited URLs, executed actions, and discovered states to ensure the agent keeps exploring new territory.

# agent/memory.py
# Tracks exploration state to prevent the agent from revisiting the same
# pages and repeating the same actions. Uses URL + action hashing for dedup.

import hashlib
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class ExplorationMemory:
    visited_urls: set = field(default_factory=set)
    executed_actions: set = field(default_factory=set)
    discovered_bugs: list = field(default_factory=list)
    action_history: list = field(default_factory=list)
    max_actions_per_page: int = 15
    max_total_actions: int = 200

    def _action_hash(self, url: str, action: str, element_index: int) -> str:
        # Creates a unique hash for each URL + action + element combination
        raw = f"{url}|{action}|{element_index}"
        return hashlib.md5(raw.encode()).hexdigest()

    def has_visited(self, url: str) -> bool:
        # Normalize URL by removing query params for comparison
        base_url = url.split('?')[0]
        return base_url in self.visited_urls

    def record_visit(self, url: str):
        base_url = url.split('?')[0]
        self.visited_urls.add(base_url)

    def has_executed(self, url: str, action: str, element_index: int) -> bool:
        action_id = self._action_hash(url, action, element_index)
        return action_id in self.executed_actions

    def record_action(self, url: str, action: str, element_index: int, reasoning: str):
        action_id = self._action_hash(url, action, element_index)
        self.executed_actions.add(action_id)
        self.action_history.append({
            "timestamp": datetime.now().isoformat(),
            "url": url,
            "action": action,
            "element_index": element_index,
            "reasoning": reasoning
        })

    def get_context_summary(self) -> str:
        # Returns a concise summary for the LLM prompt
        recent = self.action_history[-10:]
        summary = f"Visited {len(self.visited_urls)} unique pages. "
        summary += f"Executed {len(self.executed_actions)} unique actions. "
        summary += f"Found {len(self.discovered_bugs)} potential bugs.\n"
        summary += "Recent actions:\n"
        for a in recent:
            summary += f" - {a['action']} on element {a['element_index']} at {a['url']}: {a['reasoning']}\n"
        return summary

    def is_exploration_complete(self) -> bool:
        return len(self.executed_actions) >= self.max_total_actions

The memory system stores an MD5 hash of each URL + action + element combination in a set, which gives O(1) average-case deduplication lookups. The get_context_summary method feeds recent history back to the LLM so it knows what has already been tried. This is the mechanism that turns a random clicker into a systematic explorer.
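To see how the deduplication behaves, here is a small standalone sketch of the same URL|action|element hashing scheme. The `action_key` and `try_record` helpers and the example URLs are hypothetical and exist only for this demonstration.

```python
import hashlib


def action_key(url: str, action: str, element_index: int) -> str:
    # Same "url|action|element" scheme as _action_hash in memory.py.
    return hashlib.md5(f"{url}|{action}|{element_index}".encode()).hexdigest()


seen: set[str] = set()


def try_record(url: str, action: str, element_index: int) -> bool:
    """Return True and remember the action if it is new, False if it is a repeat."""
    key = action_key(url, action, element_index)
    if key in seen:
        return False
    seen.add(key)
    return True


results = [
    try_record("https://app.example/cart", "click", 3),
    try_record("https://app.example/cart", "click", 3),         # exact repeat
    try_record("https://app.example/cart", "fill", 3),          # same element, new action
    try_record("https://app.example/cart?page=2", "click", 3),  # query string differs
]
print(results)  # [True, False, True, True]
```

The last case is worth noticing: action hashes use the full URL, while visited-page tracking strips the query string, so the same button on two paginated views counts as two distinct actions.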

Smart Login and Authentication Flow Handling

Most web applications require authentication. The agent needs to detect login pages and handle them before it can explore the rest of the application.

# agent/auth_handler.py
# Detects login pages and handles authentication automatically.
# Uses heuristic detection (form fields, page keywords) rather than hardcoded URLs.

import yaml
from playwright.async_api import Page


async def detect_login_page(page: Page) -> bool:
    # Check for common login indicators in the DOM
    indicators = await page.evaluate('''
        () => {
            const html = document.body.innerText.toLowerCase();
            const hasPasswordField = document.querySelector('input[type="password"]') !== null;
            const hasLoginKeywords = ['sign in', 'log in', 'login', 'username', 'email'].some(
                kw => html.includes(kw)
            );
            return { hasPasswordField, hasLoginKeywords };
        }
    ''')
    return indicators['hasPasswordField'] and indicators['hasLoginKeywords']


async def perform_login(page: Page, config_path: str = "config/settings.yaml"):
    # Load credentials from config file
    with open(config_path, 'r') as f:
        config = yaml.safe_load(f)

    creds = config.get('auth', {})
    username = creds.get('username', '')
    password = creds.get('password', '')

    # Find and fill username/email field
    username_selectors = [
        'input[name="username"]', 'input[name="email"]',
        'input[type="email"]', 'input[id="username"]',
        'input[id="email"]', 'input[name="login"]'
    ]
    for selector in username_selectors:
        el = await page.query_selector(selector)
        if el:
            await el.fill(username)
            break

    # Find and fill password field
    password_el = await page.query_selector('input[type="password"]')
    if password_el:
        await password_el.fill(password)

    # Find and click submit button
    submit_selectors = [
        'button[type="submit"]', 'input[type="submit"]',
        'button:has-text("Log in")', 'button:has-text("Sign in")'
    ]
    for selector in submit_selectors:
        el = await page.query_selector(selector)
        if el:
            await el.click()
            break

    # Wait for navigation after login
    await page.wait_for_load_state('networkidle', timeout=10000)

The auth handler uses heuristic detection rather than hardcoded URLs. It looks for password fields and login-related keywords in the DOM, so the same code works across different applications without per-application URL configuration. Only the credentials in settings.yaml change.
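The detection rule itself is simple enough to try without a browser. The sketch below restates it as a pure function so its behavior, including a false positive, is easy to see; `looks_like_login` and the sample page texts are hypothetical.

```python
LOGIN_KEYWORDS = ("sign in", "log in", "login", "username", "email")


def looks_like_login(has_password_field: bool, visible_text: str) -> bool:
    """Same rule as detect_login_page: a password input plus a login keyword."""
    text = visible_text.lower()
    return has_password_field and any(kw in text for kw in LOGIN_KEYWORDS)


login_page = looks_like_login(True, "Sign in to your account")
newsletter = looks_like_login(False, "Enter your email to subscribe")
registration = looks_like_login(True, "Create account  Email  Password")

print(login_page, newsletter, registration)  # True False True
```

The registration form is flagged as a login page because it also has a password field and the word "email". If that matters for your application, tightening the keyword list or checking the form's field count would be a reasonable next step.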

Auto-Generating Bug Reports With Repro Steps and Screenshots

When the agent encounters an anomaly — a JavaScript error, an unexpected HTTP status, a visual regression, or an element that behaves unexpectedly — it automatically generates a structured bug report.

# agent/bug_reporter.py
# Generates structured bug reports with reproduction steps,
# screenshots, and environment details when anomalies are detected.

import json
import os
from dataclasses import asdict, dataclass
from datetime import datetime


@dataclass
class BugReport:
    title: str
    severity: str
    url: str
    description: str
    repro_steps: list
    screenshot_path: str
    console_errors: list
    network_errors: list
    timestamp: str = ""

    def __post_init__(self):
        if not self.timestamp:
            self.timestamp = datetime.now().isoformat()


class BugReporter:
    def __init__(self, output_dir: str = "reports/bugs"):
        self.output_dir = output_dir
        os.makedirs(output_dir, exist_ok=True)
        self.bugs = []

    async def capture_bug(self, page, memory, title, severity, description):
        # Use one 1-based id for both the screenshot and the JSON report
        # so the two files for a bug share the same number
        bug_id = len(self.bugs) + 1

        # Take screenshot as evidence
        screenshot_name = f"bug_{bug_id}_{datetime.now().strftime('%Y%m%d_%H%M%S')}.png"
        screenshot_path = os.path.join(self.output_dir, screenshot_name)
        await page.screenshot(path=screenshot_path, full_page=True)

        # Capture console errors from the injected listener
        console_errors = await page.evaluate('''
            () => window.__capturedErrors || []
        ''')

        # Build reproduction steps from action history
        repro_steps = []
        for i, action in enumerate(memory.action_history[-10:], 1):
            repro_steps.append(
                f"{i}. {action['action'].upper()} element [{action['element_index']}] "
                f"on {action['url']} ({action['reasoning']})"
            )

        bug = BugReport(
            title=title,
            severity=severity,
            url=page.url,
            description=description,
            repro_steps=repro_steps,
            screenshot_path=screenshot_path,
            console_errors=console_errors,
            network_errors=[]
        )
        self.bugs.append(bug)

        # Save individual bug report as JSON
        report_path = os.path.join(self.output_dir, f"bug_{bug_id}.json")
        with open(report_path, 'w') as f:
            json.dump(asdict(bug), f, indent=2)

        return bug

    def generate_summary(self) -> str:
        # Produces a summary of all discovered bugs
        if not self.bugs:
            return "No bugs discovered during this exploration session."

        summary = f"Discovered {len(self.bugs)} potential bugs:\n\n"
        for i, bug in enumerate(self.bugs, 1):
            summary += f"Bug #{i}: [{bug.severity}] {bug.title}\n"
            summary += f" URL: {bug.url}\n"
            summary += f" Screenshot: {bug.screenshot_path}\n\n"
        return summary

The reproduction steps are automatically constructed from the agent’s action history. This means every bug report includes the most recent sequence of steps (up to ten) that led to the issue, something that manual testers often struggle to document consistently.
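To make the output concrete, here is the step-formatting logic applied to a short, made-up action history. The `build_repro_steps` helper and the sample entries are hypothetical; the format string mirrors the one in capture_bug.

```python
def build_repro_steps(action_history: list[dict], last_n: int = 10) -> list[str]:
    """Format the most recent actions as numbered reproduction steps."""
    steps = []
    for i, a in enumerate(action_history[-last_n:], 1):
        steps.append(
            f"{i}. {a['action'].upper()} element [{a['element_index']}] "
            f"on {a['url']} ({a['reasoning']})"
        )
    return steps


sample_history = [
    {"action": "fill", "element_index": 4,
     "url": "https://app.example/signup", "reasoning": "try an invalid email"},
    {"action": "click", "element_index": 7,
     "url": "https://app.example/signup", "reasoning": "submit the form"},
]

for line in build_repro_steps(sample_history):
    print(line)
# 1. FILL element [4] on https://app.example/signup (try an invalid email)
# 2. CLICK element [7] on https://app.example/signup (submit the form)
```

Because only the last ten actions are kept, earlier prerequisites such as logging in may be missing from a report produced late in a long session.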

Basic Visual Analysis: Comparing Screenshots for UI Anomalies

The agent captures screenshots at key moments and performs basic pixel-level comparison to detect visual regressions and UI anomalies.
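Before involving image files, the comparison rule can be tried on plain RGB tuples. This is a simplified sketch of the same idea the analyzer uses (a pixel counts as changed when any channel differs by more than a tolerance); `diff_fraction` and the synthetic pixel lists are hypothetical.

```python
def diff_fraction(baseline: list[tuple], current: list[tuple],
                  channel_tolerance: int = 30) -> float:
    """Fraction of pixels where any RGB channel differs by more than the tolerance."""
    if len(baseline) != len(current) or not baseline:
        raise ValueError("images must be non-empty and the same size")
    changed = sum(
        1 for bp, cp in zip(baseline, current)
        if any(abs(b - c) > channel_tolerance for b, c in zip(bp, cp))
    )
    return changed / len(baseline)


white, red, off_white = (255, 255, 255), (255, 0, 0), (250, 250, 250)

baseline = [white] * 100
# 4 pixels turn red (a real change); 6 shift slightly (within tolerance).
current = [white] * 90 + [red] * 4 + [off_white] * 6

fraction = diff_fraction(baseline, current)
is_anomaly = fraction > 0.05  # 0.05 means "more than 5% of pixels changed"
print(fraction, is_anomaly)   # 0.04 False
```

The per-channel tolerance absorbs anti-aliasing and compression noise, while the overall threshold decides how much genuine change is needed before a report is raised.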

# agent/visual_analyzer.py
# Compares screenshots to detect visual regressions.
# Uses pixel-level diffing with configurable thresholds.

import os

from PIL import Image


class VisualAnalyzer:
    def __init__(self, threshold: float = 0.05, screenshot_dir: str = "screenshots"):
        # threshold is the fraction of differing pixels that triggers an alert
        # (0.05 means 5% of the pixels)
        self.threshold = threshold
        self.screenshot_dir = screenshot_dir
        self.baseline_screenshots = {}
        os.makedirs(screenshot_dir, exist_ok=True)

    def compare_screenshots(self, baseline_path: str, current_path: str) -> dict:
        # Compare two screenshots and return the diff percentage
        baseline = Image.open(baseline_path).convert('RGB')
        current = Image.open(current_path).convert('RGB')

        # Resize to same dimensions if needed
        if baseline.size != current.size:
            current = current.resize(baseline.size)

        # Pixel-by-pixel comparison
        baseline_pixels = list(baseline.getdata())
        current_pixels = list(current.getdata())
        total_pixels = len(baseline_pixels)
        diff_count = 0

        for bp, cp in zip(baseline_pixels, current_pixels):
            # Check if RGB difference exceeds tolerance per channel
            if any(abs(b - c) > 30 for b, c in zip(bp, cp)):
                diff_count += 1

        diff_percentage = diff_count / total_pixels
        is_anomaly = diff_percentage > self.threshold

        return {
            "diff_percentage": round(diff_percentage * 100, 2),
            "is_anomaly": is_anomaly,
            "total_pixels": total_pixels,
            "changed_pixels": diff_count,
            "baseline": baseline_path,
            "current": current_path
        }

    async def capture_and_compare(self, page, page_id: str) -> dict:
        # Captures current screenshot and compares with baseline if available
        current_path = os.path.join(self.screenshot_dir, f"{page_id}_current.png")
        await page.screenshot(path=current_path, full_page=True)

        baseline_path = os.path.join(self.screenshot_dir, f"{page_id}_baseline.png")
        if os.path.exists(baseline_path):
            result = self.compare_screenshots(baseline_path, current_path)
            return result

        # No baseline exists yet so save current as baseline
        os.rename(current_path, baseline_path)
        return {"is_anomaly": False, "message": "Baseline captured for future comparison"}

The Core Exploration Loop: Putting It All Together

Now we connect every component into the main exploration loop. This is the heart of the autonomous agent.

# agent/explorer.py
# The main exploration loop that orchestrates DOM reading, LLM decisions,
# action execution, memory updates, and bug detection.

import allure
from playwright.async_api import async_playwright

from agent.dom_reader import get_page_summary
from agent.llm_brain import decide_next_action
from agent.memory import ExplorationMemory
from agent.auth_handler import detect_login_page, perform_login
from agent.bug_reporter import BugReporter
from agent.visual_analyzer import VisualAnalyzer


class AutonomousExplorer:
    def __init__(self, start_url: str, max_steps: int = 200):
        self.start_url = start_url
        self.max_steps = max_steps
        self.memory = ExplorationMemory(max_total_actions=max_steps)
        self.bug_reporter = BugReporter()
        self.visual_analyzer = VisualAnalyzer()

    async def run(self):
        async with async_playwright() as p:
            browser = await p.chromium.launch(headless=True)
            context = await browser.new_context(
                viewport={"width": 1280, "height": 720},
                record_video_dir="reports/videos"
            )
            page = await context.new_page()

            # Register the console error capture as an init script so it is
            # re-installed on every navigation; a one-off evaluate() before
            # goto() would be discarded as soon as the page loads
            await page.add_init_script('''
                window.__capturedErrors = [];
                window.addEventListener('error', (e) => {
                    window.__capturedErrors.push(e.message);
                });
            ''')

            # Navigate to starting URL
            await page.goto(self.start_url, wait_until="networkidle")

            # Handle login if needed
            if await detect_login_page(page):
                await perform_login(page)

            step = 0
            while step < self.max_steps and not self.memory.is_exploration_complete():
                step += 1

                # 1. Read the current page
                page_summary = await get_page_summary(page)
                self.memory.record_visit(page.url)

                # 2. Visual analysis
                page_id = page.url.replace('/', '_').replace(':', '')[:80]
                visual_result = await self.visual_analyzer.capture_and_compare(page, page_id)
                if visual_result.get("is_anomaly"):
                    await self.bug_reporter.capture_bug(
                        page, self.memory,
                        title=f"Visual regression on {page.url}",
                        severity="medium",
                        description=f"Screenshot diff: {visual_result['diff_percentage']}% changed"
                    )

                # 3. Ask the LLM what to do next
                memory_context = self.memory.get_context_summary()
                decision = decide_next_action(page_summary, memory_context)

                # 4. Execute the decided action
                action = decision.get("action", "done")
                element_idx = decision.get("element_index")
                value = decision.get("value")
                reasoning = decision.get("reasoning", "")

                # Stop before executing or recording anything for "done"
                if action == "done":
                    break

                # Remember where the action started; a click may navigate away
                url_before = page.url

                with allure.step(f"Step {step}: {action} element [{element_idx}] - {reasoning}"):
                    try:
                        await self._execute_action(page, page_summary, action, element_idx, value)
                        self.memory.record_action(url_before, action, element_idx or 0, reasoning)
                    except Exception as e:
                        await self.bug_reporter.capture_bug(
                            page, self.memory,
                            title=f"Action failed: {action} on element {element_idx}",
                            severity="high",
                            description=str(e)
                        )

            await browser.close()

        return self.bug_reporter.generate_summary()

    async def _execute_action(self, page, page_summary, action, element_idx, value):
        # Maps LLM decisions to actual Playwright commands
        elements = page_summary['interactive_elements']

        def find_target() -> dict:
            # Look the element up by its recorded index rather than by list
            # position, so a gap between the two cannot select the wrong one
            for el in elements:
                if el['index'] == element_idx:
                    return el
            raise ValueError(f"No element with index {element_idx} on this page")

        if action == "click" and element_idx is not None:
            selector = self._build_selector(find_target())
            await page.click(selector, timeout=5000)
            await page.wait_for_load_state("networkidle", timeout=8000)

        elif action == "fill" and element_idx is not None and value:
            selector = self._build_selector(find_target())
            await page.fill(selector, value)

        elif action == "select" and element_idx is not None and value:
            # The system prompt offers "select", so map it to select_option
            selector = self._build_selector(find_target())
            await page.select_option(selector, value)

        elif action == "navigate" and value:
            await page.goto(value, wait_until="networkidle")

        elif action == "screenshot":
            await page.screenshot(path=f"screenshots/manual_step_{element_idx}.png")

    def _build_selector(self, element: dict) -> str:
        # Builds the most reliable selector for a given element
        if element.get('id'):
            return f"#{element['id']}"
        if element.get('name'):
            return f"[name='{element['name']}']"
        if element.get('aria_label'):
            return f"[aria-label='{element['aria_label']}']"
        if element.get('text') and element['tag'] in ('button', 'a'):
            return f"{element['tag']}:has-text('{element['text'][:40]}')"
        # Last resort: a positional guess that may match a different element
        return f"{element['tag']}:nth-of-type({element['index'] + 1})"

Generating Test Cases in Excel and Allure Reports

After exploration, the agent converts its action history into structured test cases — both as Excel files for stakeholders and as Allure reports for the engineering team.

# agent/excel_generator.py
# Converts the agent exploration history into structured Excel test cases.

import os

from openpyxl import Workbook
from openpyxl.styles import Font, PatternFill


def generate_test_cases(memory, output_path: str = "reports/generated-tests/test_cases.xlsx"):
    wb = Workbook()
    ws = wb.active
    ws.title = "Generated Test Cases"

    # Header row styling
    header_font = Font(bold=True, color="FFFFFF")
    header_fill = PatternFill(start_color="2F5496", end_color="2F5496", fill_type="solid")

    headers = ["TC ID", "Title", "Page URL", "Action", "Element", "Input Value",
               "Expected Result", "Reasoning", "Timestamp"]
    for col, header in enumerate(headers, 1):
        cell = ws.cell(row=1, column=col, value=header)
        cell.font = header_font
        cell.fill = header_fill

    # Populate rows from the agent action history
    for i, action in enumerate(memory.action_history, 1):
        ws.cell(row=i+1, column=1, value=f"TC-AUTO-{i:04d}")
        ws.cell(row=i+1, column=2, value=f"Verify {action['action']} on element {action['element_index']}")
        ws.cell(row=i+1, column=3, value=action['url'])
        ws.cell(row=i+1, column=4, value=action['action'])
        ws.cell(row=i+1, column=5, value=str(action['element_index']))
        # The memory layer does not store input values yet, so this is
        # "N/A" unless record_action is extended to keep them
        ws.cell(row=i+1, column=6, value=action.get('value', 'N/A'))
        ws.cell(row=i+1, column=7, value="Action completes without error")
        ws.cell(row=i+1, column=8, value=action['reasoning'])
        ws.cell(row=i+1, column=9, value=action['timestamp'])

    # Auto-adjust column widths for readability
    for col in ws.columns:
        max_length = max(len(str(cell.value or "")) for cell in col)
        ws.column_dimensions[col[0].column_letter].width = min(max_length + 2, 50)

    # Make sure the target directory exists before saving
    out_dir = os.path.dirname(output_path)
    if out_dir:
        os.makedirs(out_dir, exist_ok=True)
    wb.save(output_path)
    return output_path

For Allure reporting, the allure.step context manager in the exploration loop captures each action as a test step. The allure-pytest plugin records these steps only while pytest is running, so invoke the explorer from a pytest test, run pytest --alluredir=reports/allure-results, and then run allure serve reports/allure-results to see a full visual timeline of the exploration session.

Jenkins Pipeline Integration

To run the agent on a schedule in CI, here is a Jenkinsfile that executes the exploration and publishes the Allure report:

pipeline {
    agent any

    triggers {
        cron('H 2 * * *') // Run nightly during the 2 AM hour (H picks a stable minute)
    }

    environment {
        OLLAMA_HOST = 'http://localhost:11434'
    }

    stages {
        stage('Setup') {
            steps {
                sh 'pip install -r requirements.txt'
                sh 'playwright install chromium'
                sh 'ollama pull llama3'
            }
        }

        stage('Explore') {
            steps {
                sh 'python run_agent.py --url https://your-app.com --max-steps 150'
            }
        }

        stage('Reports') {
            steps {
                allure includeProperties: false, results: [[path: 'reports/allure-results']]
                archiveArtifacts artifacts: 'reports/**/*', fingerprint: true
            }
        }
    }

    post {
        always {
            publishHTML([reportDir: 'reports/bugs', reportFiles: '*.json', reportName: 'Bug Reports'])
        }
    }
}

Limitations: When Autonomous Agents Are NOT the Right Choice

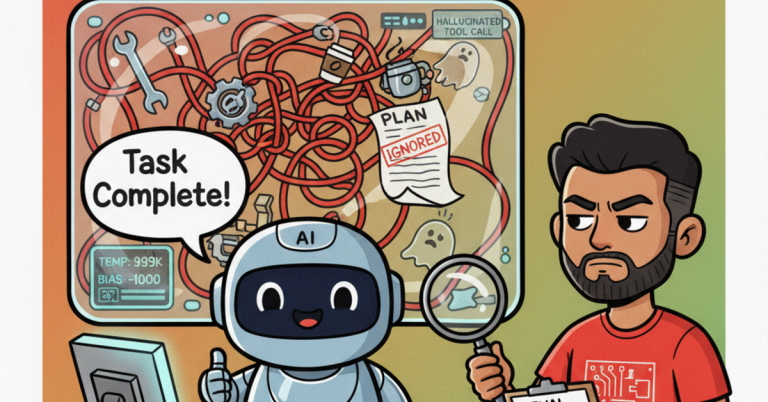

I want to be honest about what this approach cannot do, because the hype cycle around AI testing is creating unrealistic expectations.

- Compliance and regulatory testing: If you need to prove specific test cases were executed in a specific order with specific data, an autonomous agent is the wrong tool. Regulatory audits require deterministic, reproducible test runs.

- Performance testing: Autonomous exploration is inherently single-threaded and non-deterministic. Use JMeter, k6, or Locust for load and performance testing.

- Pixel-perfect UI validation: The visual analysis in this agent is basic. For production-grade visual regression, use dedicated tools like Applitools or Percy.

- Business logic validation: The agent does not understand your business rules. It can find crashes, errors, and UI anomalies, but it cannot verify that a 15% discount applies to orders over $200 on Tuesdays unless you explicitly encode that expectation.

- LLM hallucination risk: Sometimes the LLM will decide on an action that does not make sense. The memory system mitigates this, but it does not eliminate it. Always review generated bug reports manually before filing them.

- Cost and infrastructure: Running Ollama locally requires a GPU with at least 8GB VRAM for acceptable inference speed. Cloud LLMs add API costs. The infrastructure overhead is real.

Autonomous agents work best as a complement to your existing test suite, not a replacement. Use them for exploratory coverage of areas where you do not have scripted tests, for discovering unknown unknowns, and for smoke testing after large refactors. Keep your deterministic regression suite for critical business flows. For a broader perspective on building automation frameworks intelligently, see the vibe coding automation framework approach.

High-Level Architecture

Here is how all the components connect in the system:

+-------------------+      +------------------+      +------------------+
|    Playwright     |      |    Ollama LLM    |      |   Memory Layer   |
|    (Browser)      |<---->|    (Decision)    |<---->|  (State Track)   |
+-------------------+      +------------------+      +------------------+
          |                         |                         |
          v                         v                         v
+-------------------+      +------------------+      +------------------+
|    DOM Reader     |      |   Bug Reporter   |      |      Visual      |
|  (Page Context)   |      |    (Evidence)    |      |     Analyzer     |
+-------------------+      +------------------+      +------------------+
          |                         |                         |
          +-------------------------+-------------------------+
                                    |
                                    v
                    +-------------------------------+
                    |    Reports: Allure + Excel    |
                    |    Jenkins CI/CD Pipeline     |
                    +-------------------------------+

The flow is cyclical. Playwright reads the page, the DOM Reader simplifies it, the LLM decides the next action, Playwright executes it, memory records it, and the cycle repeats. Bug Reporter and Visual Analyzer run as side effects during each cycle, capturing evidence when anomalies appear.

Frequently Asked Questions

1. Can this autonomous agent completely replace manual exploratory testing?

No. The agent excels at systematic coverage — clicking through every link, testing every form field, exploring navigation paths. But it lacks the intuitive understanding that experienced testers bring. A human tester notices when something “feels wrong” even if no error is thrown. The agent cannot replicate that judgment. Use the agent to cover breadth, and human testers for depth on critical flows.

2. How does the agent handle dynamic content like modals, tooltips, and infinite scroll?

The DOM Reader re-scans the page after every action, so dynamically appearing elements like modals and tooltips are captured in the next iteration. For infinite scroll, you can add a scroll action to the LLM’s action vocabulary. The LLM can then choose to scroll when it sees fewer interactive elements than expected. However, highly dynamic SPAs with frequent DOM mutations can confuse the element indexing. Adding a small delay after actions and using more robust selectors (id, name, aria-label) mitigates this.

3. What is the cost of running this locally with Ollama vs. using a cloud LLM API?

With Ollama running Llama 3 locally, the infrastructure cost is the hardware itself — a machine with an NVIDIA GPU (8GB+ VRAM) or an Apple Silicon Mac with 16GB+ RAM. Inference is free after that. With a cloud API like OpenAI GPT-4o, expect to spend roughly $0.02-0.05 per exploration step (prompt + response tokens). A 200-step exploration session would cost $4-10 via API. For nightly CI runs, the local approach pays for itself within weeks.
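The arithmetic behind those figures is straightforward to reproduce. The per-step prices come from the estimate above; the 30 nightly runs per month is an added assumption for illustration.

```python
def session_cost(steps: int, cost_per_step: float) -> float:
    """Estimated API cost in dollars for one exploration session."""
    return round(steps * cost_per_step, 2)


low = session_cost(200, 0.02)
high = session_cost(200, 0.05)
print(f"Per session: ${low:.2f} to ${high:.2f}")  # Per session: $4.00 to $10.00

# Assumption: one run per night, 30 nights per month.
runs_per_month = 30
print(f"Per month: ${low * runs_per_month:.2f} to ${high * runs_per_month:.2f}")
# Per month: $120.00 to $300.00
```

Comparing that monthly range with the price of suitable hardware gives a rough break-even estimate for the local setup.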

4. How do I prevent the agent from performing destructive actions like deleting data?

Add explicit constraints to the LLM system prompt: list forbidden actions such as “delete,” “remove,” “drop,” and “reset.” You can also implement a blocklist in the execution layer that rejects any action targeting elements with dangerous labels. Additionally, always run the agent against a staging environment or a dedicated test instance — never against production. The memory layer can also be configured to skip elements matching certain CSS classes or data attributes.
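An execution-layer blocklist can be a small check that runs before any click. The sketch below is one possible shape, with hypothetical names (`FORBIDDEN`, `is_action_allowed`); it looks for the forbidden words as whole words in an element's text, aria-label, name, and id.

```python
import re

# Whole-word match, case-insensitive, for the labels named above.
FORBIDDEN = re.compile(r"\b(delete|remove|drop|reset)\b", re.IGNORECASE)


def is_action_allowed(element: dict) -> bool:
    """Reject elements whose visible label or identifiers look destructive."""
    label = " ".join(
        str(element.get(key) or "")
        for key in ("text", "aria_label", "name", "id")
    )
    return FORBIDDEN.search(label) is None


print(is_action_allowed({"text": "Save changes"}))      # True
print(is_action_allowed({"text": "Delete account"}))    # False
print(is_action_allowed({"id": "reset-password"}))      # False
print(is_action_allowed({"text": "Dropdown menu"}))     # True
```

Whole-word matching avoids blocking harmless labels such as "Dropdown", but it also means identifiers joined with underscores (for example btn_delete) are not caught, so treat this as one layer of defense alongside a staging environment.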

5. Can this approach work with mobile web applications or native mobile apps?

For mobile web applications, yes — Playwright supports mobile emulation out of the box. Set the browser context with a mobile viewport and user agent, and the agent will explore the mobile version of your site. For native mobile apps, you would need to replace Playwright with Appium and modify the DOM Reader to use Appium’s element inspection instead of JavaScript evaluation. The LLM decision layer and memory system remain unchanged, which demonstrates the value of the modular architecture.

Conclusion: The Agent Is Not the Future — It Is the Present

The autonomous testing agent described in this article is not a research prototype. Every component is built with production-ready libraries, runs in standard CI pipelines, and generates actionable output. Anant Jain’s team has been running a similar architecture for over a quarter, and the results speak for themselves: broader coverage, faster bug detection, and a fundamentally different relationship between QA teams and the applications they test.

The critical insight is that the LLM is not doing the testing. Playwright is doing the testing. The LLM is making decisions about what to test next. That separation of concerns is what makes the architecture robust. If the LLM makes a bad decision in a sandboxed test environment, the worst that usually happens is a wasted step. The browser automation, evidence collection, and reporting all work regardless of decision quality.

Start small. Point the agent at a single page of your application. Watch what it explores. Review the bug reports. Tune the system prompt. Gradually expand the scope. Within a few iterations, you will have a testing capability that no amount of scripted automation could replicate.

The era of self-directed test exploration has arrived. The question is not whether autonomous agents will become part of your testing strategy. The question is how quickly you will adopt them.