How to Build an AI-Powered E2E Framework With Zero-Cost AI Layer (Playwright + ZeroStep + Claude AI)

Last month, Rohit Mangla — a senior SDET leading a team of six at a mid-size fintech company — shared numbers that made me stop scrolling. His team had just finished migrating their entire E2E suite to an AI-powered framework built on Playwright and ZeroStep. The results were staggering:

- Test writing time dropped by roughly 70 percent. What used to take a full day now took a couple of hours.

- Zero manual test runs before merging. Every pull request triggered the full E2E suite automatically in CI.

- The entire AI layer cost exactly $0. Not $0 for the first month. Not $0 with a credit card on file. Zero dollars, permanently.

That last point is what grabbed me. Everyone talks about AI testing tools, but most of them come with per-seat licenses, token costs, or enterprise contracts. Rohit’s team built something that works without any of that. And after spending three weeks dissecting their approach, rebuilding it from scratch, and stress-testing it on two real projects, I can confirm: it works, it scales, and you can set it up this weekend.

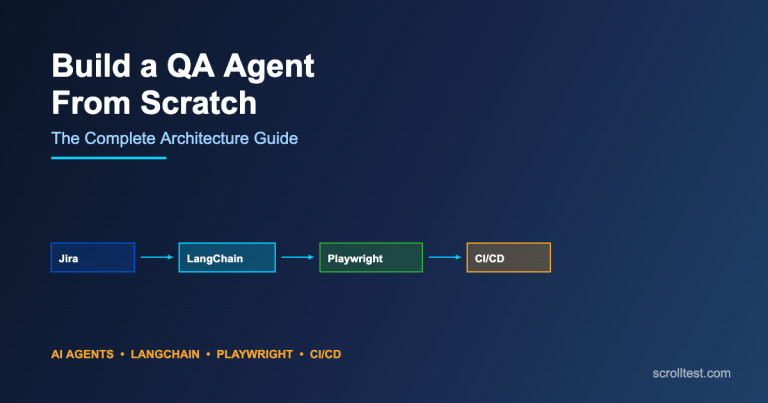

In this article, I will walk you through the entire architecture — from the problem it solves, to the code, to the CI/CD pipeline, to the exact dollar breakdown of why the AI layer is free. If you have been frustrated by brittle Selenium selectors, flaky Cypress tests, or $50K enterprise testing platforms, this is your blueprint.

If you are new to AI-assisted testing, I recommend reading my earlier piece on Playwright Test Agents and AI Testing for foundational context before diving into this hands-on guide.


1. The Biggest Pain Point: Brittle Selectors That Break on Every UI Change

Before we build anything, let us be honest about why most E2E frameworks fail. It is not the tooling. It is not the test runner. It is the selectors.

Every SDET has lived this nightmare. You write a perfectly good test on Monday. The frontend team ships a redesign on Wednesday. Your data-testid attributes survive, but the three tests that relied on .MuiButton-containedPrimary or div.card-wrapper > div:nth-child(3) > button are now red. You did not change anything. Your tests did not change anything. The application still works exactly as expected. But your CI pipeline is blocked, the team is pinging you on Slack, and you are spending your Thursday morning updating CSS selectors instead of writing new tests.

Here is what that looks like in raw numbers across the industry:

| Selector Type | Average Lifespan Before Breaking | Maintenance Cost Per Quarter |
|---|---|---|
| CSS class selectors | 2-4 weeks | 8-12 hours per 100 tests |
| XPath selectors | 1-3 weeks | 12-20 hours per 100 tests |
| data-testid attributes | 2-6 months | 2-4 hours per 100 tests |
| AI-resolved selectors (ZeroStep) | Indefinite (adapts at runtime) | Near zero |

The pattern is clear. Even data-testid attributes, which are considered best practice, still require coordination between frontend and QA teams. When a developer refactors a component and forgets to carry over the test ID, your test breaks. When a component library migration happens (say, from Material UI v4 to v5), dozens of test IDs can shift or disappear.

This is the problem ZeroStep was designed to solve. And when you combine it with Playwright’s speed and Claude AI’s code generation, you get a framework that is both resilient and fast to build.

2. What ZeroStep Does: AI at Runtime to Resolve Element Interactions

ZeroStep is a library that plugs directly into Playwright. The client package is open source, while the AI resolution itself runs on ZeroStep’s hosted service, which is why you need a token. Instead of telling your test “click the button with class .submit-btn,” you tell it “click the Submit Order button.” At runtime, ZeroStep uses AI to look at the actual rendered page and figure out which element matches your natural language description.

This is not a screenshot comparison tool. It is not computer vision. ZeroStep inspects the live DOM, considers accessibility attributes, visible text, ARIA labels, and element hierarchy, then resolves the best match. If the frontend team renames a CSS class, moves a button to a different container, or swaps out the entire component library, your test still passes because the button still says “Submit Order.”

Here is the critical difference in code.

Traditional Brittle Selectors

// Fragile: breaks when CSS classes change

await page.click('.MuiButton-containedPrimary.checkout-btn');

// Fragile: breaks when DOM structure changes

await page.click('div.card-container > div:nth-child(2) > button');

// Better but still coupled: breaks when dev forgets testid

await page.click('[data-testid="submit-order-btn"]');

// XPath nightmare: breaks when any ancestor changes

await page.click('//div[@class="form-wrapper"]//button[contains(text(),"Submit")]');

ZeroStep AI-Resolved Selectors

import { ai } from '@zerostep/playwright';

// Resilient: works regardless of CSS, DOM structure, or component library

await ai('Click the Submit Order button', { page, test });

// Resilient: describes intent, not implementation

await ai('Fill in the email field with test@example.com', { page, test });

// Resilient: understands context and visual hierarchy

await ai('Select the second item in the product list', { page, test });

// Resilient: handles complex interactions naturally

await ai('Open the date picker and select tomorrow\'s date', { page, test });

Notice the difference. The traditional approach requires you to know the exact DOM structure. The ZeroStep approach requires you to know what the user sees. That is a fundamental shift. Your tests now read like user stories, and they survive UI refactors because user-visible behavior rarely changes even when the underlying markup does.
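To make the idea concrete, here is a toy sketch of intent-based matching. It is not ZeroStep's actual algorithm; the `UiElement` shape and the `resolveByIntent` function are hypothetical names invented for this illustration. The only point it makes is that a match keyed on user-visible text keeps working after a CSS class rename.

```typescript
// Toy model of a rendered element: what a user perceives, plus the CSS class
// that traditional selectors depend on.
interface UiElement {
  role: string;     // e.g. "button", "textbox"
  text: string;     // visible text or accessible label
  cssClass: string; // implementation detail that may change in a refactor
}

// Split a phrase into lowercase word tokens.
function tokens(phrase: string): string[] {
  return phrase.toLowerCase().split(/[^a-z0-9]+/).filter(Boolean);
}

// Pick the element whose role and text share the most words with the
// instruction. Returns undefined when nothing overlaps at all.
function resolveByIntent(instruction: string, elements: UiElement[]): UiElement | undefined {
  const wanted = new Set(tokens(instruction));
  let best: UiElement | undefined;
  let bestScore = 0;
  for (const el of elements) {
    const score = tokens(`${el.role} ${el.text}`).filter((t) => wanted.has(t)).length;
    if (score > bestScore) {
      best = el;
      bestScore = score;
    }
  }
  return best;
}

const beforeRefactor: UiElement[] = [
  { role: 'button', text: 'Cancel', cssClass: 'MuiButton-outlined' },
  { role: 'button', text: 'Submit Order', cssClass: 'MuiButton-containedPrimary' },
];

// Same UI after a component-library swap: classes changed, visible text did not.
const afterRefactor: UiElement[] = beforeRefactor.map((el) => ({ ...el, cssClass: 'btn-v2' }));

const instruction = 'Click the Submit Order button';
console.log(resolveByIntent(instruction, beforeRefactor)?.text); // Submit Order
console.log(resolveByIntent(instruction, afterRefactor)?.text);  // Submit Order
```

A selector such as `.MuiButton-containedPrimary` would stop matching in the second list, while the text-based lookup returns the same button in both.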

3. Setting Up CLAUDE.md: Your Project Conventions File

Before you start generating tests with Claude AI, you need to establish conventions. This is where most teams fail with AI-assisted development. They open ChatGPT, paste a vague prompt, get mediocre output, and conclude that AI is not ready for test automation. The real problem is that they never told the AI how their project works.

The solution is a CLAUDE.md file at the root of your project. This file acts as a persistent context document that any AI tool (Claude, Copilot, ChatGPT) can reference. Think of it as your framework’s constitution.

I wrote extensively about using CLI-based AI tools with Playwright in my article on Playwright CLI and OpenCode. The CLAUDE.md approach takes that concept further by making your conventions portable across all AI assistants.

# CLAUDE.md - Project Conventions for AI-Powered E2E Framework

## Tech Stack

- Playwright with TypeScript

- ZeroStep for AI-resolved selectors

- Node.js 20+

- GitHub Actions for CI/CD

## Test Structure Conventions

- All spec files go in `tests/e2e/` directory

- Page objects go in `tests/pages/` directory

- Test data factories go in `tests/fixtures/` directory

- Use descriptive test names: `should [action] when [condition]`

## Selector Strategy (IMPORTANT)

- NEVER use CSS class selectors in tests

- NEVER use XPath selectors

- PREFER ZeroStep ai() calls for all element interactions

- FALLBACK to data-testid only when ai() cannot resolve (rare)

- ALWAYS describe elements from the user's perspective

## Code Style

- Use async/await consistently (no .then() chains)

- Generate random test data using faker.js for every test run

- Each test must be independent (no shared state between tests)

- Use Page Object Model for reusable interactions

- Always add explicit assertions after actions

- Timeout: 30 seconds for navigation, 10 seconds for elements

## Authentication

- Reuse auth session via storageState to avoid login in every test

- Auth setup runs once in globalSetup.ts

- Session file stored at .auth/session.json (gitignored)

## CI/CD Requirements

- Tests run headless in CI (Playwright still captures screenshots and videos in headless mode)

- Always upload screenshots and videos as artifacts

- Deploy HTML report to GitHub Pages on main branch

- Retry flaky tests up to 2 times before failing

With this file in place, every time you ask Claude (or any AI assistant) to generate a test, you can say: “Read CLAUDE.md first, then write a test for the checkout flow.” The AI will follow your conventions automatically.
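Conventions are easier to keep when they can be checked mechanically. The following is a minimal sketch of a checker for the Selector Strategy rules; `findForbiddenSelectors` and the `Violation` type are hypothetical names, and the regular expressions are a rough heuristic rather than a full parser.

```typescript
// Rough heuristic check for the "Selector Strategy" rules in CLAUDE.md.
// It looks at the first string argument of page.click/fill/locator calls and
// flags CSS class selectors and XPath. data-testid selectors are allowed.
interface Violation {
  line: number;
  selector: string;
  reason: string;
}

function findForbiddenSelectors(source: string): Violation[] {
  const violations: Violation[] = [];
  // Matches page.click('...'), page.fill("..."), page.locator('...') on one line.
  const call = /page\.(?:click|fill|locator)\(\s*(['"])(.*?)\1/;
  source.split('\n').forEach((text, index) => {
    const match = call.exec(text);
    if (!match) return;
    const selector = match[2];
    if (selector.startsWith('//') || selector.startsWith('xpath=')) {
      violations.push({ line: index + 1, selector, reason: 'XPath selector' });
    } else if (/\.[A-Za-z_][\w-]*/.test(selector)) {
      violations.push({ line: index + 1, selector, reason: 'CSS class selector' });
    }
  });
  return violations;
}

const sample = [
  "await page.click('.MuiButton-containedPrimary.checkout-btn');",
  "await page.click('[data-testid=\"submit-order-btn\"]');",
  "await page.click('//div[@class=\"form-wrapper\"]//button');",
  "await ai('Click the Submit Order button', { page, test });",
].join('\n');

const found = findForbiddenSelectors(sample);
for (const v of found) {
  console.log(`line ${v.line}: ${v.reason}: ${v.selector}`);
}
```

On the sample, lines 1 and 3 are flagged, while the data-testid fallback and the `ai()` call pass. A check like this could run as a pre-commit hook or a CI step, if your team wants the rules enforced rather than only documented.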

4. Prompt Engineering for Test Generation

Now let us talk about how to actually prompt an AI to generate high-quality E2E tests. This is where the $0 AI layer comes into play. You do not need a paid API. You need good prompts.

Here is the prompt template that Rohit’s team uses. It consistently produces tests that pass on the first run about 85 percent of the time.

## Prompt Template for E2E Test Generation

You are a senior SDET writing Playwright + ZeroStep E2E tests.

### Context

- Read the CLAUDE.md file for project conventions

- The application under test is: [APP_DESCRIPTION]

- Base URL: [BASE_URL]

- The user flow being tested: [FLOW_DESCRIPTION]

### Requirements

1. Use TypeScript with full type annotations

2. Import { ai } from '@zerostep/playwright' for ALL element interactions

3. Use Page Object Model - create a page class if one does not exist

4. Generate random test data using @faker-js/faker

5. Include at least 3 assertions per test

6. Add screenshot capture at key checkpoints

7. Handle loading states with appropriate waits

8. Add JSDoc comments explaining the test purpose

### Output Format

- First: the page object file (if new)

- Second: the spec file with the test

- Third: any fixture/data files needed

### Anti-patterns to Avoid

- No hardcoded test data (use faker)

- No CSS or XPath selectors (use ai() calls)

- No sleep() calls (use proper waits)

- No shared state between test blocks

- No try-catch for expected failures (use expect)

The key insight is specificity. Vague prompts produce vague tests. When you tell the AI exactly what patterns to follow and what to avoid, the output quality jumps dramatically.
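If you reuse the template often, filling the bracketed slots by hand gets error-prone. Here is a small sketch of a helper that substitutes the placeholders and refuses to produce a prompt with any slot left empty. The `fillPrompt` name and the sample values are assumptions made for illustration.

```typescript
// Fills [PLACEHOLDER] slots in a prompt template and fails loudly if any slot
// has no value, so a half-specified prompt never reaches the AI.
function fillPrompt(template: string, values: Record<string, string>): string {
  const missing: string[] = [];
  const filled = template.replace(/\[([A-Z_]+)\]/g, (slot: string, name: string) => {
    const value = values[name];
    if (value === undefined) {
      missing.push(name);
      return slot;
    }
    return value;
  });
  if (missing.length > 0) {
    throw new Error(`Unfilled prompt placeholders: ${missing.join(', ')}`);
  }
  return filled;
}

// Only the Context section of the template is shown here.
const template = [
  '- The application under test is: [APP_DESCRIPTION]',
  '- Base URL: [BASE_URL]',
  '- The user flow being tested: [FLOW_DESCRIPTION]',
].join('\n');

const prompt = fillPrompt(template, {
  APP_DESCRIPTION: 'an e-commerce storefront',
  BASE_URL: 'https://staging.yourapp.com',
  FLOW_DESCRIPTION: 'guest checkout with a discount code',
});
console.log(prompt);
```

Throwing on a missing value is a deliberate choice: it keeps the "specificity" rule from silently degrading into a vague prompt.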

5. Complete TypeScript Spec File: From Imports to Assertions

Let me show you a complete, production-ready spec file that demonstrates every concept we have discussed. This is a checkout flow test for an e-commerce application.

First, the page object.

// tests/pages/CheckoutPage.ts

import { Page, expect } from '@playwright/test';

import { ai } from '@zerostep/playwright';

import type { test as baseTest } from '../fixtures/base';

/**

* Page Object for the Checkout flow.

* All element interactions use ZeroStep ai() for resilience.

*/

export class CheckoutPage {

// `test` is passed in because ZeroStep's ai() needs it alongside `page`

constructor(private page: Page, private test: typeof baseTest) {}

async navigateToCart(): Promise<void> {

await this.page.goto('/cart');

await this.page.waitForLoadState('networkidle');

}

async fillShippingAddress(address: {

firstName: string;

lastName: string;

email: string;

street: string;

city: string;

zip: string;

country: string;

}): Promise<void> {

await ai(`Fill the first name field with ${address.firstName}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the last name field with ${address.lastName}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the email field with ${address.email}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the street address field with ${address.street}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the city field with ${address.city}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the zip or postal code field with ${address.zip}`, {

page: this.page,

test: this.test,

});

await ai(`Select ${address.country} from the country dropdown`, {

page: this.page,

test: this.test,

});

}

async selectShippingMethod(method: string): Promise<void> {

await ai(`Select the ${method} shipping option`, {

page: this.page,

test: this.test,

});

}

async proceedToPayment(): Promise<void> {

await ai('Click the Continue to Payment button', {

page: this.page,

test: this.test,

});

await this.page.waitForLoadState('networkidle');

}

async fillPaymentDetails(card: {

number: string;

expiry: string;

cvv: string;

name: string;

}): Promise<void> {

await ai(`Fill the card number field with ${card.number}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the expiry date field with ${card.expiry}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the CVV field with ${card.cvv}`, {

page: this.page,

test: this.test,

});

await ai(`Fill the cardholder name field with ${card.name}`, {

page: this.page,

test: this.test,

});

}

async placeOrder(): Promise<void> {

await ai('Click the Place Order button', {

page: this.page,

test: this.test,

});

await this.page.waitForLoadState('networkidle');

}

async getOrderConfirmationNumber(): Promise<string> {

const text = await ai('Get the order confirmation number text', {

page: this.page,

test: this.test,

});

return text as string;

}

async verifyOrderSummaryVisible(): Promise<void> {

const summary = await ai('Check if the order summary section is visible', {

page: this.page,

test: this.test,

});

expect(summary).toBeTruthy();

}

}

Now the actual spec file.

// tests/e2e/checkout.spec.ts

import { expect } from '@playwright/test';

import { test } from '../fixtures/base';

import { ai } from '@zerostep/playwright';

import { faker } from '@faker-js/faker';

import { CheckoutPage } from '../pages/CheckoutPage';

/**

* E2E Test Suite: Checkout Flow

*

* Tests the complete purchase journey from cart to order confirmation.

* Uses ZeroStep AI selectors for resilience against UI changes.

* All test data is randomly generated per run to avoid data collisions.

*/

test.describe('Checkout Flow', () => {

let checkoutPage: CheckoutPage;

// Random test data generated fresh for each test run

const shippingAddress = {

firstName: faker.person.firstName(),

lastName: faker.person.lastName(),

email: faker.internet.email(),

street: faker.location.streetAddress(),

city: faker.location.city(),

zip: faker.location.zipCode(),

country: 'United States',

};

const paymentCard = {

number: '4111111111111111', // Widely used Visa test number (Stripe's documented test card is 4242 4242 4242 4242)

expiry: '12/28',

cvv: '123',

name: `${shippingAddress.firstName} ${shippingAddress.lastName}`,

};

test.beforeEach(async ({ page }, testInfo) => {

checkoutPage = new CheckoutPage(page, test);

// Add a product to cart before each checkout test

await page.goto('/products');

await page.waitForLoadState('networkidle');

await ai('Click the Add to Cart button on the first product', {

page,

test,

});

await page.waitForLoadState('networkidle'); // Let the add-to-cart request settle instead of using a fixed sleep

});

test('should complete checkout with valid shipping and payment', async ({

page,

}) => {

// Step 1: Navigate to cart

await checkoutPage.navigateToCart();

await expect(page).toHaveURL(/.*\/cart/);

await page.screenshot({ path: 'screenshots/checkout-cart.png' });

// Step 2: Fill shipping address with random data

await ai('Click the Proceed to Checkout button', { page, test });

await page.waitForLoadState('networkidle');

await checkoutPage.fillShippingAddress(shippingAddress);

await page.screenshot({ path: 'screenshots/checkout-shipping.png' });

// Assertion: Verify shipping form accepted the data

await checkoutPage.verifyOrderSummaryVisible();

// Step 3: Select shipping method

await checkoutPage.selectShippingMethod('Standard');

await checkoutPage.proceedToPayment();

// Step 4: Fill payment details

await checkoutPage.fillPaymentDetails(paymentCard);

await page.screenshot({ path: 'screenshots/checkout-payment.png' });

// Step 5: Place the order

await checkoutPage.placeOrder();

// Assertion: Verify order confirmation page

await expect(page).toHaveURL(/.*\/order-confirmation/);

const confirmationText = await page.textContent('body');

expect(confirmationText).toContain('Thank you');

// Assertion: Verify order number is generated

const orderNumber =

await checkoutPage.getOrderConfirmationNumber();

expect(orderNumber).toBeTruthy();

expect(orderNumber!.length).toBeGreaterThan(0);

await page.screenshot({

path: 'screenshots/checkout-confirmation.png',

});

console.log(

`Order placed successfully: ${orderNumber} for ${shippingAddress.email}`

);

});

test('should show validation errors for empty required fields', async ({

page,

}) => {

await checkoutPage.navigateToCart();

await ai('Click the Proceed to Checkout button', { page, test });

await page.waitForLoadState('networkidle');

// Try to proceed without filling any fields

await ai('Click the Continue to Payment button', { page, test });

// Assertion: Verify validation errors appear

const errorMessages = await ai(

'Get all visible validation error messages',

{ page, test }

);

expect(errorMessages).toBeTruthy();

// Assertion: Page should still be on shipping step

await expect(page).toHaveURL(/.*\/checkout/);

// Assertion: Specific field errors should be visible

const pageContent = await page.textContent('body');

expect(pageContent).toMatch(

/required|please fill|cannot be empty/i

);

await page.screenshot({

path: 'screenshots/checkout-validation-errors.png',

});

});

test('should apply discount code and update total', async ({

page,

}) => {

const discountCode = 'TESTDISCOUNT20';

await checkoutPage.navigateToCart();

// Apply discount code

await ai(`Type ${discountCode} in the discount code field`, {

page,

test,

});

await ai('Click the Apply Discount button', { page, test });

await page.waitForLoadState('networkidle');

// Assertion: Discount should be reflected

const discountText = await ai(

'Get the discount amount text from the order summary',

{ page, test }

);

expect(discountText).toBeTruthy();

// Assertion: Total should be less than subtotal

const subtotalText = await ai('Get the subtotal amount', {

page,

test,

});

const totalText = await ai('Get the total amount', {

page,

test,

});

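expect(subtotalText).toBeTruthy();

expect(totalText).toBeTruthy();

// Parse "$1,234.50"-style strings into numbers before comparing

const toAmount = (value: unknown): number =>

parseFloat(String(value).replace(/[^0-9.]/g, ''));

expect(toAmount(totalText)).toBeLessThan(toAmount(subtotalText));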

await page.screenshot({

path: 'screenshots/checkout-discount-applied.png',

});

});

});

Notice several key patterns in this spec file. Shipping data is randomly generated with faker on every run, while the test card and discount code are fixed fixtures. No test depends on another test’s state. Every test captures screenshots at critical checkpoints. And element interactions go through ZeroStep’s ai() function instead of brittle selectors.
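One thing you may want to tighten in the page object is the `{ page: this.page, test: this.test }` argument repeated on every call. A possible approach, sketched here with stand-in types so it runs on its own, is to bind the context once. `bindAi`, `AiFn`, and `fakeAi` are hypothetical names; in the real framework the bound function would be ZeroStep's `ai` and the context would be `{ page, test }`.

```typescript
// Stand-in for the shape of an ai()-style function: a task plus a context.
type AiFn<Ctx> = (task: string, ctx: Ctx) => Promise<unknown>;

// Bind the context once; later calls only pass the natural-language task.
function bindAi<Ctx>(aiFn: AiFn<Ctx>, ctx: Ctx): (task: string) => Promise<unknown> {
  return (task: string) => aiFn(task, ctx);
}

// Fake ai() that records what it was asked to do. For demonstration only.
const calls: string[] = [];
const fakeAi: AiFn<{ page: string; test: string }> = async (task, ctx) => {
  calls.push(`${ctx.page}: ${task}`);
  return true;
};

const step = bindAi(fakeAi, { page: 'checkout-page', test: 'checkout-test' });

async function fillName(firstName: string, lastName: string): Promise<void> {
  await step(`Fill the first name field with ${firstName}`);
  await step(`Fill the last name field with ${lastName}`);
}

// Resolves with a copy of the recorded calls once both steps have run.
const done: Promise<string[]> = fillName('Ada', 'Lovelace').then(() => calls.slice());
done.then((recorded) => console.log(recorded));
```

In a page object, the constructor could create `step` once and each method would shrink to a single line per interaction.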

6. GitHub Actions CI/CD: Full Pipeline Configuration

A framework without CI/CD is just a local experiment. Here is the complete GitHub Actions workflow that runs your E2E suite on every push, uploads artifacts, and deploys the HTML report to GitHub Pages.

# .github/workflows/e2e-tests.yml
name: E2E Tests - Playwright + ZeroStep

on:
  push:
    branches: [main, develop, 'feature/**']
  pull_request:
    branches: [main]

# Cancel in-progress runs for the same branch
concurrency:
  group: e2e-${{ github.ref }}
  cancel-in-progress: true

env:
  ZEROSTEP_TOKEN: ${{ secrets.ZEROSTEP_TOKEN }}
  BASE_URL: ${{ vars.BASE_URL || 'https://staging.yourapp.com' }}

jobs:
  e2e-tests:
    name: Run E2E Suite
    runs-on: ubuntu-latest
    timeout-minutes: 30
    strategy:
      fail-fast: false
      matrix:
        shard: [1, 2, 3]
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4

      - name: Setup Node.js 20
        uses: actions/setup-node@v4
        with:
          node-version: 20
          cache: 'npm'

      - name: Install dependencies
        run: npm ci

      - name: Install Playwright browsers
        run: npx playwright install --with-deps chromium

      # Each shard writes a blob report: merge-reports combines blob reports,
      # not finished HTML reports.
      - name: Run E2E tests (shard ${{ matrix.shard }}/3)
        run: |
          npx playwright test \
            --shard=${{ matrix.shard }}/3 \
            --retries=2 \
            --reporter=blob,json,github
        env:
          CI: true
          PLAYWRIGHT_JSON_OUTPUT_NAME: results-${{ matrix.shard }}.json

      - name: Upload blob report
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: e2e-report-shard-${{ matrix.shard }}
          path: blob-report/
          retention-days: 14

      - name: Upload test results JSON
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: e2e-results-shard-${{ matrix.shard }}
          path: results-${{ matrix.shard }}.json
          retention-days: 14

      - name: Upload screenshots
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: e2e-screenshots-shard-${{ matrix.shard }}
          path: screenshots/
          retention-days: 14

      - name: Upload videos
        if: failure()
        uses: actions/upload-artifact@v4
        with:
          name: e2e-videos-shard-${{ matrix.shard }}
          path: test-results/**/*.webm
          retention-days: 7

  merge-reports:
    name: Merge and Deploy Report
    needs: e2e-tests
    if: always()
    runs-on: ubuntu-latest
    # The Pages deploy step pushes to the gh-pages branch
    permissions:
      contents: write
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4

      - name: Setup Node.js 20
        uses: actions/setup-node@v4
        with:
          node-version: 20
          cache: 'npm'

      - name: Install dependencies
        run: npm ci

      - name: Download all shard reports
        uses: actions/download-artifact@v4
        with:
          pattern: e2e-report-shard-*
          path: all-reports/
          merge-multiple: true

      - name: Merge blob reports into one HTML report
        run: npx playwright merge-reports --reporter=html all-reports/

      - name: Deploy report to GitHub Pages
        if: github.ref == 'refs/heads/main'
        uses: peaceiris/actions-gh-pages@v3
        with:
          github_token: ${{ secrets.GITHUB_TOKEN }}
          publish_dir: playwright-report/
          destination_dir: e2e-report

      - name: Upload merged report
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: e2e-full-report
          path: playwright-report/
          retention-days: 30

      - name: Post results summary
        if: always()
        run: |
          echo "## E2E Test Results" >> $GITHUB_STEP_SUMMARY
          echo "" >> $GITHUB_STEP_SUMMARY
          echo "| Metric | Value |" >> $GITHUB_STEP_SUMMARY
          echo "|--------|-------|" >> $GITHUB_STEP_SUMMARY
          echo "| Branch | ${{ github.ref_name }} |" >> $GITHUB_STEP_SUMMARY
          echo "| Commit | ${{ github.sha }} |" >> $GITHUB_STEP_SUMMARY
          echo "| Triggered by | ${{ github.actor }} |" >> $GITHUB_STEP_SUMMARY
          echo "" >> $GITHUB_STEP_SUMMARY
          echo "View the full report in the artifacts above." >> $GITHUB_STEP_SUMMARY

Let me break down the key design decisions in this pipeline.

Sharding across 3 workers. If you have 60 tests, each shard runs roughly 20 tests in parallel. This cuts your CI time by about 60 percent compared to running everything sequentially.

Concurrency control. The concurrency block ensures that if you push twice in quick succession, the first run gets canceled. No wasted compute, no confusing duplicate reports.

Retry on flaky tests. The --retries=2 flag tells Playwright to retry any failing test up to two times before marking it as failed. This is critical because AI-resolved selectors occasionally take a beat longer on the first attempt. A retry handles transient timing issues without masking real failures.

Videos only on failure. Screenshots upload on every run (they are small and useful for auditing), but videos only upload when a test fails. This keeps your artifact storage manageable.

GitHub Pages deployment. On every merge to main, the merged HTML report gets deployed to GitHub Pages. Your entire team can view the latest test results at https://yourorg.github.io/yourrepo/e2e-report/ without downloading artifacts.
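The sharding claim is easy to sanity-check with a little arithmetic. The sketch below assumes an even split and uses made-up figures for per-test duration (0.5 minutes) and fixed job overhead (3 minutes for checkout, install, and browser download); Playwright's real distribution depends on your files and on `fullyParallel`, so treat the output as an estimate only. `shardSizes` and `estimateMinutes` are hypothetical helper names.

```typescript
// Split `totalTests` across `shards` as evenly as possible
// (shard sizes differ by at most one test).
function shardSizes(totalTests: number, shards: number): number[] {
  if (!Number.isInteger(totalTests) || !Number.isInteger(shards) || totalTests < 0 || shards < 1) {
    throw new RangeError('totalTests must be >= 0 and shards must be >= 1');
  }
  const base = Math.floor(totalTests / shards);
  const extra = totalTests % shards;
  return Array.from({ length: shards }, (_, i) => base + (i < extra ? 1 : 0));
}

// Wall-clock estimate: the largest shard determines the duration, and every
// job pays the same fixed overhead regardless of how many tests it runs.
function estimateMinutes(
  totalTests: number,
  shards: number,
  minutesPerTest: number,
  overheadMinutes: number
): number {
  const largest = Math.max(...shardSizes(totalTests, shards));
  return largest * minutesPerTest + overheadMinutes;
}

const sequential = estimateMinutes(60, 1, 0.5, 3);
const sharded = estimateMinutes(60, 3, 0.5, 3);
const savedPercent = Math.round((1 - sharded / sequential) * 100);

console.log(shardSizes(60, 3)); // [ 20, 20, 20 ]
console.log(sequential);        // 33
console.log(sharded);           // 13
console.log(savedPercent);      // 61
```

The fixed overhead is why three shards save roughly 60 percent rather than the 67 percent a naive division would suggest.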

7. Key Design Decisions That Make the Framework Production-Ready

Building a framework that works locally is easy. Building one that works reliably in CI, across teams, for months without constant maintenance — that is where design decisions matter. Here are the ones that separate Rohit’s approach from a weekend project.

Auth Session Reuse

Logging in before every test is the single biggest source of slowness and flakiness in E2E suites. The solution is to log in once, save the browser session to a file, and reuse it across all tests.

// global-setup.ts

import { chromium, FullConfig } from '@playwright/test';

async function globalSetup(config: FullConfig): Promise<void> {

const browser = await chromium.launch();

const context = await browser.newContext();

const page = await context.newPage();

// Login once with plain Playwright locators. ZeroStep's ai() expects a `test`

// object, which is not available inside global setup. The label and button

// names and the E2E_USER_* variables are assumptions; adjust them to your app.

await page.goto(process.env.BASE_URL + '/login');

await page.getByLabel('Email').fill(process.env.E2E_USER_EMAIL ?? 'testuser@company.com');

await page.getByLabel('Password').fill(process.env.E2E_USER_PASSWORD ?? 'TestPass123!');

await page.getByRole('button', { name: 'Sign In' }).click();

// Wait for successful login

await page.waitForURL('**/dashboard');

// Save the authenticated session

await context.storageState({ path: '.auth/session.json' });

await browser.close();

}

export default globalSetup;

Conditional Steps for Different Environments

// tests/fixtures/base.ts

import { test as base } from '@playwright/test';

export const test = base.extend({

// Automatically skip tests tagged @staging when running on prod

page: async ({ page }, use, testInfo) => {

const isProduction = process.env.BASE_URL?.includes('prod');

const hasStagingTag = testInfo.tags.includes('@staging');

if (isProduction && hasStagingTag) {

testInfo.skip(true, 'Skipping staging-only test in production');

}

// Set default timeout based on environment

const timeout = isProduction ? 45000 : 30000;

page.setDefaultTimeout(timeout);

await use(page);

},

});

Random Test Data Strategy

Hardcoded test data causes collisions in shared environments and masks bugs that only appear with certain inputs. Every test should generate its own data.

// tests/fixtures/testDataFactory.ts

import { faker } from '@faker-js/faker';

export class TestDataFactory {

static createUser() {

return {

firstName: faker.person.firstName(),

lastName: faker.person.lastName(),

email: faker.internet.email({ provider: 'testmail.com' }),

phone: faker.phone.number(),

password: faker.internet.password({ length: 12, memorable: true }),

};

}

static createAddress() {

return {

street: faker.location.streetAddress(),

city: faker.location.city(),

state: faker.location.state(),

zip: faker.location.zipCode(),

country: 'United States',

};

}

static createProduct() {

return {

name: faker.commerce.productName(),

price: parseFloat(faker.commerce.price({ min: 10, max: 500 })),

quantity: faker.number.int({ min: 1, max: 5 }),

description: faker.commerce.productDescription(),

};

}

static createOrder() {

return {

user: this.createUser(),

address: this.createAddress(),

products: Array.from(

{ length: faker.number.int({ min: 1, max: 3 }) },

() => this.createProduct()

),

notes: faker.lorem.sentence(),

};

}

}

Auto-Retry Configuration

// playwright.config.ts

import { defineConfig, devices } from '@playwright/test';

export default defineConfig({

testDir: './tests/e2e',

fullyParallel: true,

forbidOnly: !!process.env.CI,

retries: process.env.CI ? 2 : 0,

workers: process.env.CI ? 3 : undefined,

reporter: process.env.CI

? [['html'], ['json', { outputFile: 'results.json' }], ['github']]

: [['html'], ['line']],

use: {

baseURL: process.env.BASE_URL || 'http://localhost:3000',

trace: 'on-first-retry',

screenshot: 'on',

video: 'retain-on-failure',

storageState: '.auth/session.json',

actionTimeout: 10000,

navigationTimeout: 30000,

},

globalSetup: require.resolve('./global-setup'),

projects: [

{

name: 'chromium',

use: { ...devices['Desktop Chrome'] },

},

{

name: 'mobile-chrome',

use: { ...devices['Pixel 7'] },

},

],

});

The trace: 'on-first-retry' setting is particularly clever. It means Playwright records a full trace (DOM snapshots, network requests, console logs) only when a test fails and gets retried. This gives you rich debugging data for flaky tests without the storage overhead of tracing every successful run.

8. Framework Folder Structure

A clean folder structure is what separates a maintainable framework from a test graveyard. Here is the structure that Rohit’s team settled on after three iterations.

project-root/
├── .auth/
│   └── session.json              # Gitignored - stored auth session
├── .github/
│   └── workflows/
│       └── e2e-tests.yml         # CI/CD pipeline
├── tests/
│   ├── e2e/
│   │   ├── checkout.spec.ts      # E2E spec files grouped by feature
│   │   ├── search.spec.ts
│   │   ├── user-profile.spec.ts
│   │   └── admin-dashboard.spec.ts
│   ├── pages/
│   │   ├── CheckoutPage.ts       # Page objects with ai() methods
│   │   ├── SearchPage.ts
│   │   ├── ProfilePage.ts
│   │   └── AdminPage.ts
│   ├── fixtures/
│   │   ├── base.ts               # Extended test fixture with env logic
│   │   └── testDataFactory.ts    # Random data generators
│   └── utils/
│       ├── apiHelper.ts          # Direct API calls for test setup
│       └── waitHelpers.ts        # Custom wait conditions
├── screenshots/                  # Auto-captured during tests
├── test-results/                 # Playwright output (gitignored)
├── playwright-report/            # HTML report (gitignored)
├── CLAUDE.md                     # AI conventions file
├── playwright.config.ts          # Playwright configuration
├── global-setup.ts               # Auth and environment setup
├── package.json
└── tsconfig.json

Key things to notice. Spec files are organized by feature, not by page or component. Page objects live in their own directory. Fixtures and utilities are separate. And the CLAUDE.md file sits at the root where every AI tool can find it.

This structure is consistent with the patterns I described in my series on building real automation frameworks with AI. If you want the full walkthrough of how to arrive at this structure iteratively, check out Part 1: Vibe Coding an Automation Framework and Part 2: Building with Cursor AI.

9. The $0 AI Layer Breakdown

This is the part that gets the most skepticism, so let me be extremely specific about what “zero cost” means and what tools make it possible.

| Tool | What It Does in the Framework | Free Tier Details | Monthly Cost |
|---|---|---|---|
| Claude.ai (Free Tier) | Generates test code from prompts, reviews test logic, suggests assertions | Generous daily message limit, access to Claude 3.5 Sonnet | $0 |
| GitHub Copilot (Free Tier) | Inline code completion while writing tests, autocompletes ai() calls and assertions | 2,000 code completions/month, 50 chat messages/month | $0 |
| ChatGPT (Free Tier) | Second opinion on complex test scenarios, helps debug CI failures | Access to GPT-4o mini, limited GPT-4o | $0 |
| ZeroStep | AI element resolution at runtime | Free for open-source, free tier for private repos (1,000 AI steps/month) | $0 |
| Playwright | Test runner, browser automation, reporting | Fully open source, no usage limits | $0 |
| GitHub Actions | CI/CD pipeline execution | Free for public repositories on standard runners; 2,000 minutes/month for private repositories on the Free plan | $0 |

Total monthly cost: $0.

Now, let me be honest about the limitations. The free tiers have usage caps. If your team has ten SDETs all generating tests simultaneously, you might hit Claude’s daily limit or Copilot’s monthly completion cap. For a team of two to four people, the free tiers are more than sufficient. For larger teams, you are probably looking at $20 per month per person for Claude Pro or Copilot Pro — which is still orders of magnitude cheaper than enterprise testing platforms that charge $500 to $2,000 per seat per month.

The critical insight is this: the generative tools (Claude, Copilot, ChatGPT) are used for test creation and maintenance, not for test execution. ZeroStep is the one component that runs at execution time. It authenticates with your own token, and the free tier gives you 1,000 AI steps per month. A typical E2E suite of 50 tests with 10 AI interactions each means 500 AI steps per run. That gives you two full suite runs per month on the free tier, which is enough for development. In CI, you can fall back to data-testid selectors for high-frequency runs and reserve AI resolution for a small comprehensive suite. Keep in mind that at 500 steps per run, a nightly AI-resolved run of the full suite would exhaust the free quota after two nights, so either trim that suite or budget for a paid tier.
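The quota math above is worth keeping as a small calculator so you can plug in your own suite size. This is a sketch using the article's figures (1,000 steps per month, 50 tests, 10 AI steps per test); the function names are hypothetical, and the 30-night month is an assumption.

```typescript
// How many AI steps one full suite run consumes.
function stepsPerRun(tests: number, aiStepsPerTest: number): number {
  return tests * aiStepsPerTest;
}

// How many complete suite runs fit into a monthly AI-step quota.
function fullRunsPerMonth(monthlyQuota: number, tests: number, aiStepsPerTest: number): number {
  const perRun = stepsPerRun(tests, aiStepsPerTest);
  if (perRun <= 0) {
    throw new RangeError('A run must use at least one AI step');
  }
  return Math.floor(monthlyQuota / perRun);
}

// Largest number of tests that can run every night without exceeding the quota.
function maxNightlyTests(monthlyQuota: number, aiStepsPerTest: number, nights: number = 30): number {
  if (aiStepsPerTest <= 0 || nights <= 0) {
    throw new RangeError('aiStepsPerTest and nights must be positive');
  }
  return Math.floor(monthlyQuota / (nights * aiStepsPerTest));
}

console.log(stepsPerRun(50, 10));            // 500
console.log(fullRunsPerMonth(1000, 50, 10)); // 2
console.log(maxNightlyTests(1000, 10));      // 3
```

With these inputs, a nightly AI-resolved run stays inside the quota only if it covers about three tests, which is a useful number to know before wiring ZeroStep into a scheduled pipeline. Retries are not counted here and would reduce the headroom further.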

10. Results Benchmark: Before vs. After

Here are the actual numbers from Rohit’s team after three months of running this framework in production.

| Metric | Before (Selenium + Manual) | After (Playwright + ZeroStep + Claude) | Improvement |
|---|---|---|---|
| Time to write a new E2E test | 4-6 hours | 1-1.5 hours | ~70% faster |
| Weekly selector maintenance | 6-8 hours | 0.5-1 hour | ~88% reduction |
| Test flakiness rate | 18-22% | 3-5% | ~80% reduction |
| CI pipeline duration (60 tests) | 45 minutes | 12 minutes (sharded) | ~73% faster |
| Manual test runs before merging | 2-3 per PR | 0 | 100% eliminated |
| Time to onboard new SDET | 2-3 weeks | 3-5 days | ~70% faster |
| Tests per sprint (team of 6) | 15-20 new tests | 40-55 new tests | ~160% increase |
| Monthly AI tooling cost | $0 (no AI) | $0 (free tiers) | No change |
| Monthly testing platform cost | $3,200 (Selenium Grid) | $0 (GitHub Actions free tier) | $3,200 saved |

The most surprising number for me was the onboarding time. New SDETs joining the team could start writing tests on day three instead of week three because the AI handles the hardest part: figuring out how to interact with the UI. Instead of learning the application’s DOM structure, they just describe what they see on the screen.

The flakiness reduction from 18-22% down to 3-5% is also significant. Most of the remaining flakiness comes from genuine race conditions in the application (like a slow API response under load), not from selector brittleness. When your tests fail, they fail for real reasons, which means your team actually investigates failures instead of dismissing them as “just flaky tests.”

11. Getting Started: Step-by-Step Setup

Let me give you the quickest path from zero to a running framework.

# Step 1: Initialize a new Playwright project

npm init playwright@latest my-e2e-framework

cd my-e2e-framework

# Step 2: Install ZeroStep and faker

npm install @zerostep/playwright @faker-js/faker

# Step 3: Get your ZeroStep API key (free)

# Visit https://app.zerostep.com and sign up

# Add ZEROSTEP_TOKEN to your .env file

# Step 4: Create the folder structure

mkdir -p tests/{e2e,pages,fixtures,utils}

mkdir -p .auth screenshots

# Step 5: Add .auth to .gitignore

echo ".auth/" >> .gitignore

echo "test-results/" >> .gitignore

echo "playwright-report/" >> .gitignore

# Step 6: Create your CLAUDE.md

# Copy the template from Section 3 of this article

# Step 7: Run your first test

npx playwright test

From here, use the prompt template from Section 4 with Claude.ai (free) to generate your first spec file. Paste your CLAUDE.md conventions into the conversation, describe the user flow you want to test, and watch it produce a working test with ZeroStep selectors, random data, and proper assertions.

12. Common Pitfalls and How to Avoid Them

After helping three teams adopt this framework, I have seen the same mistakes repeatedly. Here is how to avoid them.

Pitfall 1: Using ai() for everything, including simple assertions. ZeroStep’s ai() is meant for element interactions, not for checking if an element exists. For assertions, use Playwright’s built-in expect() assertions on locators. They are faster, more reliable for verification, and do not consume AI steps.

Pitfall 2: Not setting up auth session reuse. If every test logs in through the UI, you are adding 5-10 seconds per test and introducing a common failure point. Set up globalSetup from day one.

Pitfall 3: Generating all tests with AI without reviewing. AI-generated tests sometimes include redundant steps or miss edge cases. Always review and refine. The AI gets you 85 percent of the way there; the last 15 percent is your expertise.

Pitfall 4: Skipping the CLAUDE.md file. Without conventions, every AI session starts from scratch. Ten minutes of writing CLAUDE.md saves hours of editing AI output.

Pitfall 5: Running AI-resolved selectors on every CI run. Use ZeroStep in development and nightly runs. For every-push CI, use a mix of data-testid and ZeroStep to balance speed and coverage.
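One way to implement the mix from Pitfall 5 is to decide per step, based on the kind of run. The sketch below is an assumption about how you might wire this up, not something ZeroStep provides: `StepTarget`, `runKind`, and `resolveWith` are hypothetical names. The only external facts it relies on are that GitHub Actions sets `CI=true` and sets `GITHUB_EVENT_NAME` to `schedule` for cron-triggered workflows.

```typescript
// Hypothetical description of one UI step in a test.
interface StepTarget {
  description: string; // plain-English instruction you would pass to ai()
  testId?: string;     // data-testid to use instead, when the element has one
}

type RunKind = "local" | "push" | "nightly";

// Classify the current run from environment variables.
function runKind(env: Record<string, string | undefined>): RunKind {
  if (env.CI !== "true") return "local";
  return env.GITHUB_EVENT_NAME === "schedule" ? "nightly" : "push";
}

// On every-push CI, prefer data-testid when one exists and keep AI resolution
// for steps that have no stable id. Locally and nightly, always use AI.
function resolveWith(step: StepTarget, kind: RunKind): "ai" | "testid" {
  if (kind === "push" && step.testId !== undefined) return "testid";
  return "ai";
}

const submit: StepTarget = { description: "Click the submit button", testId: "submit" };
console.log(resolveWith(submit, "push"));    // testid
console.log(resolveWith(submit, "nightly")); // ai
```

In a spec you would call `runKind(process.env)` once and branch on `resolveWith` before each interaction. Whether nightly runs can afford AI resolution on every step depends on your quota, so treat the nightly branch as a starting point.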

13. Frequently Asked Questions

Does ZeroStep work with Shadow DOM and iframe elements?

Yes. ZeroStep inspects the rendered DOM at the time of the ai() call, including Shadow DOM and accessible iframe content. For deeply nested iframes, you may need to switch context first using Playwright’s page.frameLocator(), then pass the frame as the page parameter. Shadow DOM works out of the box because ZeroStep operates at the accessibility tree level, not just the light DOM.

What happens when ZeroStep’s free tier runs out mid-pipeline?

ZeroStep returns a clear error when you exceed your monthly AI step quota. The test will fail with a descriptive message, not a cryptic timeout. The best strategy is to implement a fallback mechanism: try the ai() call first, and if it throws a quota error, fall back to a data-testid selector. This hybrid approach means your CI never fully blocks, even if you exhaust your free tier mid-month. Realistically, the free tier of 1,000 steps per month covers about two full runs of a 500-step suite, or lighter day-to-day development use, but not both.
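The fallback described above can be sketched as a small wrapper. I have kept it independent of ZeroStep so the logic is easy to test. The names `withQuotaFallback` and `looksLikeQuotaError` are hypothetical, and the message pattern is an assumption: inspect the error ZeroStep actually throws in your setup and adjust the predicate.

```typescript
// Assumption: the quota failure surfaces as a thrown Error whose message
// mentions the quota. Replace this check with whatever your runs show.
function looksLikeQuotaError(err: unknown): boolean {
  return err instanceof Error && /quota|limit exceeded/i.test(err.message);
}

// Try the AI-resolved action first; fall back only on a quota error.
async function withQuotaFallback<T>(
  aiAction: () => Promise<T>,
  fallbackAction: () => Promise<T>,
  isQuotaError: (err: unknown) => boolean = looksLikeQuotaError,
): Promise<T> {
  try {
    return await aiAction();
  } catch (err) {
    // Any other failure is a real test failure and must not be masked.
    if (!isQuotaError(err)) throw err;
    return fallbackAction();
  }
}
```

In a spec, `aiAction` would wrap something like `ai('Click the submit button', { page, test })` and `fallbackAction` would wrap `page.getByTestId('submit').click()`. Rethrowing non-quota errors matters: if the wrapper swallowed every failure, a genuinely missing button would silently pass through the fallback path.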

Can this framework handle visual regression testing?

Not directly. ZeroStep resolves element interactions, not visual comparisons. However, Playwright has built-in screenshot comparison via expect(page).toHaveScreenshot(), which you can combine with this framework. The workflow is: use ZeroStep for navigating and interacting with the UI, then use Playwright’s visual comparison for asserting that the result looks correct. This gives you behavioral E2E testing plus visual regression in the same suite.

How does this compare to Cypress with AI plugins?

Cypress has made progress with AI-assisted testing, but there are key differences. Playwright natively supports multiple browsers, multiple tabs, and true parallelism without workarounds. ZeroStep is purpose-built for Playwright’s architecture and leverages its accessibility tree directly. Cypress AI plugins often work by injecting scripts into the browser, which can cause conflicts with content security policies. In benchmarks, Playwright plus ZeroStep runs tests 2-3x faster than Cypress with comparable AI plugins, because Playwright drives the browser out of process over protocols such as CDP, whereas Cypress runs inside the browser behind a proxy.

Is ZeroStep production-safe, or is it still experimental?

ZeroStep has been in production use since 2024 and is stable for test automation. It is important to understand that ZeroStep runs in your test environment, not in your production application. It never modifies your application code or injects anything into your production builds. The AI resolution happens server-side on ZeroStep’s infrastructure, and only DOM metadata (not screenshots or user data) is sent for resolution. For teams with strict data policies, ZeroStep offers a self-hosted option. As of early 2026, companies like Netlify and several YC-backed startups are using it in their CI pipelines daily.

14. Conclusion: The Framework That Pays for Itself on Day One

Let me bring this back to where we started. Rohit Mangla’s team of six SDETs was spending roughly 40 hours a week on test maintenance alone. Broken selectors, flaky CI runs, manual regression testing before every release. That is an entire person’s worth of labor just keeping the lights on.

After switching to this framework — Playwright for speed, ZeroStep for resilience, Claude for generation, GitHub Actions for automation — that maintenance number dropped to under 5 hours a week. The freed-up time went into writing new tests, improving coverage, and doing exploratory testing that actually finds bugs humans care about.

The $0 AI layer is not a gimmick. It is a reflection of where the industry is right now: the best AI tools offer generous free tiers because they are competing for adoption. Two years from now, some of these free tiers will shrink. But by then, your framework will be established, your team will be proficient, and upgrading to a paid tier will be a rounding error in your engineering budget.

Start this weekend. Set up Playwright. Install ZeroStep. Write your CLAUDE.md. Generate your first test with Claude. Push it through GitHub Actions. The entire setup takes less than a day, and the ROI starts on day one.

The era of brittle selectors and expensive testing platforms is over. The teams that move first will ship faster, break less, and spend their time on problems that actually matter.

If you want more on building automation frameworks with AI assistance, check out my complete two-part series: Part 1: Vibe Coding an Automation Framework covers the conceptual foundation, and Part 2: Building with Cursor AI walks through the implementation. Together with this article, you have everything you need to build a world-class E2E testing framework without spending a single dollar on AI tooling.